What is Service Mesh?

Introduction

Running a business application is not enough on its own to guarantee smooth operations and a well-governed, end-to-end workflow. You also have to make sure that data moves securely between services. A service mesh is designed to do exactly that. From supporting inter-service communication to strengthening application security, it has a lot to offer.

Not sure what it is, how it works, or how to use it to your benefit? The sections below answer these questions.

Service Mesh Definition

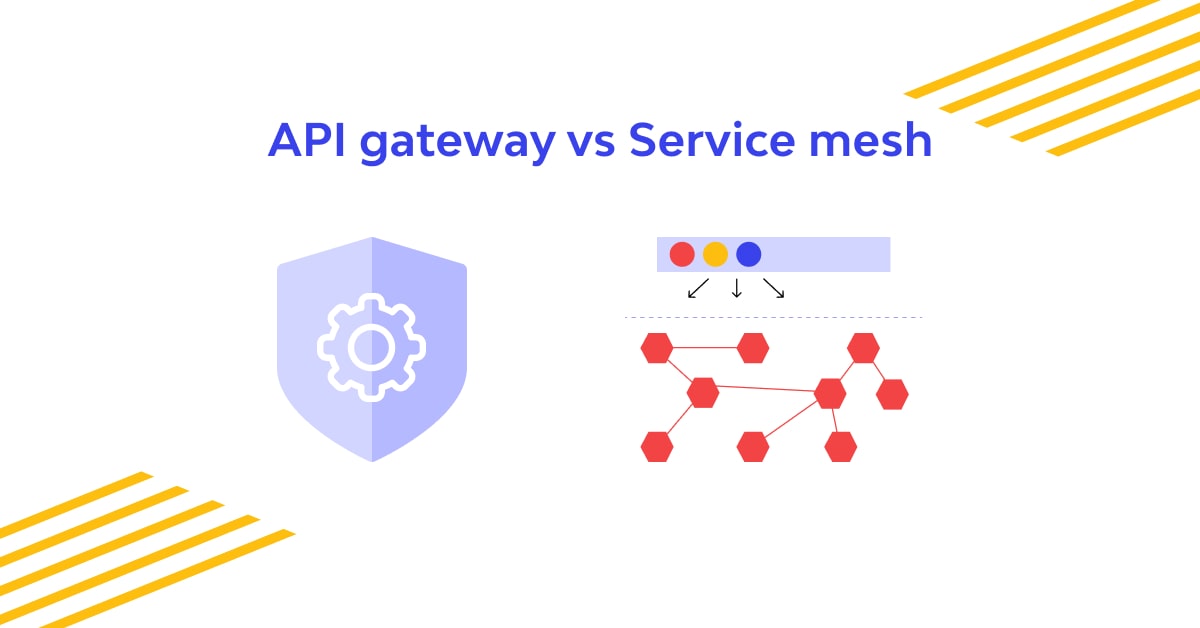

On a structural level, a service mesh is a collection of network proxies. Each proxy is deployed alongside a service instance. The mesh is operated and managed separately from the application code, so the services do not have to implement this logic themselves.

On a functional level, it is a capability of the platform layer rather than of the application itself, and it is responsible for improving the scalability, reliability, and security of the application.

Service Mesh in More Detail

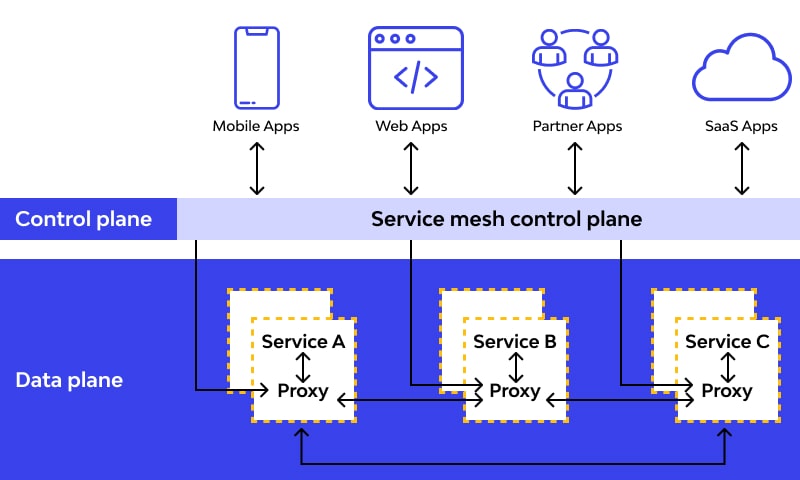

The proxies are commonly referred to as the data plane. The data plane intercepts the calls exchanged between services and carries out the actions related to those calls.

The management side is the control plane. It oversees how the proxies behave and provides APIs that developers can use as needed. It also gives application operators a single place from which to govern the mesh. Together, the data plane and the control plane form the service mesh architecture.
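
The split between the two planes can be sketched in a few lines of Python. This is only a toy model under stated assumptions: the `Proxy` and `ControlPlane` classes, their methods, and the configuration keys are hypothetical names chosen for illustration and do not correspond to the API of any real mesh.

```python
class Proxy:
    """Data plane: one proxy that sits next to a service instance."""

    def __init__(self, name):
        self.name = name
        self.config = {}     # last configuration received
        self.version = 0     # version number of that configuration

    def apply(self, version, config):
        # Store a private copy so later changes in the control plane
        # do not silently alter this proxy's behavior.
        self.version = version
        self.config = dict(config)


class ControlPlane:
    """Control plane: keeps track of proxies and pushes config to them."""

    def __init__(self):
        self.proxies = []
        self.version = 0

    def register(self, proxy):
        self.proxies.append(proxy)

    def push(self, config):
        # Every push gets a new version and reaches every registered proxy.
        self.version += 1
        for proxy in self.proxies:
            proxy.apply(self.version, config)
        return self.version


control_plane = ControlPlane()
cart_proxy = Proxy("cart")
orders_proxy = Proxy("orders")
control_plane.register(cart_proxy)
control_plane.register(orders_proxy)
control_plane.push({"retries": 2, "timeout_seconds": 1.0})
```

After the push, both proxies hold the same configuration version, which is the essence of the arrangement: the proxies do the work, and the control plane decides how they do it.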

As cloud-native applications have become the norm, service meshes have gained a lot of attention in the industry as a practical way to improve application security.

A cloud-native application typically comprises many service instances, often managed by Kubernetes. Because these instances are scheduled dynamically onto physical nodes and change state constantly, service-to-service communication becomes complex and tedious to manage. This also affects the application's overall runtime behavior.

It is widely acknowledged that service meshes have made service-to-service communication easier. They can also have a positive effect on the overall performance of cloud-based applications.

Here are some well-known service mesh examples:

- AWS App Mesh is based on the Envoy proxy

- Azure Service Fabric is an open-source platform from Microsoft for building and running microservices

- Google Cloud Istio is a packaged edition of Istio that is delivered with Google Kubernetes Engine. Istio is one of the earliest service meshes designed to run natively on Kubernetes.

How does a Service Mesh work?

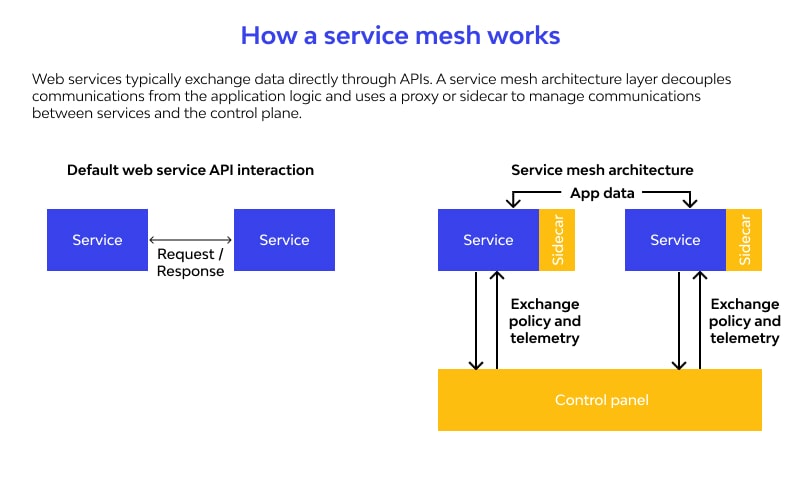

A service mesh doesn't do anything new. Rather, it does the same things differently. Every application architecture needs rules that decide how, when, and which requests travel from one communication point to another. In most cases, these rules are implemented at the application level.

A service mesh takes these predefined rules out of the application layer and places them in the infrastructure layer. Now, let's look at how it makes this happen.
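
To make the idea of moving rules out of the application more concrete, here is a minimal sketch in Python. It assumes the rules are simple path-prefix matches kept in a single table; `RouteRule`, `RULES`, `resolve`, and the service names are hypothetical and only serve the illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RouteRule:
    """One hypothetical routing rule held by the mesh, not by a service."""
    path_prefix: str        # which requests the rule applies to
    destination: str        # logical name of the target service
    timeout_seconds: float  # how long the caller may wait


# The rules live in one table owned by the infrastructure layer.
# Order matters: the first matching prefix wins.
RULES = [
    RouteRule("/orders/", "orders-service", 2.0),
    RouteRule("/payments/", "payments-service", 5.0),
    RouteRule("/", "frontend-service", 1.0),  # catch-all, kept last
]


def resolve(path, rules=RULES):
    """Return the first rule whose prefix matches the request path."""
    for rule in rules:
        if path.startswith(rule.path_prefix):
            return rule
    raise LookupError(f"no route for {path}")
```

With the table in one place, changing a timeout or a destination means editing a rule, not redeploying every service that makes the call.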

As mentioned earlier in this article, a service mesh consists of a collection of network proxies. Assuming you already know how proxies work, we can move on to how the mesh operates.

When a service mesh is in place, all calls made by microservices are routed through the nearby infrastructure layer, where the proxies handle them on behalf of the network. These proxies are called sidecars, because each one runs alongside a microservice rather than inside it.
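
The sidecar idea can be illustrated with a small in-process sketch. It assumes no real networking: a dictionary stands in for the network, and retrying on `ConnectionError` is just one example of behavior a sidecar might take over. `Sidecar`, `make_flaky_inventory`, and the service names are hypothetical.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


class Sidecar:
    """Toy stand-in for a sidecar proxy that sits next to one service."""

    def __init__(self, owner: str, registry: Dict[str, Handler], max_attempts: int = 3):
        self.owner = owner
        self.registry = registry      # service name -> handler (stands in for the network)
        self.max_attempts = max_attempts
        self.calls_seen = 0           # simple telemetry counter

    def call(self, target: str, payload: str) -> str:
        """Forward an outbound call, retrying on connection errors."""
        self.calls_seen += 1
        last_error = None
        for _ in range(self.max_attempts):
            try:
                return self.registry[target](payload)
            except ConnectionError as exc:
                last_error = exc
        raise last_error


def make_flaky_inventory(failures: int) -> Handler:
    """Build a handler that fails `failures` times before succeeding."""
    state = {"left": failures}

    def handler(payload: str) -> str:
        if state["left"] > 0:
            state["left"] -= 1
            raise ConnectionError("inventory unavailable")
        return f"stock-ok:{payload}"

    return handler


registry = {"inventory": make_flaky_inventory(failures=2)}
cart_sidecar = Sidecar("cart", registry)
# The cart service makes one call; the sidecar absorbs the two failures.
result = cart_sidecar.call("inventory", "sku-1")
```

The cart service only sees a successful response. The retries and the call counter belong to the sidecar, which is why the service code itself stays free of communication logic.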

Is a Service Mesh Really Essential for You?

As application security has become a top concern for developers, service meshes are likely to become a mainstream technology. However, security is not the only problem they address. A mesh can help in several other situations as well.

Use it when you need to run microservices across multiple clusters. It lets developers make the mesh responsible for communication, freeing the services from this obligation. Communication then stays simple and consistent even when multiple clusters are involved.

If you need to enforce multiple policies on a single application, a service mesh is a useful choice. It lets the developer decide whether a policy should be applied at a granular level or across the whole mesh.

Applications that need robust rollout strategies can benefit greatly from it. For example, it helps considerably with implementing a blue/green rollout strategy.
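
One way to picture granular versus mesh-wide policies is a set of defaults with per-service overrides. The sketch below assumes exactly that model; the policy keys, values, and service names are made up for illustration and are not taken from any specific mesh.

```python
# Policies that apply to every service in the mesh unless overridden.
MESH_DEFAULTS = {"mtls": "strict", "timeout_seconds": 1.0, "retries": 2}

# Granular policies: only the listed keys differ for the listed services.
SERVICE_OVERRIDES = {
    "reports": {"timeout_seconds": 10.0},
    "legacy-billing": {"mtls": "permissive", "retries": 0},
}


def effective_policy(service):
    """Merge mesh-wide defaults with any per-service overrides."""
    policy = dict(MESH_DEFAULTS)                      # start from the defaults
    policy.update(SERVICE_OVERRIDES.get(service, {})) # granular values win
    return policy
```

A service without an entry in `SERVICE_OVERRIDES` simply inherits the mesh-wide policy, while `reports` keeps strict mTLS but gets a longer timeout.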
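
A blue/green rollout keeps two versions deployed and sends all traffic to one of them at a time. The following sketch assumes the mesh exposes a single switch between the two; `BlueGreenRouter` and the backend names are hypothetical.

```python
class BlueGreenRouter:
    """Send all traffic to either the blue or the green version."""

    def __init__(self, blue, green):
        self.backends = {"blue": blue, "green": green}
        self.active = "blue"  # blue serves traffic until the switch

    def route(self):
        """Return the backend that currently receives traffic."""
        return self.backends[self.active]

    def switch(self):
        """Flip traffic to the other version; calling it again rolls back."""
        self.active = "green" if self.active == "blue" else "blue"
        return self.active


router = BlueGreenRouter(blue="checkout-v1", green="checkout-v2")
before = router.route()       # old version serves traffic
router.switch()
after = router.route()        # new version takes over in one step
router.switch()
rolled_back = router.route()  # rollback is the same operation
```

Because the switch happens in the routing layer, neither version of the service needs to know that a rollout is in progress.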

Advantages and disadvantages of service mesh

Like any technology, a service mesh has both strengths and weaknesses, and it is important to know both sides before putting it to use.

Some of the key benefits of this technology are as follows.

- Developers seeking seamless inter-service communication for microservices and containers will benefit hugely from this technology.

- Because it enables communication at the infrastructure layer, finding errors in the communication process is more convenient.

- Sidecar placement makes network service management easier than before.

- Features such as end-to-end encryption, user or API authentication, and API authorization can become part of the application without much fuss. Together, these features improve API security.

While these benefits are notable, we can't ignore the drawbacks of a service mesh:

- The number of runtime instances increases, because every service instance gets its own sidecar proxy.

- It increases the time spent in communication because of the extra steps involved. With a service mesh, every service call has to pass through the sidecar.

- It does little for integration with other services or systems, so the integration options of the system remain limited.

- It does not address routing type or transformation mapping issues, so a service mesh is not advisable if those are the problems you need to solve.
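
To get a feel for the latency drawback, here is a rough back-of-the-envelope sketch. It assumes that each call crosses two sidecars (the caller's and the callee's) and that each adds a fixed delay. The function names and all numbers are illustrative assumptions, not measurements.

```python
def call_latency_ms(service_ms, per_proxy_ms, proxies_per_call=2):
    """Latency of one call: the service's own time plus the proxy hops."""
    return service_ms + per_proxy_ms * proxies_per_call


def chain_latency_ms(service_times_ms, per_proxy_ms):
    """Latency of a chain of sequential calls, one entry per service."""
    return sum(call_latency_ms(t, per_proxy_ms) for t in service_times_ms)


# Three services called one after another, with an assumed 1 ms per proxy.
without_mesh = sum([10, 20, 5])
with_mesh = chain_latency_ms([10, 20, 5], per_proxy_ms=1)
overhead = with_mesh - without_mesh
```

Under these assumptions the overhead grows with the number of calls in the chain, so deep call graphs feel the extra hops more than shallow ones.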

The future of the Service Mesh

As demand for microservices and the focus on application security are likely to keep growing, the service mesh seems to have a bright future. The launch of Istio 1.0 in 2018 caused quite a stir in the developer community and is considered a significant improvement in the service mesh architecture.

Over time, the service mesh is likely to become a standard part of secure microservices development.

The Service Mesh and Zero-trust Security Connection

Today, zero trust is a widely recommended security approach for cloud and other applications. By treating every user and every request as untrusted until verified, it aims to strengthen security at both the application and the infrastructure level.

Continuous authentication and verification of every communication and access request is the most common way to achieve it. As zero-trust security and the service mesh both aim to improve application security, they work well together.

Here is how a service mesh supports the security measures needed to build a zero-trust ecosystem:

- It supports mutual Transport Layer Security (mTLS) for secure communication between microservices.

- It takes care of microservice identity and authorization management

- It supports the implementation of access control management

- On Kubernetes, a service mesh lets developers apply the principle of least privilege to roles and microservices with the help of RBAC

- Its implementation keeps the application safe from attacks like packet sniffing, data exfiltration, and service impersonation.
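
The points above boil down to a deny-by-default check on every call. The sketch below assumes the caller's identity has already been verified (for example through an mTLS handshake, represented here by a simple flag) and that permissions are kept in an allow-list. `ALLOWED`, `is_allowed`, and the service names are hypothetical.

```python
# Allow-list: (source service, destination service) -> permitted methods.
# Anything not listed here is denied.
ALLOWED = {
    ("cart", "inventory"): {"GET"},
    ("checkout", "payments"): {"POST"},
}


def is_allowed(source, destination, method, identity_verified):
    """Decide whether one service-to-service call may proceed."""
    if not identity_verified:
        # No verified identity means no access, whatever the allow-list says.
        return False
    return method in ALLOWED.get((source, destination), set())
```

For instance, `is_allowed("cart", "inventory", "GET", True)` passes, while the same call with an unverified identity, a different method, or an unlisted pair of services is rejected.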

Service Mesh Security with Wallarm

Wallarm is a dependable way to put a service mesh into action and manage it effectively. Whether you want to use Istio or prefer Envoy, Wallarm helps you implement it while keeping a constant focus on security and smooth operation.

Our API and service mesh security solutions are feature-rich and automated to the extent that you need. So, begin your API security platform journey with us and enjoy peace of mind.
