What is Serverless Architecture?

Introduction

While innovation and upgrades are happening all around us, the underlying architecture of digital solutions and applications cannot remain unaffected. Serverless is a relatively new approach that takes the burden of infrastructure off administrators' shoulders. It is one of the latest innovations to make application development easier than before.

Not sure what it is or why the developer community praises it? The sections below unpack this technology.

Intro: Defining Serverless Architecture

Exploding onto the tech scene, serverless architecture is forcing even the most entrenched teams to take notice. This cloud computing model redistributes responsibilities by entrusting server orchestration and provisioning to the cloud service provider. Serverless code runs in ephemeral compute containers that are stateless and event-triggered. These containers are fully managed by the cloud service provider and may last for only a single invocation.

A Closer Look at 'Serverless'

"Serverless" is ironically named: it does not imply an absence of servers. It simply relieves software engineers of dealing with them. With server maintenance, capacity decisions, and scaling handled by the cloud service provider, developers can focus on the application's logic, which improves productivity.

The Evolution of Serverless Architecture

Serverless architecture did not materialize out of nowhere. Its trajectory runs from conventional server-centric architecture, through the virtualization era, to the age of cloud computing. What sets serverless apart from its forebears is its abstraction of servers. Unlike older models that require developers to manually set up, customize, and govern servers, serverless takes a hands-off approach in which those tasks are delegated to the cloud service provider.

Unraveling Serverless Architecture

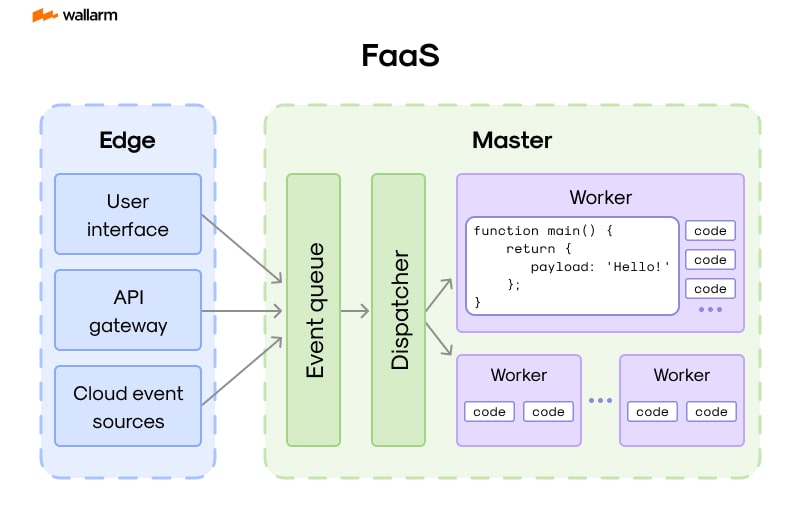

'Functions as a Service' (FaaS) is the bedrock of the serverless framework. With this approach, developers write and deploy code as functions: small, stateless, independent units of code. They run in response to events such as HTTP requests, database operations, queue messages, file uploads, or other app-specific actions.

A serverless system can be envisaged as a mesh of these functions, each playing a unique role. The ability to scale these functions individually lends unmatched adaptability and power, driving impactful performance.
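One way to picture this mesh of functions is a registry that maps event types to small handlers. The sketch below is illustrative only: the `on` decorator, the `dispatch` helper, and the event names are hypothetical and do not correspond to any provider's API.

```python
from typing import Any, Callable, Dict

# Hypothetical registry: maps an event type to the function that handles it.
HANDLERS: Dict[str, Callable[[Dict[str, Any]], Any]] = {}


def on(event_type: str):
    """Register a function as the handler for one event type."""
    def register(func):
        HANDLERS[event_type] = func
        return func
    return register


@on("http.request")
def greet(event):
    return f"Hello, {event.get('name', 'world')}!"


@on("file.uploaded")
def record_upload(event):
    return f"Stored {event['filename']} ({event['size']} bytes)"


def dispatch(event_type: str, event: Dict[str, Any]) -> Any:
    """Stand-in for the platform: look up the function and invoke it."""
    return HANDLERS[event_type](event)


if __name__ == "__main__":
    print(dispatch("http.request", {"name": "Ada"}))
    print(dispatch("file.uploaded", {"filename": "a.png", "size": 2048}))
```

On a real platform the provider plays the role of `dispatch`, and each function can be scaled independently of the others.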

The shift from legacy systems to serverless may be understood via the following comparison:

| Legacy Architectures | Serverless Blueprint |
|---|---|
| Manual server control | Provider-managed servers |
| Hefty monolithic apps | Modularity via microservices |
| Restricted scalability | Automatic, on-demand scalability |
| Heavy operational costs | Pay-as-you-go pricing |
| Extended deployment timeline | Swift, hassle-free launches |

In conclusion, the introduction of serverless architecture has reshaped the application development and delivery terrain, yielding benefits such as lower operating costs, enhanced scalability, and quicker market launches. That said, no technology is without its drawbacks and limitations, which will be explored in upcoming sections.

The Underlying Technology: FaaS

In today's rapidly advancing landscape of event-driven programming, it's essential to shed light on Serverless opportunities by delving into the keystone of Function as a Service (FaaS). This pivotal service encapsulates the quintessential merits of serverless computing, reshaping how tech experts architect and launch online solutions.

Decoding FaaS

FaaS, short for Function as a Service, is a category of cloud service that lets developers run individual pieces of code (referred to as functions) in response to specific events. What distinguishes FaaS is that it frees developers from infrastructure work, removing worries about server management or runtime configuration. The developer's focus stays on writing good code, while the FaaS provider administers the platform.

In the FaaS model, applications are broken down into separate functions, each handling a single task. These functions are stateless: they retain no memory of past invocations. A function can be triggered by anything from an end user interacting with a web page to a document being uploaded to cloud storage.

Fusion of FaaS and Serverless Structure

Serverless architecture owes much to FaaS. As noted earlier, the term "serverless" doesn't deny the existence of servers. Instead, it hides their operational complexity behind a simple platform for developers. The FaaS provider is responsible for operating the servers, keeping the service available, and scaling automatically as demand grows.

Because the infrastructure is managed for them, developers can concentrate on writing code. They write a function and hand it to a FaaS platform; the platform takes care of the rest.

Core Traits of FaaS:

- Response to Events: Functions lie dormant until an event triggers them. An event might be a change in a database, an API request, or a user interaction on a webpage.

- Statelessness: Each function execution is independent and preserves no data from previous executions, which enables seamless scaling because many instances can run concurrently.

- Brief Operations: FaaS functions spring to life momentarily to address an event and then cease their role.

- Controlled Infrastructure: Handling complex infrastructural needs, such as server setup, upkeep, and system scaling, falls within the obligations of the FaaS provider.

- Usage-related Billing: FaaS charges only for the time a function actually runs, often metered in milliseconds, which makes it cost-efficient for intermittent workloads.
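The statelessness trait listed above can be sketched as follows. The function keeps nothing between calls; any data that must persist lives in an external store passed in from outside. `CounterStore`, `InMemoryStore`, and `count_visit` are hypothetical names, and the in-memory store merely stands in for a real database or cache.

```python
from typing import Dict, Protocol


class CounterStore(Protocol):
    def increment(self, key: str) -> int: ...


class InMemoryStore:
    """Stand-in for an external database or cache (hypothetical)."""

    def __init__(self) -> None:
        self._counts: Dict[str, int] = {}

    def increment(self, key: str) -> int:
        self._counts[key] = self._counts.get(key, 0) + 1
        return self._counts[key]


def count_visit(event: dict, store: CounterStore) -> dict:
    """Stateless function: everything it needs arrives via the event or the store."""
    page = event["page"]
    return {"page": page, "visits": store.increment(page)}
```

Because `count_visit` holds no state of its own, any number of copies could run side by side against the same store.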

FaaS Utility: A Quick Look

Let's take an example of a web application that provides an image upload feature and needs to create a thumbnail for every image uploaded.

Traditionally, a server would have to be running constantly, waiting for potential uploads. FaaS changes this: the same job can be done by writing a function that generates thumbnails and hosting it in a FaaS environment. The function is launched each time a user uploads an image, creates the thumbnail, and then exits. You pay only for the time spent producing thumbnails rather than for 24/7 server availability.

In summary, FaaS is a crucial cog in the serverless machine. It shifts the developer's attention from managing infrastructure to writing high-quality code, leading to higher productivity, less operational overhead, and potential cost savings.
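A minimal sketch of such an upload-triggered function is shown below. It assumes an S3-style event whose first record carries the object key, and it takes the image dimensions from hypothetical `width` and `height` fields so that the example stays dependency-free. Actual resizing would be done with an imaging library and is left out here.

```python
from typing import Tuple

MAX_SIDE = 128  # assumed thumbnail bound in pixels


def thumbnail_size(width: int, height: int, max_side: int = MAX_SIDE) -> Tuple[int, int]:
    """Scale (width, height) to fit within max_side, keeping the aspect ratio."""
    scale = min(max_side / width, max_side / height, 1.0)
    return max(1, round(width * scale)), max(1, round(height * scale))


def handle_upload(event: dict) -> dict:
    """Runs once per upload event, then exits; nothing stays running in between."""
    key = event["Records"][0]["s3"]["object"]["key"]
    # Dimensions are passed in directly to keep the sketch dependency-free;
    # a real function would read them from the uploaded image.
    width, height = event["width"], event["height"]
    return {
        "source": key,
        "thumbnail": f"thumbnails/{key}",
        "size": thumbnail_size(width, height),
    }
```

Images already smaller than the bound are left at their original size, since the scale factor is capped at 1.0.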

Exploring the Benefits of Serverless Architecture

No survey of modern software development can overlook the rise of serverless technology. This section highlights the benefits that have earned it a place in many enterprises and made it a preferred tool for many software developers.

Seamless Expansion

Scalability is built into serverless technology, which gives it an edge over earlier server-dependent applications. Older systems required human intervention to resize capacity as application demand fluctuated, a labor-intensive process that often led to wasted or insufficient resources.

By contrast, serverless platforms scale automatically to match the current workload. Whether requests arrive as a trickle or a torrent, the platform adjusts in real time without the need for human oversight.

Economical Operation

With a consumption-based pricing structure, serverless technology charges its users solely for the computing time they use. No activity means no billing, in stark contrast to legacy server-dependent models that charge regardless of how much of the resource is used. This cost efficiency makes serverless technology a favorite for applications with intermittent workloads.

Productivity Increment

The adoption of serverless technology untethers developers from the rigors of server monitoring and upkeep, paving the way for a concentrated focus on code formulation and feature innovation. This enhanced productivity leads to expedited and amplified code deployments. Serverless technology simplifies the complexities of software creation, empowering even the novices to make a valuable contribution towards the project objective.

Real-time Event Responsiveness

Operating on an event-driven model, serverless technology can respond in near real time, making it a strong choice for applications that handle live information, such as IoT devices, real-time data analytics, and mobile applications. The platform invokes functions in response to specific events, which supports a dynamic and engaging user experience.

Latency Reduction

Serverless functions can reduce latency by running code close to the user instead of relying on a single central server that may be far away. Providers such as AWS Lambda and Google Cloud Functions let you deploy functions to multiple geographic regions, and some offer edge variants, so requests can be served from a nearby location.

Unwavering and Dependable

Serverless platforms typically include built-in fallback mechanisms. When an invocation fails, the platform can retry the function automatically, which helps keep the user experience smooth. This kind of resilience is harder to achieve with conventional server-centric systems.
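The retry behavior described above can be approximated in a few lines. This is a generic sketch of retrying with exponential backoff, not any provider's actual retry policy; `invoke_with_retries` and its parameters are hypothetical.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def invoke_with_retries(func: Callable[[], T], max_attempts: int = 3,
                        base_delay: float = 0.1,
                        sleep: Callable[[float], None] = time.sleep) -> T:
    """Call func, retrying on any exception with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure
            # Wait base_delay, then twice that, then four times, and so on.
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

Automatic retries are only safe when the function is idempotent, meaning that running it twice for the same event has the same effect as running it once.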

In conclusion, serverless technology, replete with attributes such as seamless expansion, consumption-based pricing, productivity enhancement, real-time event responsiveness, latency optimization, and dependability, has emerged as the ideal choice for enterprises and developers looking to invent contemporary, scalable, and economical applications.

Dissecting the Downsides of Serverless Architecture

While serverless architecture has been hailed as a game-changer in the world of software development, it is not without its drawbacks. This section will delve into some of the key challenges and limitations associated with serverless architecture, providing a balanced perspective on this innovative technology.

Cold Start Issue

One of the most commonly cited downsides of serverless architecture is the "cold start" issue. This refers to the delay that occurs when a function is invoked after being idle for a certain period. The delay is caused by the time it takes for the cloud provider to allocate resources to the function. This can be a significant issue for applications that require real-time responses, as it can lead to noticeable latency.
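A common way to soften cold starts is to do expensive setup once and reuse it while the execution environment stays warm. The sketch below simulates that pattern locally; `_create_client` and its delay are hypothetical stand-ins for real initialization work such as loading configuration or opening connections.

```python
import time

_client = None  # survives between invocations while the environment stays warm


def _create_client(startup_seconds: float = 0.05) -> dict:
    """Stand-in for slow setup such as loading config or opening connections."""
    time.sleep(startup_seconds)
    return {"ready": True}


def handler(event: dict, context: object = None) -> dict:
    global _client
    cold = _client is None
    if cold:
        _client = _create_client()  # cost paid only on a cold start
    return {"cold_start": cold, "echo": event}
```

The first call pays the setup cost; later calls in the same process skip it. This reduces the cost of warm invocations but does not remove the initial delay.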

Vendor Lock-In

Serverless architecture is heavily dependent on third-party vendors. This means that once you choose a specific provider, such as AWS Lambda or Google Cloud Functions, it can be difficult to switch to another provider without significant changes to your codebase. This dependency can limit flexibility and potentially lead to higher costs if the vendor decides to increase their prices.
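One way to limit lock-in is to keep business logic free of provider-specific types and wrap it in thin adapters. The sketch below is a hedged illustration: `create_order` and both adapters are hypothetical, and the first adapter assumes an API Gateway proxy-style event that carries the request as a JSON string in `body`.

```python
import json
from typing import Any, Dict


def create_order(item: str, quantity: int) -> Dict[str, Any]:
    """Provider-agnostic business logic: no cloud-specific types appear here."""
    if quantity < 1:
        raise ValueError("quantity must be at least 1")
    return {"item": item, "quantity": quantity, "status": "created"}


def aws_style_handler(event: Dict[str, Any], context: Any = None) -> Dict[str, Any]:
    """Thin adapter for an API Gateway proxy-style event (JSON string in 'body')."""
    payload = json.loads(event["body"])
    order = create_order(payload["item"], payload["quantity"])
    return {"statusCode": 200, "body": json.dumps(order)}


def generic_http_handler(json_body: str) -> str:
    """Thin adapter for any platform that passes the raw JSON request body."""
    payload = json.loads(json_body)
    return json.dumps(create_order(payload["item"], payload["quantity"]))
```

With this split, moving to another provider means rewriting only the adapter, not `create_order`.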

Limited Customization

Serverless architecture offers less control over the environment compared to traditional server-based solutions. This can limit the ability to customize the system to meet specific needs. For instance, you may not be able to use a specific programming language or a particular version of a language. Additionally, you may have less control over security and privacy settings.

Debugging and Monitoring Challenges

Debugging and monitoring serverless applications can be more complex compared to traditional applications. This is because serverless applications are distributed and event-driven, making it harder to trace errors and monitor performance. While there are tools available to help with this, they often require additional setup and configuration.
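Tracing becomes easier when every invocation emits a structured log line that carries a shared correlation ID. The wrapper below is a minimal sketch of that idea; `traced`, the `correlation_id` field, and the log layout are assumptions made for illustration and are not tied to any particular monitoring tool.

```python
import json
import time
import uuid
from typing import Any, Callable, Dict


def traced(func: Callable[[Dict[str, Any]], Any],
           emit: Callable[[str], None] = print) -> Callable[[Dict[str, Any]], Any]:
    """Wrap a handler so every invocation emits one JSON log line."""
    def wrapper(event: Dict[str, Any]) -> Any:
        # Reuse the caller's correlation ID if present so logs can be joined
        # across functions; otherwise start a new trace.
        correlation_id = event.get("correlation_id") or str(uuid.uuid4())
        started = time.perf_counter()
        status = "ok"
        try:
            return func({**event, "correlation_id": correlation_id})
        except Exception:
            status = "error"
            raise
        finally:
            emit(json.dumps({
                "function": func.__name__,
                "correlation_id": correlation_id,
                "status": status,
                "duration_ms": round((time.perf_counter() - started) * 1000, 3),
            }))
    return wrapper
```

Passing the same `correlation_id` from one function to the next lets a log search reconstruct the path of a single request through the system.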

Timeouts and Memory Limits

Serverless functions have strict limits on execution time and memory usage. For example, AWS Lambda functions have a maximum execution time of 15 minutes and a maximum memory allocation of 10,240 MB (raised from the earlier 3,008 MB limit). If your function exceeds these limits, it will be terminated, potentially leading to incomplete processing.
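A function can guard against being cut off mid-task by checking how much time remains and stopping early. In the Python runtime, the Lambda context object exposes `get_remaining_time_in_millis()`. The sketch below uses a local `FakeContext` stand-in so it can run anywhere; `process_batch` and the safety margin are hypothetical.

```python
import time
from typing import List, Tuple


class FakeContext:
    """Local stand-in for the Lambda context object (for testing only)."""

    def __init__(self, timeout_ms: int) -> None:
        self._deadline = time.monotonic() + timeout_ms / 1000

    def get_remaining_time_in_millis(self) -> int:
        return max(0, int((self._deadline - time.monotonic()) * 1000))


def process_batch(items: List[int], context,
                  safety_margin_ms: int = 50) -> Tuple[List[int], List[int]]:
    """Process items until time runs short; return (results, unprocessed)."""
    results: List[int] = []
    for index, item in enumerate(items):
        if context.get_remaining_time_in_millis() < safety_margin_ms:
            return results, items[index:]  # hand the rest to a later invocation
        results.append(item * item)  # placeholder for real work
    return results, []
```

Returning the unprocessed items makes it possible to queue them for another invocation instead of losing them when the timeout hits.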

Cost Predictability

While serverless architecture can lead to cost savings due to its pay-per-use model, it can also make costs less predictable. If your application experiences a sudden surge in usage, your costs could skyrocket. This can make budgeting more challenging compared to traditional server-based solutions where costs are more predictable.

In conclusion, while serverless architecture offers numerous benefits, it also has its share of challenges. It's important to weigh these downsides against the potential advantages before deciding whether serverless is the right choice for your project. In the next section, we will compare serverless architecture to traditional architecture to provide a more comprehensive understanding of these two approaches.

Comparing Serverless Architecture to Traditional Architecture

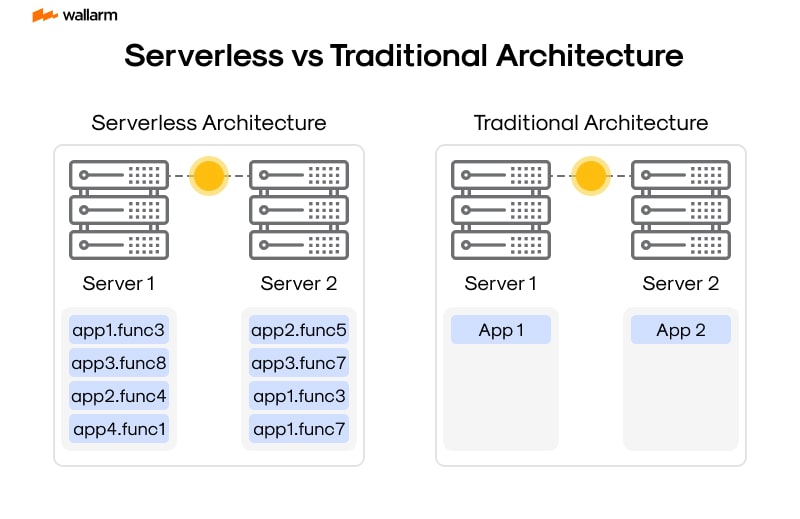

Computing systems rely on well-designed architecture to function well and to leave room for growth. In this section, we will dissect two main approaches: serverless architecture, referred to below as cloud-native structures, and conventional models, discussing their distinct features, merits, and limitations.

Conventional Models Explained

More commonly referred to as on-premises systems or server-dependent models, conventional architectures run applications on servers directly owned, supervised, and operated by the organization. This approach requires a substantial investment in hardware and software, along with a dedicated IT team to look after the machines.

We can classify the conventional model into two groups: unified structures (monoliths) and compartmentalized services (microservices). In unified structures, each part of the application directly relies on and affects the other parts. In contrast, compartmentalized services split the application into small, autonomous services. Each of these can be built, deployed, and scaled independently, which gives them a substantial degree of autonomy and flexibility.

Overview of Cloud-Native Structures

In stark contrast to its counterpart, the cloud-native structure enables cloud services to manage the servers. This absolves developers of infrastructure concerns, allowing them to exclusively concentrate on coding, with the cloud provider overseeing the framework, scalability, and server administration. This model operates on the principle of Function-as-a-Service (FaaS), which deconstructs applications into standalone functions, capable of individual invocation and expansion.

Distinguishing Aspects Between the Two Models

Hardware Supervision

Conventional models hold organizations accountable for managing and maintaining their servers. This often proves to be painstaking and expensive. Cloud-native structures, however, delegate all infrastructure responsibilities to the cloud provider, including administrative tasks such as server upkeep, scaling, and capacity prediction.

Elasticity

Cloud-native structures are recognized for their automatic elasticity, where the cloud provider provides additional computational resources on demand, retracting them when not needed. Conversely, conventional models demand a manual scaling process, hindering flexibility and responsiveness to fluctuating needs.

Expenditure

While conventional models charge organizations for the servers and their upkeep regardless of usage, cloud-native structures follow a pay-as-you-use plan. Therefore, the organization only pays for the computation time used. This method is particularly economical for organizations with volatile or unforeseeable workloads.

Speed of Development

Cloud-native structures enable a faster development and deployment cycle by allowing developers to focus exclusively on the code. In conventional models, by contrast, developers often spend considerable time on server-related tasks, which slows development.

Comparative Examination

| Element | Conventional Models | Cloud-Native Structures |
|---|---|---|
| Hardware Supervision | Organization's responsibility | Cloud provider’s role |
| Elasticity | Requires manual input, lacks flexibility | Automatic, extremely flexible |
| Expenditure | Fixed, based on server capacity | Fluctuating, based on usage |
| Speed of Development | Protracted by server-related duties | Swift, as focus is solely on coding |

Deciding on the Suitable Model

Choosing between cloud-native structures and conventional models is heavily influenced by an organization's specific requirements and limitations. If an organization possesses a sizable IT staff and focuses on complete server control, conventional models may be more suitable. However, for those looking to minimize infrastructure expenditures, enhance elasticity, and accelerate development, cloud-native structures could present a very favorable option.

In the following section, we will delve deeper into the integral components of cloud-native structures, offering a thorough comprehension of their operation and the resulting benefits to your organization.

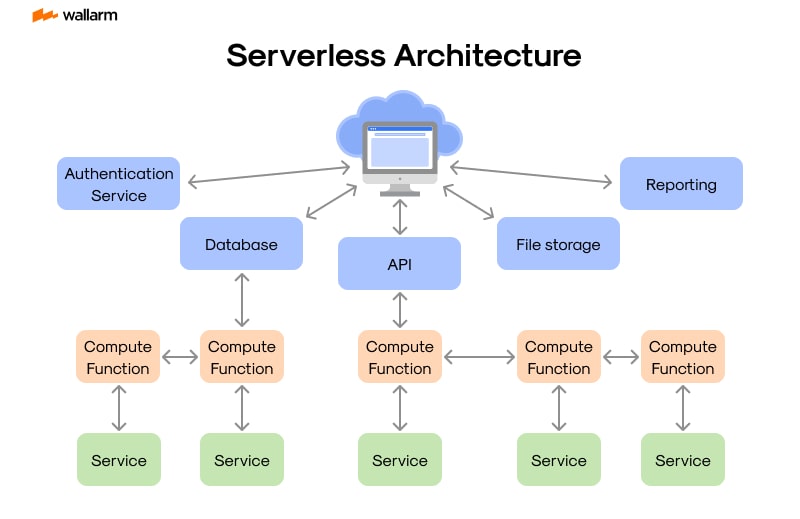

Essential Components of Serverless Architecture

Serverless architecture brings together several essential components. Understanding how each one works makes it easier to take advantage of the platform's benefits.

FaaS: An Essential Component

A key piece of the serverless paradigm is Function as a Service (FaaS), which is instrumental in orchestrating distributed computing. FaaS enables software engineers to run specific pieces of code in response to defined triggers.

The main allure of FaaS is that it hides the underlying infrastructure, allowing developers to focus on writing and deploying code. Routine tasks such as provisioning servers, allocating capacity, and applying software updates fall to the service provider, freeing developers to work on the code that drives business growth.

Action Stimulators

In the serverless world, Action Stimulators, more commonly called event triggers, initiate the execution of specific functions. A trigger could be a user interacting with a web component, new data arriving in storage, or a fresh message on a messaging platform.

When a trigger fires, the FaaS platform invokes the corresponding function. Execution stops once the task completes, which highlights the event-driven nature of the serverless approach.

Interaction Tunnels

Reliable interaction with related services such as databases, storage services, or other APIs is essential for smooth operation in a serverless system. This communication happens through APIs and SDKs supplied by the cloud provider or by other vendors.

Efficient Scaling and Round-the-clock Availability

Beyond its core advantages, serverless architecture is valued for efficient scaling and round-the-clock availability. Together, FaaS and event triggers remove the need for developer intervention, even when many invocations run concurrently.

In a serverless design, the provider aims to keep the service continuously available and to invoke functions as soon as a trigger is detected. This is backed by built-in redundancy and failover mechanisms.

Security Measures

Security in serverless systems covers multiple dimensions. The service provider protects the underlying infrastructure, including servers, networking, and storage hardware, while the security of code and data remains the developer's responsibility.

Securing the application often calls for specific practices, such as managing identities and access privileges, reviewing code and its dependencies, and encrypting sensitive data. Providers commonly offer tools and services to help developers strengthen these measures.

Taking stock, the capability and agility of a well-designed serverless architecture come from the combination of these components: FaaS, Action Stimulators (triggers), Interaction Tunnels (service integrations), efficient scaling with round-the-clock availability, and security measures. A solid understanding of each makes it possible to get the most out of such a system.

Deep Dive: Lambda, The Heart of AWS Serverless

Harnessing the might of serverless systems via Amazon Web Services' Lambda

Amazon's impact on serverless computing is epitomized by AWS Lambda. It relieves IT professionals of server management and gives them a dedicated place to deploy code.

Distinguishing Characteristics of AWS Lambda

Think of AWS Lambda as a digital butler that frees IT teams from routine server upkeep. Lambda runs your code on demand and scales with changes in request volume and load. Its billing model, in which users pay only for processing time and not for idle periods, is one of its defining features.

The Custom Operational Procedure of AWS Lambda

AWS Lambda is strongly event-oriented. It springs into action when it detects events such as user interactions on the web, data modifications, or data transfers. After handling a request, it returns to standby and waits for the next event.

The Adaptive Nature of AWS Lambda

Because of this event-driven design, AWS Lambda can respond to designated events almost immediately. Triggers range from HTTP requests through Amazon API Gateway and object changes in an Amazon S3 bucket to table updates in Amazon DynamoDB and state transitions in AWS Step Functions.

Once invoked, AWS Lambda takes care of server and operating system maintenance, resource allocation, automatic scaling, code execution monitoring, and logging. Code must be written in a language that Lambda supports.
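Events from different sources arrive in different shapes, so a handler often inspects the payload to see what invoked it. The sketch below relies on trimmed-down versions of three event shapes (an S3 notification, a DynamoDB Streams record, and an API Gateway REST proxy request); the `identify_source` helper is hypothetical, and real payloads contain many more fields.

```python
from typing import Any, Dict


def identify_source(event: Dict[str, Any]) -> str:
    """Best-effort guess at which service produced the event."""
    records = event.get("Records")
    if records:
        # S3 and DynamoDB Streams both deliver a "Records" list and tag each
        # record with an "eventSource" such as "aws:s3" or "aws:dynamodb".
        return records[0].get("eventSource", "unknown")
    if "httpMethod" in event:
        return "api-gateway"  # REST API proxy integration
    return "unknown"


def handler(event: Dict[str, Any], context: Any = None) -> str:
    source = identify_source(event)
    if source == "aws:s3":
        return "object changed: " + event["Records"][0]["s3"]["object"]["key"]
    if source == "aws:dynamodb":
        return "table change: " + event["Records"][0]["eventName"]
    if source == "api-gateway":
        return event["httpMethod"] + " " + event["path"]
    return "ignored"
```

In practice it is usually cleaner to give each trigger its own function, but a single routing handler like this can be convenient for small projects.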

The Effect of AWS Lambda on Non-Server Architecture

AWS Lambda has changed how infrastructure is maintained by popularizing serverless patterns. The service lets developers focus on writing code and avoid complex server and infrastructure tasks.

Conventional server-dependent applications require developers to handle load balancing, security, and uptime. AWS Lambda takes on much of this work on the developer's behalf, letting developers shift their focus to coding.

Comparison Between AWS Lambda and Traditional Server Layouts

| AWS Lambda | Conventional Server-Based Models |
|---|---|
| Eliminates server upkeep | Requires server management |
| Scales resources automatically | Requires manual scaling |
| Bills strictly for computation time | Charges for constant availability |
| Runs in response to event triggers | Runs whether or not events occur |
| No state retained between invocations | Can maintain state between requests |

Advantages Offered by AWS Lambda

- Autonomous Server Management: AWS Lambda frees you from ongoing server administration and runs your code when it is needed.

- Automatic Scaling: AWS Lambda scales your application in response to incoming traffic.

- Compute-Based Pricing: You are charged per request and for the time your code actually runs, metered in milliseconds.

- Comprehensive Security Measures: By default, AWS Lambda runs your code inside an AWS-managed Virtual Private Cloud (VPC), and you can configure a function to access resources in your own VPC.

- Inclusive Development Environment: AWS Cloud9 is a browser-based IDE for writing, running, and debugging Lambda functions.

Practical Example: Python Script Delivery via AWS Lambda

Here is a demonstration of a Python script executed through AWS Lambda:

```python
def lambda_implementation(event, context):
    # Log the greeting (print output goes to the function's logs), then return it.
    print("Warm greetings, from AWS Lambda!")
    return "Warm greetings, from AWS Lambda!"
```

When triggered, the script prints and returns the message "Warm greetings, from AWS Lambda!"

The upshot is that AWS Lambda reshapes traditional computing norms through its serverless model. It takes server and infrastructure management tasks off developers' hands, letting them focus primarily on writing code. When used wisely, AWS Lambda is an effective tool for businesses, granting a flexible method to develop robust, adaptable, and cost-efficient software applications.
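Because a handler is an ordinary Python function, it can be exercised locally before deployment. The sketch below repeats the function from the example and calls it with placeholder arguments; the empty event and `None` context are assumptions that work only because this particular function ignores both.

```python
def lambda_implementation(event, context):
    print("Warm greetings, from AWS Lambda!")
    return "Warm greetings, from AWS Lambda!"


if __name__ == "__main__":
    # Lambda supplies `event` (the trigger payload) and `context` (runtime
    # metadata). Neither is used by this function, so placeholders suffice.
    result = lambda_implementation({}, None)
    assert result == "Warm greetings, from AWS Lambda!"
```

A quick local call like this catches syntax and logic errors early, although it does not replace testing against real event payloads.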

Case Study: Success Stories with Serverless Architecture

In the ensuing discourse, we will delve into authentic case studies where companies have reaped significant benefits by incorporating serverless technology into their operations. We aim to showcase these real-life scenarios as pertinent illustrations of the theoretical concepts we've touched upon earlier.

Case Study 1: The Transformation at Coca-Cola

Coca-Cola, an iconic global brand with an expansive range of drinks, led the way in embracing serverless technology. A particular point of interest was their deployment of AWS Lambda to govern their worldwide network of vending machines. Prior to this pivotal change, the burden of maintaining servers to accumulate, process, and manage data from these machines was colossal.

Upon integrating AWS Lambda, Coca-Cola witnessed considerable cost savings since they only had to cover costs for the time used for computation, drastically slashing the expenses related to server maintenance. Additionally, the serverless technology equipped Coca-Cola to scale their operations seamlessly, ensuring they could collect and manage data from numerous vending machines with utmost efficiency.

Case Study 2: Netflix's Serverless Journey

Netflix, a dominant player in the digital streaming arena, adopted serverless technology to provide superior streaming services to its extensive pool of users. Specifically, AWS Lambda bolstered their encoding operations, a critical aspect of delivering high-quality video content.

Netflix's move to serverless technology meant detangling themselves from a cumbersome infrastructure that hindered effective encoding operations and scalability. The shift to AWS Lambda granted Netflix the freedom from this onus, resulting in a notable reduction in operation costs and improved scalability.

Case Study 3: Autodesk's Serverless Advantage

Shifting our focus to Autodesk, a frontrunner in 3D design, engineering, and entertainment software, the company harnessed serverless technology to revolutionize its licensing approach. Through AWS Lambda, Autodesk introduced a pay-per-use licensing model enabling clients to pay for services they actually used.

This strategic pivot to serverless technology played a significant part in curbing Autodesk's operational costs. Beyond improving their bottom line, this new model also elevated the user experience, offering flexible, user-friendly licensing options.

Case Study 4: iRobot's Serverless Transformation

iRobot, the company behind the popular Roomba vacuum cleaners, leveraged serverless technology to manage an enormous volume of data sourced from millions of devices. AWS Lambda and other serverless services were deployed to process and analyze this data, yielding vital insights about users' habits and device performance.

Before adopting serverless technology, iRobot was grappling with a cumbersome data-handling infrastructure. The shift to serverless technology enabled them to reduce operational costs, increase scalability, and focus more on high-quality product development and delivery.

These case studies collectively demonstrate the transformative impact of serverless technology. Its benefits range from cutting operational costs and enhancing scalability to driving innovation in business models. However, the successful incorporation of serverless architecture calls for careful planning and precise execution to harness its full potential.

Transitioning to Serverless: A Step-by-Step Guide

Transitioning to the serverless landscape is a task that calls for careful deliberation, profound technical prowess, and a strategic course of action. Here is a unique guide to ease your step-by-step transition into the serverless realm.

Stage 1: Deep Dive into Your Current Setup

Transitioning to a serverless environment demands a thorough understanding of your organization's present infrastructure. This includes knowing the technology stack in place, the design of your applications, and how data flows between them. A careful review of the existing architecture will help you identify weak spots and areas that need attention.

Stage 2: Identify Applications that Align with the Serverless Framework

Serverless is not a one-size-fits-all model. Applications that are event-driven, stateless, and tolerant of some latency work well within a serverless framework. However, applications that run continuously, maintain persistent state, or require consistently low latency might not be suitable candidates.

Stage 3: Choose a Serverless Provider

The market offers a variety of serverless providers, each having its own set of pros and cons. Notable providers include Amazon with AWS Lambda, Google's Cloud Functions, and Microsoft's Azure Functions. Your selection should take into account factors like cost efficiency, scalability, performance properties, and the overall ecosystem of the provider.

Stage 4: Design Your Serverless Blueprint

Following the provider selection, creating the architecture of your serverless model becomes the next priority. This involves designating the triggers for your functions, establishing their interconnectivity, and strategizing ways to manage the state. Key considerations like error handling, logging practices and monitoring need to be factored in.

Stage 5: Construct Serverless Functions

Once architectural plans are in place, it's time to put your serverless functions together. This includes writing your code, setting event triggers, and generating any crucial resources. Rigorous testing of the functions to ensure they work as planned is fundamental in this stage.

Stage 6: Roll Out Your Serverless Design

Upon successful creation and validation of functions, you can commence the deployment of your serverless design. This involves porting your functions to your chosen serverless provider, setting up essential infrastructure, and tailoring your functions to efficiently operate on the cloud.

Stage 7: Monitor and Refine Your Serverless Design

After deployment, monitor the performance of your serverless architecture continually. Configuring monitoring and logging tools, tracking performance indicators, and tuning your functions for better performance and cost-effectiveness should top your list of priorities.
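As one way to make monitoring easier, each invocation can emit a single structured log record. The sketch below is a minimal illustration, assuming a synchronous handler; the field names and the `withLogging` helper are hypothetical and not part of any provider's API.

```javascript
// Build one structured (JSON) log record per invocation so that a log
// aggregator can filter on fields instead of parsing free text.
// Field names are illustrative, not a provider standard.
function buildLogRecord(functionName, startMs, endMs, error) {
  return {
    function: functionName,
    durationMs: endMs - startMs,
    outcome: error ? "error" : "success",
    errorMessage: error ? error.message : null,
  };
}

// Wrap a synchronous handler so every call emits exactly one record.
// `log` and `now` are injectable to keep the wrapper easy to test.
function withLogging(functionName, handler, log = console.log, now = Date.now) {
  return (event) => {
    const start = now();
    try {
      const result = handler(event);
      log(JSON.stringify(buildLogRecord(functionName, start, now(), null)));
      return result;
    } catch (err) {
      log(JSON.stringify(buildLogRecord(functionName, start, now(), err)));
      throw err; // Re-throw so the platform still sees the failure.
    }
  };
}
```

Records like these can then be queried by duration or outcome in whichever logging service your provider offers.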

Stage 8: Progress and Refine

A switch to serverless is not a one-off process. It's crucial to continually fine-tune and upgrade your serverless architecture in line with changing requirements, emerging technologies, and evolving industry standards.

In conclusion, the shift to serverless is complex and demands thorough planning and execution. Done correctly, it can lead to significant improvements in scalability, cost efficiency, and developer productivity.

Considering Cost: The Financial Aspect of Going Serverless

As companies weigh the option of migrating to a serverless structure, they must closely examine the cost implications. Being aware of associated expenses is vital for a well-rounded decision-making process concerning the shift to serverless.

Understanding the Pay-per-Use Model

One key advantage of serverless that appeals to businesses is its pay-per-use pricing. In contrast to conventional server environments, where you pay for server capacity whether or not it is used, serverless charges are based on the compute time your application actually consumes. When nothing runs, no compute charges accrue.

To illustrate, take a conventional server that costs $100 per month. Whether your application is active for 1 hour or 24 hours a day, the charge is the same. With serverless, running your app for 1 hour a day means you pay only for that hour. This can lead to notable savings, particularly for applications with fluctuating or unpredictable traffic.
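The comparison can be worked through as simple arithmetic. The sketch below uses the $100 flat fee from the example; the hourly pay-per-use rate and the 30-day month are assumptions chosen only for illustration, so substitute your provider's actual prices.

```javascript
// Hypothetical figures for illustration only.
const FIXED_MONTHLY_COST = 100;    // flat server fee from the example (USD)
const ASSUMED_RATE_PER_HOUR = 0.5; // assumed pay-per-use price per compute hour (USD)
const DAYS_PER_MONTH = 30;         // simplifying assumption

// Monthly pay-per-use bill for a given number of active hours per day.
function payPerUseMonthlyCost(hoursPerDay, ratePerHour = ASSUMED_RATE_PER_HOUR) {
  return hoursPerDay * DAYS_PER_MONTH * ratePerHour;
}

// Daily usage at which the pay-per-use bill equals the flat fee.
function breakEvenHoursPerDay(fixedCost = FIXED_MONTHLY_COST, ratePerHour = ASSUMED_RATE_PER_HOUR) {
  return fixedCost / (DAYS_PER_MONTH * ratePerHour);
}

console.log(payPerUseMonthlyCost(1));  // 15
console.log(payPerUseMonthlyCost(24)); // 360
console.log(breakEvenHoursPerDay().toFixed(1)); // "6.7"
```

Under these assumed numbers, one hour a day costs $15 against the $100 flat fee, while round-the-clock use costs more than the fixed server. The break-even point depends entirely on the rates you plug in.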

Evaluating the Expense of Scaling

Scaling to accommodate increased traffic can prove expensive in traditional server setups. It often means buying or renting more servers, which adds hardware costs as well as installation and upkeep costs.

By contrast, a serverless platform scales automatically to match demand. When application traffic surges, the platform allocates additional resources, within the provider's limits, to handle it. Automatic scaling removes the need for manual intervention and lowers the risk of downtime due to overloading.

Assessing Operational Expenditures

A serverless design can cut operational expenditures considerably. Conventional servers mandate frequent maintenance, which includes software upgrades, security enhancements, and hardware repairs, all of which require specialized skills and time, thereby elevating overall running costs.

With a serverless model, the service provider takes care of server maintenance. This not only trims operational costs but also frees your team to focus on refining and extending your application without the burden of server management.

Identifying Hidden Expenditures

Despite potential cost-saving benefits, it’s crucial businesses stay vigilant about potential hidden expenditures tied to serverless architecture:

- Data transfer expenses: While computation time is the major cost driver in serverless design, expenses tied to data transfer could also rise, particularly for applications managing huge data volumes.

- Refactoring cost: If you're shifting from a conventional server setup, you might need to restructure your application to function within a serverless framework. This process could demand ample time and resources.

- Training costs: Your team may need to acquire new skills and familiarize themselves with tools to work with a serverless structure, which could lead to training expenses and productivity dips during the transition.

Cost Comparison

| Cost Element | Traditional Server | Serverless Framework |
|---|---|---|
| Server Expense | Fixed monthly charge | Pay-per-use |
| Scaling Expense | High (manual scaling) | Low (automatic scaling) |
| Operational Expense | High (routine maintenance) | Low (handled by provider) |
| Hidden Expenditures | Low | Potential for high costs (data transfer, refactoring, training) |

In summary, the move to a serverless framework can potentially offer substantial cost advantages. However, it’s crucial to fully assess potential costs. An in-depth understanding of the financial outcomes aids in making a well-rounded decision about the suitability of a serverless framework for your business.

Security Concerns in Serverless Architecture

Assessing Security Responsibilities in Serverless Architectures

Serverless platforms change how applications are secured, and in some respects they reduce risk, since the provider hardens and patches the underlying servers. It would be a mistake, however, to assume that serverless applications carry no security risks of their own.

In serverless models, responsibility for securing the underlying infrastructure (compute hosts, networking, and operating systems) lies with the cloud provider. The user remains responsible for the application layer, which is where most security problems in serverless setups arise.

Common Security Challenges in Serverless Architectures

- Function-level Vulnerabilities: Each function in a serverless application is a potential entry point for attackers. Functions that lack proper security measures can be exploited to gain unauthorized access or to disrupt the service.

- Insufficient Logging and Monitoring: Maintaining a clear audit trail across many short-lived, distributed functions is challenging, which makes it harder to detect and respond to security incidents quickly.

- Reliance on Third-party Components: Serverless applications typically depend on external libraries and services. Poor oversight of these dependencies can introduce new vulnerabilities.

- Misconfiguration: Careless configuration can leave serverless applications exposed. Common causes are overly permissive access controls and unsecured API endpoints.

- Data Exposure: Data handled by serverless applications can be exposed in transit or at rest, typically because of insecure storage, missing encryption, or inadequate access control.

Mitigating Security Threats in Serverless Architectures

Mitigating these risks requires practical safeguards, appropriate tooling, and ongoing attention to security.

- Function-level Safeguards: Secure each function individually. This means strict input validation, careful error handling, and least-privilege permissions for every function.

- Improved Monitoring and Logging: Set up monitoring and logging so that security incidents can be identified and handled promptly. Track function executions, API calls, and anomalous activity.

- Regular Dependency Reviews: Audit third-party components regularly to expose weak links. Tools that automatically flag known vulnerabilities can greatly improve security.

- Secure Configuration Practices: Follow established guidelines when configuring your serverless environments. Enforce strict access control, secure your API endpoints, and consistently encrypt sensitive data.

- Stronger Data Protection: Encrypt data both in transit and at rest, and put a sound key-management process in place to protect the encryption keys.

In short, although serverless removes certain infrastructure-level security chores, it introduces new challenges at the application level. Being aware of these challenges and proactively applying robust security measures improves the stability and safety of a serverless application.
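To make the first safeguard in the list above concrete, here is a minimal sketch of input validation inside a function. The event shape (`{ body: "<json string>" }`), the `itemId` and `quantity` fields, and their limits are all assumptions made up for this example.

```javascript
// Validate an incoming event before any business logic runs.
// The event shape and the field rules are hypothetical.
function validateOrderEvent(event) {
  let payload;
  try {
    payload = JSON.parse(event && event.body ? event.body : "");
  } catch (err) {
    return { ok: false, error: "Body must be valid JSON" };
  }
  if (payload === null || typeof payload !== "object") {
    return { ok: false, error: "Body must be a JSON object" };
  }
  if (typeof payload.itemId !== "string" || !/^[A-Za-z0-9_-]{1,64}$/.test(payload.itemId)) {
    return { ok: false, error: "itemId must be 1-64 letters, digits, '_' or '-'" };
  }
  if (!Number.isInteger(payload.quantity) || payload.quantity < 1 || payload.quantity > 100) {
    return { ok: false, error: "quantity must be an integer from 1 to 100" };
  }
  // Return only the fields that were checked, dropping anything unexpected.
  return { ok: true, value: { itemId: payload.itemId, quantity: payload.quantity } };
}

function handler(event) {
  const result = validateOrderEvent(event);
  if (!result.ok) {
    // Client error with a short message; no stack traces or internals leak out.
    return { statusCode: 400, body: JSON.stringify({ error: result.error }) };
  }
  return { statusCode: 200, body: JSON.stringify({ accepted: result.value }) };
}
```

Rejecting malformed input at the top of the function keeps the rest of the code working only with known-good values, and returning a whitelist of fields avoids passing unexpected data downstream.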

Understanding the Serverless Ecosystem

Serverless technology isn't a fleeting fad; it represents a considerable advancement in how software is built. Around the core compute platforms has grown an ecosystem of services, tools, and frameworks, each tailored to a particular task or platform, that supports building and running serverless applications.

Pillars of the Serverless Ecosystem

AWS Lambda, Google Cloud Functions, and Microsoft's Azure Functions are the dominant serverless platforms. These providers take on server management, leaving developers free to concentrate on code.

In addition, the ecosystem offers tools for each stage of the serverless lifecycle. Key categories include:

- Development Tools: These help developers build, test, and refine serverless applications. Examples include the Serverless Framework, AWS SAM, and the Google Cloud SDK.

- Deployment Tools: These release serverless applications to the target platform. Examples include AWS CodeDeploy, Google Cloud Deployment Manager, and Azure DevOps.

- Monitoring Tools: These collect and interpret performance metrics. Examples include Amazon CloudWatch, Google Cloud Monitoring (formerly Stackdriver), and Azure Monitor.

- Security Tools: These control access to serverless applications and their resources. Examples include AWS IAM, Google Cloud IAM, and Azure Active Directory.

Managed Services That Extend Serverless Capabilities

Managed services extend what serverless applications can do by providing:

- Database Services: Amazon DynamoDB, Google Cloud Firestore, and Azure Cosmos DB offer flexible, scalable storage well suited to serverless applications.

- API Management: These services expose functions as APIs and manage access to them. Leading options are Amazon API Gateway, Google Cloud Endpoints, and Azure API Management.

- Messaging and Eventing: These services carry messages and events between serverless functions and other application components. Leading options are Amazon SNS, Google Cloud Pub/Sub, and Azure Event Grid.

- AI and Machine Learning: These services let serverless applications add AI and machine learning capabilities. Examples are Amazon SageMaker, Google Cloud AI Platform, and Azure Machine Learning.

The Strength of the Serverless Ecosystem

A healthy serverless ecosystem connects the serverless platforms with a collection of resources for building, securing, and scaling serverless applications. Without these tools, teams may fail to realize core serverless benefits such as simpler operations, greater scalability, and faster time to market.

Moreover, an active ecosystem keeps producing new tools and services that address specific needs within a serverless architecture. This dynamic environment fuels innovation and attracts both developers and businesses.

In conclusion, understanding the serverless ecosystem is essential to unlocking the benefits of serverless. Developers and companies that understand how its components work and depend on one another can make well-judged choices about tooling, planning, and operations as the technology evolves.

The Future Outlook: Serverless Architecture

A study by MarketsandMarkets projects strong global growth for serverless technologies. The report estimates growth from $7.6 billion in 2020 to $21.1 billion by 2025, a Compound Annual Growth Rate (CAGR) of about 22.7%. The growth is attributed to demand for more efficient computing models and the rising adoption of automation and microservices.

Adoption is expected to grow across diverse sectors. Healthcare, for example, may use serverless to manage health records and to monitor patients in real time. In retail, serverless could streamline online order processing and improve inventory management. The financial sector also shows promise for real-time data analytics and fraud detection.

Trends Steering the Future of Serverless Architecture

Serverless technology is advancing, and some emerging trends appear set to influence its future:

- Integration with Machine Learning and Artificial Intelligence: Combining serverless with ML and AI workloads could open up new use cases.

- Rise of Edge Computing: The rapid spread of IoT devices demands processing close to where data is generated, a requirement well matched by the on-demand compute that serverless offers.

- Improved Developer Experience: To improve the developer experience, the serverless field is seeing better debugging tools, smoother workflows, and more thorough documentation.

Opportunities and Challenges for Serverless Architecture

Despite the attractive growth forecasts for serverless, it isn't without obstacles:

- Security Implications: While serverless can improve security by reducing the attack surface, it can also introduce new threats, such as exposure of event data and vulnerabilities tied to reliance on third-party services.

- Cold Starts: A cold start occurs when an idle function is invoked and must be initialized first, which adds latency. Providers continue to work on reducing it, but it remains a challenge for applications that need immediate responses.

- Managing Complexity: A serverless application involves many services and frequent function invocations, which can make performance monitoring and troubleshooting difficult.

Despite these barriers, the future looks promising for serverless architecture. As the technology matures, it sets the stage for new applications and solutions. The key to success lies in understanding both its potential and its limitations so that it can be deployed in line with business goals.

Expert Tips: Optimizing Your Serverless Applications

Let's talk about improving the performance and reliability of your serverless applications. Unlock the full potential of this technology with the following expert tips.

1. Design for Statelessness

In a serverless environment, functions are stateless by nature: they do not reliably keep data between invocations. Embrace this by designing functions that hold no state of their own. Rely on external services or databases to persist information, because local or ephemeral storage can be lost once an invocation ends.
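A minimal sketch of this idea follows. The `store` interface (`get` and `set`) and the in-memory stand-in are hypothetical; in a real deployment the store would wrap a database or cache client, its calls would usually be asynchronous, and the increment would use the database's atomic update rather than a read followed by a write.

```javascript
// Anti-pattern: module-level state such as `let visitCount = 0;`.
// It may survive on a warm instance but is lost whenever the platform
// starts a fresh one, and it is never shared between instances.

// Stateless alternative: the handler keeps nothing itself and reads and
// writes all data through an external store passed in from outside.
function createVisitHandler(store) {
  return (event) => {
    const key = `visits:${event.userId}`;
    const next = (store.get(key) || 0) + 1;
    store.set(key, next);
    return { userId: event.userId, visits: next };
  };
}

// In-memory stand-in so the sketch runs locally without a database.
function createMemoryStore() {
  const data = new Map();
  return {
    get: (key) => data.get(key),
    set: (key, value) => data.set(key, value),
  };
}
```

Because the count lives in the store, two separate handler instances that share it see the same value, which is the behavior you want when the platform runs several copies of a function in parallel.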

2. Lessen the Impact of Cold Starts

Cold starts happen when a function has been idle for a while, leading to increased latency and a poorer user experience. Reduce their impact by keeping functions warm with scheduled triggers, or use a feature such as AWS Lambda's provisioned concurrency.
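If you keep a function warm with a scheduled trigger, the handler should recognize those pings and return early so they cost as little as possible. The sketch below assumes the scheduler sends an event containing `warmup: true`; that marker is a convention invented for this example, not a platform standard.

```javascript
// Assumed convention: the scheduled trigger sends { warmup: true }.
function isWarmupEvent(event) {
  return Boolean(event && event.warmup === true);
}

function handler(event) {
  if (isWarmupEvent(event)) {
    // Return immediately: the only goal is to keep the instance alive.
    return { statusCode: 204, body: "" };
  }
  // Normal request path (placeholder for the real work).
  return { statusCode: 200, body: JSON.stringify({ message: "real work done" }) };
}
```

A warm-up ping keeps only the instance that receives it initialized, so this approach helps most for functions with low, steady concurrency.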

3. Control Function Sizes

The size of your function directly affects its performance. Large functions take longer to start and need more memory, which raises costs. Keep your functions lean and follow the 'one task per function' rule, also known as the single responsibility principle.

4. Leverage Connection Pooling

Opening a new database connection on every invocation is slow and resource-intensive. Reuse connections instead, through connection pooling or by caching a connection across invocations, which saves both time and cost. On AWS, RDS Proxy provides connection pooling for Lambda functions that use RDS databases.
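A common way to reuse a connection inside a function is to create it once at module scope and hand the same object to every invocation that lands on a warm instance. In the sketch below, `createConnection` is a stand-in for a real database client's connect call, and the counter exists only to show how many connections were opened.

```javascript
// Stand-in for a real database client; counts how often we "connect".
let connectionsOpened = 0;
function createConnection() {
  connectionsOpened += 1;
  return { id: connectionsOpened, query: (sql) => `result of: ${sql}` };
}

// Cached at module scope, so it survives across invocations on a warm instance.
let cachedConnection = null;
function getConnection() {
  if (cachedConnection === null) {
    cachedConnection = createConnection();
  }
  return cachedConnection;
}

function handler(event) {
  const connection = getConnection(); // Reused after the first call.
  return { connectionId: connection.id, rows: connection.query(event.sql) };
}
```

The cached connection is an optimization rather than application state: the handler still works when the cache is empty, which is exactly what happens on a cold start.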

5. Stay Vigilant: Monitor and Debug your Functions

Systematic monitoring and debugging are key to improving serverless application performance. Tools like Amazon CloudWatch or Google Cloud Monitoring (formerly Stackdriver) give insight into function executions, errors, and performance metrics. AWS X-Ray, a distributed tracing tool, follows requests as they travel through your system.

6. Exercise Wise Memory Allocation

The memory assigned to your function significantly affects its efficiency. Skimping on memory can slow the function down, while allocating too much can lead to overspending. Allocate memory in line with what your function actually needs.
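The trade-off can be estimated with a little arithmetic, since compute is typically billed as memory multiplied by duration (GB-seconds). On platforms where CPU scales with the memory setting, as it does on AWS Lambda, a larger setting can shorten execution enough to lower the bill. The price and the measurements below are hypothetical; measure your own function and use your provider's price list.

```javascript
// Assumed price per GB-second, for illustration only.
const ASSUMED_PRICE_PER_GB_SECOND = 0.00002;

// Compute cost of one million invocations at a given memory size and duration.
function costPerMillionInvocations(memoryMb, avgDurationMs, pricePerGbSecond = ASSUMED_PRICE_PER_GB_SECOND) {
  const gbSeconds = (memoryMb / 1024) * (avgDurationMs / 1000);
  return gbSeconds * pricePerGbSecond * 1000000;
}

// Hypothetical measurements of the same function at three memory settings.
const measurements = [
  { memoryMb: 128, avgDurationMs: 1200 },
  { memoryMb: 512, avgDurationMs: 250 },
  { memoryMb: 1024, avgDurationMs: 200 },
];

// Pick the setting with the lowest compute cost.
function cheapestSetting(samples) {
  return samples.reduce((best, sample) =>
    costPerMillionInvocations(sample.memoryMb, sample.avgDurationMs) <
    costPerMillionInvocations(best.memoryMb, best.avgDurationMs)
      ? sample
      : best
  );
}

console.log(cheapestSetting(measurements).memoryMb); // 512
```

With these made-up numbers the middle setting wins: the smallest one runs too long and the largest one buys little extra speed. Per-request fees are left out because they do not depend on the memory setting.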

7. Use a CDN for Static Content

For static content in your serverless architecture, deploy a Content Delivery Network (CDN). CDNs lower latency and improve the user experience by serving static assets from locations close to the user.

8. Establish a Strong Error Management Approach

A strong error management strategy is vital for the continuity of your serverless applications. Include retry policies for transient errors and use dead-letter queues for persistent ones so that no failed requests are lost. Failed requests can then be retried later or examined to diagnose the problem.
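The sketch below shows one way to combine the two ideas: retry with exponential backoff, then park the message for later inspection. Many platforms offer retries and dead-letter queues as configuration, so treat this as an illustration of the logic only. The function names, the default limits, and the use of a plain array as the dead-letter queue are all assumptions.

```javascript
// Delay before retrying after failed attempt `attempt` (1-based):
// base, 2x base, 4x base, ... capped at capMs.
function backoffDelayMs(attempt, baseMs = 100, capMs = 5000) {
  return Math.min(capMs, baseMs * 2 ** (attempt - 1));
}

const defaultSleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Run `task(message)` up to `maxAttempts` times. `isTransient(err)` decides
// whether another attempt makes sense. Messages that still fail are pushed
// to `deadLetters`, an array standing in for a real dead-letter queue.
async function processWithRetry(message, task, options = {}) {
  const {
    maxAttempts = 3,
    isTransient = () => true,
    deadLetters = [],
    sleep = defaultSleep,
  } = options;

  for (let attempt = 1; attempt <= maxAttempts; attempt += 1) {
    try {
      return { ok: true, value: await task(message), attempts: attempt };
    } catch (err) {
      if (!isTransient(err) || attempt === maxAttempts) {
        // Persistent failure: keep the message so nothing is lost.
        deadLetters.push({ message, error: err.message, attempts: attempt });
        return { ok: false, attempts: attempt };
      }
      await sleep(backoffDelayMs(attempt));
    }
  }
}
```

Growing the delay between attempts gives a struggling downstream service time to recover, and the cap keeps the total wait bounded.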

9. Keep Your Runtime Version Up to Date

Keep your function's runtime version current. Recent versions usually bring performance and security improvements. Most platforms let you select the runtime version in the function's configuration.

10. Run Function Tests Regularly

Regular tests measure the robustness and efficiency of your serverless applications. Use unit tests, integration tests, and load tests to validate your functions, and use tools like the AWS SAM CLI's `sam local invoke` or the Serverless Framework's `invoke local` command to test functions locally before deployment.
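Because a handler is just a function, a unit test can call it directly with a hand-built event, with no cloud account involved. The sketch below uses Node's built-in `assert` module; the `hello` handler and its `name` field are hypothetical and inlined so the example is self-contained, whereas a real test would import the handler from its own module.

```javascript
const { strictEqual, deepStrictEqual } = require("assert");

// Handler under test (hypothetical, inlined for the sketch).
const hello = async (event) => ({
  statusCode: 200,
  body: JSON.stringify({ message: `Hello, ${(event && event.name) || "World"}!` }),
});

// Unit tests: invoke the handler with fake events and check the responses.
async function runHandlerTests() {
  const defaultResponse = await hello({});
  strictEqual(defaultResponse.statusCode, 200);
  deepStrictEqual(JSON.parse(defaultResponse.body), { message: "Hello, World!" });

  const namedResponse = await hello({ name: "Ada" });
  deepStrictEqual(JSON.parse(namedResponse.body), { message: "Hello, Ada!" });

  return "all handler tests passed";
}

runHandlerTests()
  .then((summary) => console.log(summary))
  .catch((err) => {
    console.error(err);
    process.exitCode = 1; // Signal failure to CI without killing pending work.
  });
```

Tests like these run in milliseconds, so they fit well in a pre-deployment pipeline alongside slower integration and load tests.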

These tips focus on improving your serverless application's performance, reliability, and cost-efficiency. Serverless can offer great value, given the right approach and expertise.

FAQ: Debunking Common Serverless Myths

Clarification 1: Serverless Does Not Translate to No Servers

A widespread misconception about serverless technology is that it runs without any servers. The name is misleading: serverless applications still run on servers. What distinguishes the model is that provisioning, scaling, and maintaining those servers is the cloud provider's responsibility, which frees application developers from such tasks.

Clarification 2: Serverless Technology Is Not Restricted to Small Tasks

It's a common misbelief that serverless technology suits only small tasks or minor projects. While serverless setups are indeed well suited to small, short-lived operations, they can also handle larger and more complex applications. Big corporations like Netflix and Coca-Cola have used serverless technology for significant projects, demonstrating its ability to handle varying degrees of complexity and scale.

Clarification 3: Serverless Isn’t Always Cheap

A common assumption is that serverless is always cheaper than traditional server-based setups. There is potential for savings because pricing is usage-based, but serverless is not always the most cost-effective option. Factors such as invocation frequency, execution duration, and the resources consumed all affect the total bill. A detailed cost analysis is therefore essential before migrating to a serverless setup.

Clarification 4: Serverless Is Not Inherently Less Secure

There is a common fear that serverless systems are more vulnerable to cyber attacks than traditional server systems. This fear is largely unjustified. In serverless models, the responsibility for hardening servers and operating systems rests with the cloud provider, which lets application developers focus on securing the application itself. Cloud providers also typically offer security measures such as data encryption and permission control that add a further layer of protection. Application-level security, however, remains the developer's responsibility.

Clarification 5: Serverless Technology Doesn't Mean Unlimited Scalability

Some argue that serverless infrastructures offer endless scalability, but this is a misconception. There are limits on how far a serverless application can scale, and these limits are typically set by the chosen cloud provider. AWS Lambda, for example, imposes limits on concurrent executions. Understanding the scalability boundaries set by your provider is therefore crucial when planning your application.
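A rough way to see whether a workload fits within a concurrency limit is to multiply the request rate by the average execution time. The sketch below applies that estimate; the limit is passed in as a parameter because actual quotas vary by provider, account, and region, and the function names are made up for this example.

```javascript
// Estimated concurrent executions = requests per second x average duration (s).
function estimateConcurrency(requestsPerSecond, avgDurationMs) {
  return Math.ceil((requestsPerSecond * avgDurationMs) / 1000);
}

// Compare the estimate with a concurrency limit supplied by the caller.
function checkAgainstLimit(requestsPerSecond, avgDurationMs, concurrencyLimit) {
  const needed = estimateConcurrency(requestsPerSecond, avgDurationMs);
  return {
    needed,
    withinLimit: needed <= concurrencyLimit,
    headroom: concurrencyLimit - needed,
  };
}

console.log(estimateConcurrency(200, 500)); // 100
```

For instance, 5,000 requests per second at 400 ms each needs roughly 2,000 concurrent executions, which would exceed a hypothetical limit of 1,000 and call for a quota increase, a shorter execution time, or request throttling.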

In conclusion, serverless technology brings numerous advantages to the table. Yet it's essential to separate fact from myth before opting for this model. With the above misunderstandings cleared up, you are better positioned to appreciate and use the real potential of serverless technology.

Hands-on with Serverless: Building Your First Function

In this chapter, we will delve into the practical aspects of serverless architecture by guiding you through the process of building your first serverless function. This hands-on approach will help you understand the concepts we've discussed in the previous chapters and give you a taste of what it's like to work with serverless architecture.

Understanding the Basics

Before we start, it's important to understand that a serverless function (called a Lambda function on AWS) is a piece of code that performs a specific task. The function is event-driven, meaning it runs only when triggered by an event such as a user request or a system update.

Setting Up Your Environment

To build your first serverless function, you'll need to set up your development environment. This involves installing the necessary software and tools, including:

- A text editor or Integrated Development Environment (IDE) for writing code. Examples include Visual Studio Code, Atom, or Sublime Text.

- The AWS Command Line Interface (CLI), which allows you to interact with AWS services from your command line.

- The Serverless Framework, an open-source tool that simplifies the process of building and deploying serverless applications.

Creating Your First Serverless Function

Once your environment is set up, you can start building your first serverless function. Here's a step-by-step guide:

1. Initialize a new Serverless service: Use the Serverless Framework to create a new service. This will create a new directory with a serverless.yml file, which is the configuration file for your service.

serverless create --template aws-nodejs --path my-service

2. Define your function: Open the serverless.yml file and define your function. This involves specifying the function name and the handler (the file and method that will be executed when your function is called). The runtime (the language your function is written in) is set in the provider section of the same file. The functions section looks like this:

    functions:
      hello:
        handler: handler.hello

3. Write your function: Open handler.js (the template normally generates one; create it if it is missing) and write your function. In this example, we'll create a simple function that returns a "Hello, World!" message.

    // handler.js: returns an HTTP-style response with a JSON body
    module.exports.hello = async (event) => {
      return {
        statusCode: 200,
        body: JSON.stringify({ message: 'Hello, World!' }, null, 2),
      };
    };

4. Deploy your function: Use the Serverless Framework to deploy your function to AWS. This will create a new Lambda function in your AWS account.

serverless deploy

5. Test your function: Once your function is deployed, you can test it by invoking it using the Serverless Framework.

serverless invoke -f hello

If everything is set up correctly, you should see a JSON response in your command line with a statusCode of 200 and a body containing the "Hello, World!" message.

Wrapping Up

Building your first serverless function is an exciting step towards embracing the serverless architecture. Remember, the key to mastering serverless is practice and exploration. Don't be afraid to experiment with different functions and services, and always keep learning. In the next chapter, we'll explore different serverless frameworks to help you choose the best one for your needs.

Exploring Serverless Frameworks

Advances in cloud computing have significantly reshaped how software applications are developed, deployed, and maintained. Serverless computing is at the forefront of this shift, and the rapid adoption of tools built specifically for serverless development is helping to drive it.

Distinguishing Features of Serverless Tools

Serverless tools provide a higher level of abstraction over the raw cloud services, which smooths the development workflow. They let developers describe their applications and infrastructure in configuration files that can be managed and version-controlled alongside application code. This enables systematic, repeatable deployments across environments and multiple cloud platforms.

Additionally, these tools offer a unified way to manage the various resources an application needs, including storage, databases, and APIs. This lets developers streamline application delivery across serverless platforms such as AWS, Google Cloud, and Azure.

Leading Serverless Tools

Several serverless tools have established themselves, each with distinct strengths. These include:

- The Serverless Framework: Known for its support for multiple cloud providers and its approachable command-line workflow. A rich plugin ecosystem and an active community set it apart.

- AWS Serverless Application Model (SAM): An AWS product, SAM simplifies the definition of AWS resources and integrates conveniently with other AWS services. Its main downside is that it works only with AWS.

- Google Cloud Functions Framework: An open-source framework from Google for writing Cloud Functions and running them locally before deployment. It is aimed at Google Cloud's function runtimes, so it is of limited use if you deploy to another provider's functions service.

- Azure Functions Core Tools: Azure's tooling supports local development and lets you create, manage, and deploy Azure Functions through a command-line interface.

- Zappa: A tool for Python applications that speeds up deployment to AWS Lambda and API Gateway.

- Claudia.js: Built for Node.js applications, this tool simplifies creating, deploying, and managing AWS Lambda functions.

How to Choose the Right Serverless Tool

Choosing the appropriate serverless tool depends on factors such as cloud provider preference, programming language, and project-specific needs. Major considerations include:

- Cloud provider: If you have a preferred cloud provider, it's pragmatic to choose a tool that is tailored to that provider.

- Programming language: Certain tools are designed for specific programming languages. For example, Zappa targets Python applications, and Claudia.js is a good fit for Node.js applications.

- Community support and plugins: Strong community backing and a wide range of plugins provide extended functionality and quicker problem solving.

- Ease of use: For those starting out with serverless, choose a tool that is straightforward to use and navigate.

In a nutshell, serverless tools are gaining momentum because they simplify building, deploying, and monitoring serverless applications. Choosing the right tool lets you focus on your code rather than on infrastructure management, so pick one that fits your exact needs.

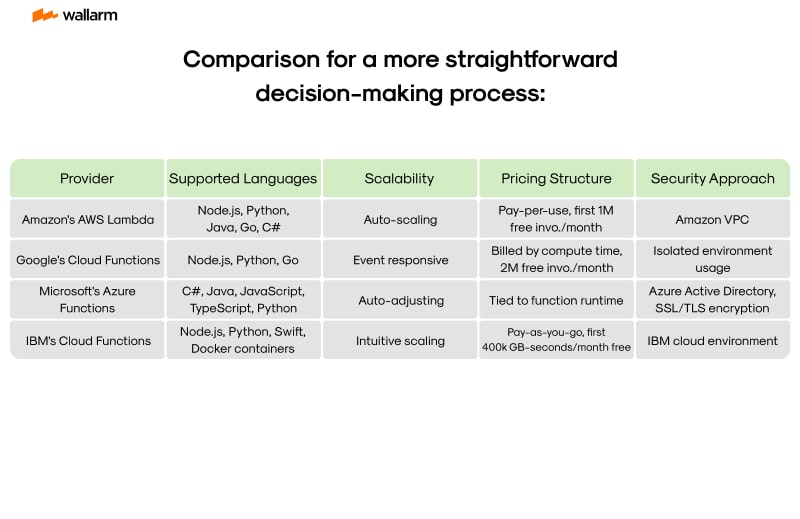

Navigating Serverless Vendors: A Comparative Analysis

Narrowing down the selection from a vast array of serverless vendors is a critical task. We will examine some key contenders in this sector: Amazon's AWS Lambda, Google's Cloud Functions, Microsoft's Azure Functions, and IBM's Cloud Functions.

Amazon's AWS Lambda

A prominent platform in serverless computing, AWS Lambda supports languages such as Node.js, Python, Java, Go, and C#. Its integration with other AWS services makes it a reliable choice for businesses already using AWS.

- Scalability: AWS Lambda automatically scales your application with incoming traffic, without manual intervention.

- Pricing: AWS Lambda uses a pay-per-use model, with the first million requests per month being free.

- Security: Functions run in isolated execution environments and can be connected to an Amazon Virtual Private Cloud (VPC).

Google's Cloud Functions

Google Cloud Functions lets you create small, single-purpose functions. It provides robust support for Node.js, Python, and Go.

- Scalability: Google Cloud Functions scales automatically in response to the events that trigger it.

- Pricing: Billing is based on compute time, with 2 million invocations per month provided free of charge.

- Security: Google Cloud Functions runs each function in an isolated environment.

Microsoft's Azure Functions

Azure Functions, Microsoft's serverless offering, supports a wide array of languages including C#, Java, JavaScript, TypeScript, and Python.

- Scalability: Azure Functions features inherent scalability, adjusting to match the demand.

- Pricing: Azure Functions' pricing model is tied directly to the function's execution time.

- Security: Azure Functions is built with multiple security layers including Azure Active Directory and SSL/TLS encryption.

IBM's Cloud Functions

Drawing from Apache OpenWhisk's open-source serverless framework, IBM has introduced Cloud Functions. This solution is compatible with Node.js, Python, Swift, and Docker containers.

- Scalability: IBM Cloud Functions scales automatically to meet demand.

- Pricing: The pay-as-you-go format of IBM Cloud Functions grants a complimentary provision for the first 400,000 GB-seconds of use each month.

- Security: IBM Cloud Functions ensures a secure execution environment within its cloud for all actions.

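Here's a concise comparison, summarizing the points above, for a more straightforward decision-making process:

| Provider | Languages | Scalability | Pricing | Security |
|---|---|---|---|---|
| AWS Lambda | Node.js, Python, Java, Go, C# | Adapts automatically to incoming traffic | Pay-per-use; first million requests per month free | Deployment within an Amazon VPC |
| Google Cloud Functions | Node.js, Python, Go | Built-in, driven by event triggers | Billed on computation time; 2 million invocations per month free | Isolated execution environment |
| Azure Functions | C#, Java, JavaScript, TypeScript, Python | Built-in, adjusts to demand | Tied to function runtime | Azure Active Directory, SSL/TLS encryption |
| IBM Cloud Functions | Node.js, Python, Swift, Docker containers | Adapts automatically to demand | Pay-as-you-go; first 400,000 GB-seconds per month free | Secure execution environment within IBM Cloud |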

In conclusion, selecting a serverless technology provider depends on your specific set of requirements: preference for programming languages, scalability needs, budgetary limits, and security standards. It is vital to carefully assess each provider before finalizing your choice.
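As a first filter on language preference, the shortlisting step can be sketched in a few lines of Python. This is a minimal illustration, not a selection tool: the `PROVIDERS` table only repeats the languages named in the vendor sections above (which may not be exhaustive or current), and the `shortlist` helper is a hypothetical name introduced here.

```python
# Language support as listed in the vendor sections above.
# This is illustrative data, not an exhaustive or up-to-date list.
PROVIDERS = {
    "AWS Lambda": {"Node.js", "Python", "Java", "Go", "C#"},
    "Google Cloud Functions": {"Node.js", "Python", "Go"},
    "Azure Functions": {"C#", "Java", "JavaScript", "TypeScript", "Python"},
    "IBM Cloud Functions": {"Node.js", "Python", "Swift", "Docker containers"},
}


def shortlist(required_languages):
    """Return the providers whose listed languages cover every required language."""
    required = set(required_languages)
    # A provider qualifies only if the required set is a subset of its languages.
    return sorted(name for name, langs in PROVIDERS.items() if required <= langs)


if __name__ == "__main__":
    print(shortlist(["Go", "Python"]))
```

The same pattern extends to the other criteria (pricing, security standards) by adding fields to each provider entry and further conditions to the filter.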

Closing Thoughts: Is Serverless Right for Your Business?

Our detailed study is drawing to a close, and it's time to consider a pressing question: would adopting a serverless architecture benefit your business? This question cannot be answered with a mere 'yes' or 'no', as it depends on factors such as your business's specific operations, its unique needs, and its willingness to embrace new working models.

Business Parameters Assessment

Establishing whether your enterprise will benefit from a serverless architecture starts with an evaluation of your unique needs. Businesses involved in designing and managing applications that call for rapid scalability in response to the needs of users have the potential to gain significantly by switching to a serverless system.

On the flip side, if you operate applications that experience steady, predictable demand or require ongoing processes, traditional server-based systems might be a more suitable solution. Serverless architecture thrives in scenarios where user traffic is volatile or experiences sudden spikes. This is due to its capacity for automatic scaling and cost-effectiveness that stems from its pay-per-use pricing model.
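The trade-off between pay-per-use and an always-on server can be made concrete with some rough arithmetic. The sketch below is only an illustration under stated assumptions: every rate and the fixed server cost are hypothetical placeholders rather than any provider's published prices, and the free allowances simply echo the sizes mentioned in the vendor sections above.

```python
# All rates below are hypothetical placeholders, not published prices.
PRICE_PER_MILLION_REQUESTS = 0.20  # assumed charge per million invocations
PRICE_PER_GB_SECOND = 0.00002      # assumed charge per GB-second of compute
FREE_REQUESTS = 1_000_000          # assumed free requests per month
FREE_GB_SECONDS = 400_000          # assumed free GB-seconds per month
FIXED_SERVER_COST = 70.0           # assumed monthly cost of an always-on server


def serverless_monthly_cost(requests, avg_seconds, memory_gb):
    """Estimate a pay-per-use bill: request charge plus compute charge, after free allowances."""
    gb_seconds = requests * avg_seconds * memory_gb
    billable_requests = max(0, requests - FREE_REQUESTS)
    billable_gb_seconds = max(0.0, gb_seconds - FREE_GB_SECONDS)
    return (billable_requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
            + billable_gb_seconds * PRICE_PER_GB_SECOND)


if __name__ == "__main__":
    # Light, bursty workload: 2 million short requests per month.
    bursty = serverless_monthly_cost(2_000_000, avg_seconds=0.2, memory_gb=0.5)
    # Heavy, steady workload: 100 million longer requests per month.
    steady = serverless_monthly_cost(100_000_000, avg_seconds=0.5, memory_gb=1.0)
    for label, cost in (("bursty", bursty), ("steady", steady)):
        cheaper = "serverless" if cost < FIXED_SERVER_COST else "fixed server"
        print(f"{label}: estimated {cost:.2f} per month, cheaper option: {cheaper}")
```

With these assumed numbers, the bursty workload stays almost entirely inside the free allowances, while the steady high-volume workload costs far more than the fixed server. Real results depend on actual prices and on how many servers the steady load would truly need.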

Technological Mastery Evaluation

Transitioning to a serverless platform hinges on your team's technical competence. It requires knowledge in areas such as Function as a Service (FaaS), event-based programming, and stateless computation.

If your team already possesses the above-mentioned skills, transitioning to serverless might be less challenging. Otherwise, upskilling or recruitment of skilled staff might be necessary.
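To give a feel for what event-based, stateless code looks like, here is a minimal sketch in Python. The `handler(event, context)` signature follows the convention AWS Lambda uses for Python functions; the event shape (a dictionary with a `name` key) and the response format are assumptions chosen for illustration.

```python
import json


def handler(event, context=None):
    """Handle one event and return a response without keeping state between calls."""
    # Hypothetical event shape: a dict that may carry a "name" key.
    name = event.get("name", "world")
    # Anything that must outlive this call belongs in an external store
    # (a database or object storage), not in variables held by the function.
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    print(handler({"name": "Ada"}))
```

Because the function depends only on its input event, the platform is free to run any number of copies in parallel or discard them between requests, which is what makes automatic scaling possible.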

Objective Appraisal of Pros and Cons

Our previous discussions reveal that serverless architecture comes with benefits like scalability, cost-effectiveness and decreased operational overhead. However, it also poses challenges like cold starts, dependency on single providers, and obstacles in tracking down and fixing issues.

Conducting a balanced analysis of the pros and cons is essential. For some businesses, the positive aspects of serverless platforms could outweigh the negatives. For others, however, the transition may bring insurmountable difficulties.
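Of the challenges listed above, cold starts are the easiest to illustrate in code. The first invocation on a fresh instance pays for initialization, and a common mitigation is to perform expensive setup once and reuse it on later (warm) invocations of the same instance. The sketch below simulates that pattern locally; the `_create_client` helper, its delay, and the `handle_request` name are hypothetical stand-ins, not part of any provider's API.

```python
import time

# Cached at module level so warm invocations on the same instance can reuse it.
# This is a performance optimization, not durable state: it vanishes with the instance.
_client = None


def _create_client():
    """Stand-in for expensive setup such as loading config or opening connections."""
    time.sleep(0.05)  # hypothetical initialization delay
    return {"ready": True}


def handle_request(event, context=None):
    """Initialize on the first (cold) call, then reuse the client on warm calls."""
    global _client
    cold = _client is None
    if cold:
        _client = _create_client()
    return {"cold_start": cold}


if __name__ == "__main__":
    for attempt in range(3):
        started = time.perf_counter()
        result = handle_request({})
        elapsed_ms = (time.perf_counter() - started) * 1000
        print(f"call {attempt}: cold_start={result['cold_start']}, {elapsed_ms:.1f} ms")
```

Only the first call pays the setup delay. On a real platform, the same cost reappears whenever a new instance is started, for example after a period of inactivity or during a sudden scale-out.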

The Financial Impact

Considering the financial implications of moving to serverless systems is crucial. While serverless can provide cost savings when compared to traditional server-based systems, especially for apps with fluctuating traffic, the economic benefits are not a guarantee.

There could be additional costs associated with adapting your software for serverless and with training your staff. Furthermore, even though the serverless pay-per-use model could save money, unanticipated spikes in usage can lead to cost overruns.

Projecting the Path Forward

Taking into account the projected evolution of serverless systems is of paramount importance. As indicated previously, the serverless field is swiftly advancing, offering an array of new tools, frameworks, and services. Embracing serverless could put your business at the forefront of this burgeoning field.

Nonetheless, inherent risks accompany any evolving technology. Serverless platforms may change in unpredictable ways as they mature, or an even more advantageous technology may emerge.

In conclusion, the choice to adopt a serverless architecture depends on a combination of your unique needs, technical competency, and budget. Although this decision is far from trivial, the shift could be transformative, significantly improving your enterprise's operational efficiency.

Additional Reading: Resources for Further Study on Serverless Architecture

With serverless technology innovation rapidly evolving, understanding its intricacies becomes critically important. Our selection of insightful educational content, coupled with real-world application examples, is designed to bring you up to speed in this field.

Must-Read Serverless Publications

- "A Journey Through AWS Serverless Landscape" by Peter Sbarski and Sam Kroonenburg: This encompassing guide enables you to develop a broad understanding of AWS's serverless solutions, paying particular attention to vital security aspects and diverse framework exploration.

- "Deciphering Serverless Architectures: Grasping Core Concepts and Methodologies" by Brian Zambrano: This comprehensive narrative spotlights the complexities of serverless designs, equipping you with practical and conceptual skills needed in effective serverless solutions planning.

- "Dominating Google Cloud Run – Embracing Serverless Superiority" by Marko Lehtimaki and Janne Sinivirta: This indispensable text unpicks the essence of Google Cloud's serverless avenues, acting as a wide-spectrum guidebook for Google Cloud Run.

Handy Tutorials and Tactical Guides:

- Certified AWS Programs and Diplomas: AWS-designed educational tools and credentials tailored for different skill levels.

- Mastering The Serverless Framework Guide: A guide to becoming proficient in the Serverless Framework, with in-depth walkthroughs, introductions, and API references.

- Google Cloud's Knowledge Hub: An extensive collection of serverless learning content from Google, offering a mix of free and premium resources.

Informative Blogs and Interactive Web Forums:

- Elevated Cloud Learning: An esteemed digital space in the cloud learning community, featuring a cornucopia of cloud-centric resources, spotlighting serverless technologies in continuous editorials.

- Winning at Serverless: An invaluable online community offering meticulous guides to optimize serverless setups, supported by real-world scenarios and professional recommendations.

- CloudSphere Chronicles: A comprehensive repository of topics related to cloud technologies, featuring predominantly serverless insights. Besides articles, CloudSphere Chronicles hosts podcast episodes and showcases various digital innovations.

Educational Initiatives and Collaborative Digital Platforms:

- Serverless Roundtable: A conversation-generating movement initiated by Jeremy Daly, designed to stimulate insightful chats with sector experts on various serverless matters.

- Serverless Headlines: A podcast series highlighting emerging patterns and advancements in serverless technologies.

- Digital Tech Talks by AWS: Virtual gatherings on various subjects facilitated by experienced AWS proponents, predominantly drawing attention to serverless developments.

Academic Papers and Real-world Deployment Narratives:

- "The Economic Impact and Design Considerations of Serverless Systems": A scholarly document providing an integrated exploration of the cost implications and design issues associated with serverless deployments.

- Success Stories from AWS: Factual narratives showcasing how serverless technology can be effectively deployed across different business sectors, as shared by AWS.

The commitment to ongoing learning is key to mastering serverless technology. Leverage these materials to chart your course in the serverless universe and tap into its inherent potential.
