Container Orchestration?

To stay competitive, organizations need to deploy software frequently and quickly. A microservices architecture helps firms break monolithic software into smaller pieces, reducing the risk of breaking critical components during rapid deployment. As the number of interconnected microservices grows, a container orchestration (CO) solution is needed to supervise them. The orchestrator ensures that all of them are running and can communicate.

Read on for an in-depth guide.

What Is a Container?

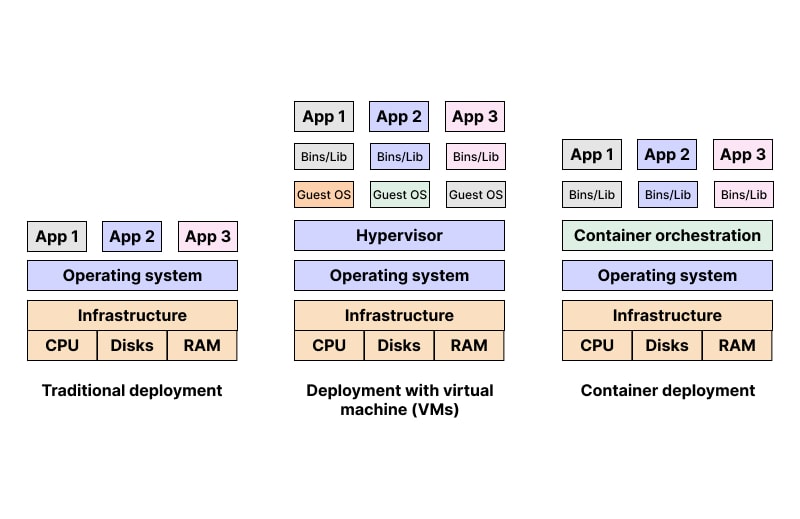

A container is a form of operating-system virtualization. A single container can host anything from a single microservice or software process to an entire application. It contains the program's executables, binary code, libraries, and configuration files. Unlike server or machine virtualization, however, containers do not include operating system images. This makes them more lightweight and portable. In larger application deployments, multiple containers can be deployed as a cluster, and a container orchestrator such as Kubernetes can manage that cluster.

An Overview of Container Orchestration

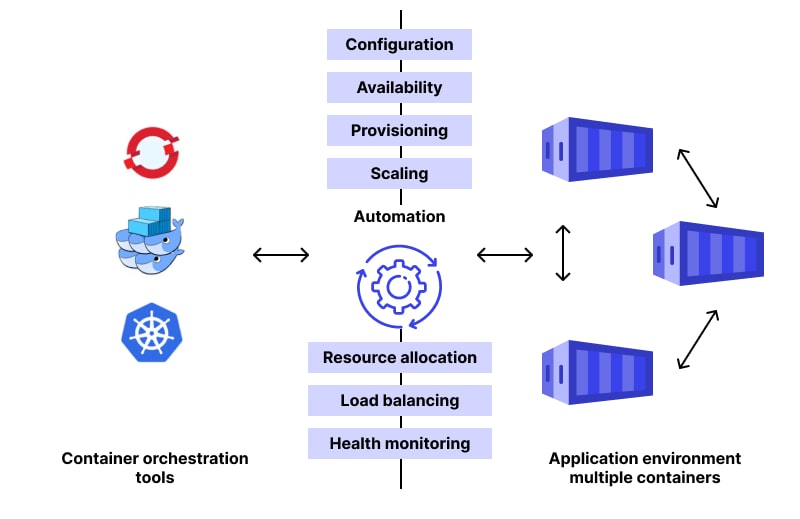

Container orchestration automates tasks such as scheduling, deployment, networking, scaling, health monitoring, and management of containers. Containers are complete applications that include all the code, libraries, dependencies, and system tools needed to run on a variety of machines and hardware configurations. Although containers have existed in some form since the late 1970s, the technologies used to build, manage, and secure them have evolved significantly.

We have moved on from the days when we only built single-tier monolithic applications that ran on a single platform. Today's developers can pick and choose among microservices, containers, and AI services, all of which can be deployed in a managed cloud or in a hybrid arrangement that uses on-premises resources.

Development teams use container orchestration to control and automate many tasks, including the following:

- Provisioning and deploying containers.

- Keeping containers redundant and available.

- Scaling an application's capacity by adding or removing containers on a host.

- Moving containers from one host to another if a host fails or runs short of resources.

- Allocating resources among containers.

- Exposing containerized services to the external world.

- Load balancing and service discovery between containers.

- Monitoring the health of containers and hosts.

- Configuring applications in relation to the containers that run them.
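
Two of the tasks in the list above, load balancing and health monitoring, can be illustrated together. The following is a minimal sketch, not how any particular orchestrator implements it: the `Container` and `RoundRobinBalancer` names are hypothetical, and a real system would update the `healthy` flag from periodic health checks rather than set it by hand.

```python
from dataclasses import dataclass


@dataclass
class Container:
    name: str
    healthy: bool = True  # assumed to be updated by a health check elsewhere


class RoundRobinBalancer:
    """Hands out healthy containers in rotation (illustrative only)."""

    def __init__(self, containers):
        self.containers = list(containers)
        self._next = 0

    def pick(self):
        # Try each container at most once per call, skipping unhealthy ones.
        for _ in range(len(self.containers)):
            candidate = self.containers[self._next]
            self._next = (self._next + 1) % len(self.containers)
            if candidate.healthy:
                return candidate
        raise RuntimeError("no healthy containers available")


pool = [Container("web-1"), Container("web-2", healthy=False), Container("web-3")]
balancer = RoundRobinBalancer(pool)
picked = [balancer.pick().name for _ in range(4)]
print(picked)  # ['web-1', 'web-3', 'web-1', 'web-3']
```

Requests rotate across the pool, and the container marked unhealthy is skipped until its flag changes.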

Why Is Container Orchestration Needed?

With only a small number of containers, you can handle container management tasks manually or with simple scripts. However, this becomes increasingly difficult as the number of containers grows, which makes it a prime use case for CO software.

Other tools, such as Kafka, can be used to facilitate communication between containers.

System administrators and DevOps teams can use CO to manage massive server farms housing thousands of containers. Without CO, everything would have to be done manually, and the situation would rapidly become unsustainable.

How Does Container Orchestration Work?

The exact procedure varies depending on the CO tool used. Typically, container orchestration tools read YAML or JSON files that describe an application's configuration. The tool relies on these configuration files to determine how and where to retrieve container images, how to set up networking between containers, where to store logs, and how to mount storage volumes.

The CO tool schedules the deployment of containers into clusters and automatically selects the most suitable host. Once a host has been selected, the CO tool manages the container's lifecycle according to the parameters specified in the container's definition file.
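
The host-selection step can be sketched as a filter followed by a ranking. This is a simplified illustration under assumed rules (filter out hosts that lack capacity, then prefer the host with the most free CPU); real schedulers weigh many more factors, and the `Host`, `select_host`, and `schedule` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    free_cpu: float   # CPU cores not yet allocated
    free_mem_mb: int  # memory not yet allocated, in MB


def select_host(hosts, cpu, mem_mb):
    """Return the feasible host with the most free CPU, or None."""
    feasible = [h for h in hosts if h.free_cpu >= cpu and h.free_mem_mb >= mem_mb]
    if not feasible:
        return None
    return max(feasible, key=lambda h: (h.free_cpu, h.free_mem_mb))


def schedule(hosts, cpu, mem_mb):
    """Reserve resources on the selected host and return its name."""
    host = select_host(hosts, cpu, mem_mb)
    if host is None:
        return None  # nothing fits; a real tool would queue the request
    host.free_cpu -= cpu
    host.free_mem_mb -= mem_mb
    return host.name


hosts = [
    Host("node-a", free_cpu=2.0, free_mem_mb=4096),
    Host("node-b", free_cpu=4.0, free_mem_mb=2048),
    Host("node-c", free_cpu=1.0, free_mem_mb=8192),
]
first = schedule(hosts, cpu=1.5, mem_mb=1024)   # node-b has the most free CPU
second = schedule(hosts, cpu=2.0, mem_mb=3000)  # only node-a still fits
third = schedule(hosts, cpu=8.0, mem_mb=1024)   # no host is large enough
print(first, second, third)  # node-b node-a None
```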

Container orchestration tools can be used in any environment where containers can run. Kubernetes, Docker Swarm, Amazon Elastic Container Service (ECS), and Apache Mesos are just a few of the many tools that offer CO functionality.

With the configuration file, you can:

- Specify which container images make up the application.

- Tell the system where the images can be found, such as Docker Hub.

- Provision the containers with the resources they need (such as storage).

- Define and secure the network connections between containers.

- Specify where logs are stored and how storage volumes are mounted.
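
To make the list above concrete, here is a sketch of what such a descriptor might contain, written as a Python dictionary and serialized to JSON. Every field name and value is hypothetical and chosen for illustration; it does not follow the schema of Kubernetes, Docker Swarm, or any other tool, each of which defines its own format.

```python
import json

# Hypothetical descriptor: field names are illustrative, not a real schema.
app_config = {
    "name": "shop",
    "containers": [
        {
            "name": "web",
            "image": "example/web:1.0",   # which image makes up this part of the app
            "registry": "docker.io",      # where the image can be found
            "volumes": [{"name": "uploads", "mount_path": "/data/uploads"}],
            "log_path": "/var/log/web",   # where logs are written
        },
        {
            "name": "db",
            "image": "example/db:2.3",
            "registry": "docker.io",
            "volumes": [{"name": "dbdata", "mount_path": "/var/lib/db"}],
            "log_path": "/var/log/db",
        },
    ],
    # Which containers may talk to each other.
    "networks": [{"name": "backend", "members": ["web", "db"]}],
}


def validate(config):
    """Return a list of problems found in the descriptor (empty if none)."""
    problems = []
    names = {c["name"] for c in config["containers"]}
    for c in config["containers"]:
        for key in ("image", "registry"):
            if not c.get(key):
                problems.append(f"{c['name']}: missing {key}")
    for net in config.get("networks", []):
        for member in net["members"]:
            if member not in names:
                problems.append(f"network {net['name']}: unknown container {member}")
    return problems


print(json.dumps(app_config, indent=2))
print(validate(app_config))  # []
```

Checking the descriptor before acting on it is one reason a declarative file is convenient: mistakes such as a network that references a missing container can be caught before anything is deployed.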

Multi-Cloud Container Orchestration

When employing a multi-cloud strategy, you use cloud services from multiple vendors. Instead of running on a single infrastructure, multi-cloud CO allows containers to move freely between different cloud providers.

Although there are use cases where the complexity of setting up container orchestration across various providers outweighs the benefits, in most situations the effort pays off. The benefits include:

- Enhanced infrastructure functionality.

- Possibilities for optimizing cloud costs.

- Greater flexibility and portability of containers.

- Reduced threat of vendor lock-in.

- Additional scalability options.

Containers and multi-cloud are a perfect match. The portability and run-anywhere nature of containers complement the use of multiple environments, and containerized infrastructure unlocks the full potential of using several cloud providers.

Container Orchestration and Microservices

Now that we have covered how containers and orchestration work, we can turn our attention to microservices. Because container orchestration solutions work best with software that follows basic microservices principles, it is important to understand the concept. This does not mean that you can only use container orchestration platforms with the most streamlined microservice applications. The platform will still manage your workloads correctly, but you will see the greatest benefit when your architecture is optimized as well.

With microservices, you can change the software by testing and redeploying just one of its modules, which makes modifications faster and simpler. One of the many benefits of microservices is that they allow the system to be divided into smaller, more manageable pieces.

Running many containers across multiple servers requires a level of DevOps resources that your business might not be ready to provide, regardless of whether you deploy on bare metal or within virtual machines. Orchestration bridges this gap by providing developers with services that improve their ability to monitor, schedule, and manage multiple containers in production.

The benefits of integrating microservices orchestration into your architecture include:

- Makes it easier to manage an environment with many moving parts.

- Shows you when to start the right containers.

- Allows containers to communicate with one another.

- Helps keep your entire system up and running.

If your firm is adopting a CO strategy, orchestration tools can significantly streamline operations.

The Benefits of Container Orchestration

Containers offer a simpler way to build, test, deploy, and redeploy applications across a variety of environments, from a developer's local laptop to an on-premises data center and even the cloud.

There are many benefits to using them, including the following:

- Better application development

Containers speed up every stage of application development, from prototyping to production. It is easy to roll out new versions with new features in production, and just as easy to revert to an older version if necessary. This makes them ideal for agile environments. Because containerized software is portable, DevOps processes become more efficient.

- Simplified application installation and deployment

Because everything related to the application is contained within containers, application installation is simplified. They make it possible to quickly and easily start up new instances whenever there is a requirement to scale up as a result of increased demand or traffic.

- Lower resource requirements

Containers do not include operating system images, so they are lighter than traditional applications. They are also considerably simpler to administer and maintain than VMs, which is a significant benefit if you already work in a virtualized environment. CO tools can assist with resource management.

- Reduced expenses

A single host can accommodate multiple containers even in environments that do not use any kind of CO. Businesses that use CO software, however, have more containers residing on each host than those that do not.

- Improved productivity

Containers already enable rapid testing and deployment, as well as patching and scaling. CO tools make these operations even more efficient.

- Enhanced security

Because applications and their processes are isolated within their own containers, application security is significantly improved. In addition, CO tools ensure that only specific resources are shared between users.

- Ideally suited to a microservices architecture

Traditional applications are almost always monolithic; that is, they consist of tightly coupled components that work together to carry out the functions for which they were developed. Microservices are defined by the loose coupling of their components, with each service able to be scaled or modified separately. Containers were created with exactly this kind of deployment in mind. CO makes it possible for containerized services to work together more seamlessly.

Best Container Orchestration Tools

CO tools offer a framework for managing microservices architectures and individual containers at scale. The four best CO tools are briefly discussed below:

Kubernetes

Kubernetes is an open-source CO platform that has been highly successful in recent years and has garnered a lot of attention. It makes it easier for developers to build, scale, schedule, and monitor containerized applications.

Kubernetes offers numerous advantages over competing management systems, such as its extensive feature set, its active developer community, and its fit with the rise of cloud-native application development. It is also very adaptable and portable, which allows it to be deployed in a variety of environments and integrated with other systems such as service meshes.

Kubernetes is also highly declarative, which enables the automation that is essential to CO. Developers and system administrators use it to describe the desired state of a system, and Kubernetes then works dynamically to bring the system to that state.
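
The declarative idea can be sketched as a reconciliation step that compares the desired state with the observed state and derives the actions needed to close the gap. This is a conceptual illustration only and does not use the Kubernetes API; the `reconcile` and `apply_actions` functions and the replica-count dictionaries are assumptions made for the example.

```python
def reconcile(desired, actual):
    """Return the actions needed to move `actual` toward `desired`.

    Both arguments map a service name to a replica count.
    """
    actions = []
    for service in sorted(set(desired) | set(actual)):
        want = desired.get(service, 0)
        have = actual.get(service, 0)
        if want > have:
            actions.append(("start", service, want - have))
        elif want < have:
            actions.append(("stop", service, have - want))
    return actions


def apply_actions(actions, actual):
    """Simulate carrying out the actions and return the new observed state."""
    new_state = dict(actual)
    for verb, service, count in actions:
        delta = count if verb == "start" else -count
        new_state[service] = new_state.get(service, 0) + delta
        if new_state[service] == 0:
            del new_state[service]
    return new_state


desired = {"web": 3, "worker": 2}
actual = {"web": 1, "worker": 2, "legacy": 1}

actions = reconcile(desired, actual)
print(actions)  # [('stop', 'legacy', 1), ('start', 'web', 2)]

actual = apply_actions(actions, actual)
print(reconcile(desired, actual))  # [] once the states match
```

The user only edits `desired`; running the comparison repeatedly is what keeps the system converging on that state after failures or manual changes.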

Docker Swarm

Docker Swarm consists of two primary components: manager nodes and worker nodes. Manager nodes assign tasks to worker nodes, which then carry those tasks out.

Docker Swarm uses a declarative model that lets users describe the state they want their cluster to be in. Beyond that, Docker Swarm offers cluster management integrated with Docker Engine, multi-host networking, high levels of security, scaling, load balancing, and rolling updates.
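
The manager and worker roles can be pictured with a small simulation. This is a toy model rather than Docker's actual scheduling logic: the `Manager` and `Worker` classes are hypothetical, and the assumed rule is simply that each new task goes to the worker currently running the fewest tasks.

```python
class Worker:
    """A node that runs whatever tasks it is given."""

    def __init__(self, name):
        self.name = name
        self.tasks = []

    def run(self, task):
        self.tasks.append(task)


class Manager:
    """A node that turns a service request into tasks and delegates them."""

    def __init__(self, workers):
        self.workers = list(workers)

    def create_service(self, name, replicas):
        assignments = {}
        for i in range(1, replicas + 1):
            task = f"{name}.{i}"
            # Assumed policy: pick the least-loaded worker (first one on a tie).
            worker = min(self.workers, key=lambda w: len(w.tasks))
            worker.run(task)
            assignments[task] = worker.name
        return assignments


workers = [Worker("worker-1"), Worker("worker-2")]
manager = Manager(workers)
assignments = manager.create_service("web", replicas=3)
print(assignments)
# {'web.1': 'worker-1', 'web.2': 'worker-2', 'web.3': 'worker-1'}
```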

Apache Mesos

Apache Mesos is another open-source solution for cluster management. It operates between the application layer and the operating system, which simplifies and speeds up the deployment and management of applications in large clusters.

Mesos was the first open-source cluster management service to handle workloads in a distributed environment using dynamic resource sharing and isolation. Isolation means separating a single program from all other running processes. Mesos originated at the University of California, Berkeley, and is now a project of the Apache Software Foundation.

Mesos offers applications the resources available on all of the machines in the cluster and frequently updates these offers to include resources that completed applications have freed up. This enables applications to make the most informed decision possible about which task to run on which machine.
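
The offer-based model can be sketched in two steps: the cluster side advertises free resources, and the application side decides which offers to use for which task. This is a simplified model and not the Mesos API; `Offer`, `make_offers`, and `place_tasks` are hypothetical names, and the first-fit placement rule is an assumption chosen for brevity.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    agent: str    # machine the resources belong to
    cpus: float
    mem_mb: int


def make_offers(free):
    """Cluster side: advertise the free resources of each machine."""
    return [
        Offer(agent, cpus, mem)
        for agent, (cpus, mem) in free.items()
        if cpus > 0 and mem > 0
    ]


def place_tasks(offers, tasks):
    """Application side: put each task on the first offer that still fits."""
    remaining = {o.agent: [o.cpus, o.mem_mb] for o in offers}
    placements = {}
    for name, cpus, mem in tasks:
        for agent, (free_cpus, free_mem) in remaining.items():
            if free_cpus >= cpus and free_mem >= mem:
                remaining[agent][0] -= cpus
                remaining[agent][1] -= mem
                placements[name] = agent
                break
    return placements  # tasks that fit nowhere are left out


free = {"agent-1": (2.0, 2048), "agent-2": (4.0, 8192)}
tasks = [("etl", 3.0, 4096), ("cache", 1.0, 1024), ("batch", 2.0, 4096)]
placements = place_tasks(make_offers(free), tasks)
print(placements)  # {'etl': 'agent-2', 'cache': 'agent-1'}
```

Here `batch` stays unplaced because no offer has enough CPU left; in the model described above it would wait for a later round of offers that includes resources freed by finished work.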

Nomad

Nomad is a straightforward container orchestrator that requires only a single binary to run. It deploys containers based on declarative infrastructure-as-code configuration.

Nomad simplifies container deployment and management across several infrastructures, including the cloud and on-premises servers. It's advantageous in many ways, including its ease of use, dependability, high scalability, and compatibility with other systems (such as Terraform, Consul, and Vault).

Conclusion

Container orchestration is the automation of container lifecycle management in high-volume, ever-changing environments. It has greatly influenced the speed, agility, and efficiency with which developers can deliver applications to the cloud. Businesses in particular have complex security and governance requirements, and they need process rules that are simple to adopt and enforce. Container orchestration can be an incredibly useful method for running containers at scale, especially when combined with resource management and load balancing. This allows many businesses to increase their productivity and expand their operations.
