What Is Canary Deployment? Meaning, Strategy

Synthetic tests alone are sometimes not sufficient to uncover issues, because a CI environment differs from a production environment. As a result, some problems aren't discovered until they are already affecting production, at which stage the damage has been done. This is one reason Agile development has changed the way we build and release software.

Canary deployment is part of the approach developers follow today. In this process, the latest version/release of an application is rolled out while the old one is still in use. The upgrade is first introduced to a small number of servers, tested there, and then rolled out to the remaining servers.

Learn about this latest method in detail by reading this article.

What Is Canary Deployment?

Canary deployment works much like the blue-green method, with one difference. Rather than waiting until a complete second environment has been deployed and then switching everything over at once, it switches over only a portion of the servers or nodes first and finishes the rest once that portion has been verified.

When it comes to implementing canary deployments, you can configure your staging/testing environment in various ways. The simplest method is to set up your environment behind your load balancer as usual, but keep one or more extra, unused servers or nodes (depending on the size of your application).

This spare node or server group is the target of your deployment in the CI/CD process. After you build, deploy, and test on it, you add this node back into your load balancer for a short time and a small audience. This lets you verify that the changes work before performing the same procedure on the other nodes in your cluster.

The alternative approach to setting up a canary deployment uses the feature toggle development pattern. With a feature toggle (also known as a feature flag), you build and ship changes to an application that stay inactive until a configuration setting turns them on.

With this approach, you can remove a node from your cluster, deploy to it, and add it back without using the load balancer to test or manage anything. Once all the nodes have been updated, the feature is turned on for a small group of users before being made available to everyone.

The disadvantage of this technique is the extra time and money required to build feature-toggle support into your application. The age and scale of your application determine how difficult, or even impossible, it will be to add this functionality.
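
The percentage-based toggle described above can be sketched as follows. This is a minimal illustration rather than a production flag system: the names `bucket_for`, `is_enabled`, and the flag name `new-checkout` are hypothetical, and hashing the user ID is just one common way to keep each user's assignment stable between requests.

```python
import hashlib


def bucket_for(user_id: str, flag_name: str) -> int:
    """Map a user to a stable bucket in the range 0..99 (hypothetical helper)."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(user_id: str, flag_name: str, rollout_percent: int) -> bool:
    """Return True if the flag is on for this user at the given rollout percentage."""
    return bucket_for(user_id, flag_name) < rollout_percent


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(1000)]
    enabled = sum(is_enabled(u, "new-checkout", 5) for u in users)
    print(f"{enabled} of {len(users)} users see the new feature")
```

Because the bucket depends only on the user and the flag name, raising the rollout percentage adds new users without taking the feature away from anyone who already had it.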

Canary Deployment vs Canary Release

Before comparing the two, let's first look at what a canary release means.

An application that has been distributed to consumers in its entirety but is known to be unstable or to have brand-new, untried functionality is called a "canary release."

It is sometimes offered as an alpha, beta, or early access version. In the open-source community, the stable and development branches are frequently kept apart to encourage users to experiment with new releases as they develop.

Mozilla Firefox, which delivers nightly and beta versions, and Google Chrome, which has a Canary channel that gives early access to new versions, are two examples of programs that make use of canary releases.

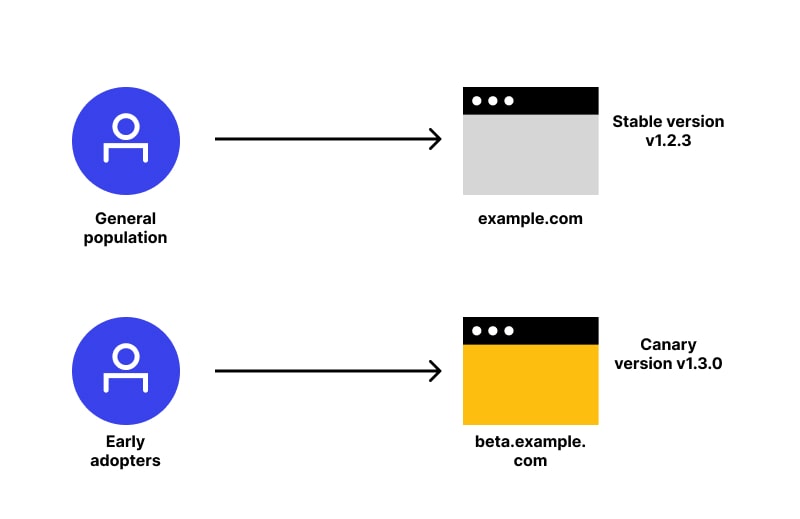

In contrast, canary deployment is the practice of making staged releases in software engineering. A select subset of users receives a software update first, allowing them to test it and provide feedback.

After the change is approved, the remainder of the users receive the update.

The same strategy also applies to APIs: a new version of an API can be released gradually as an API gateway canary deployment.

How do Canary Deployments Work?

Canary deployments entail running two versions of the program simultaneously. The current version is referred to as "the stable" and the new version as "the canary." Rolling and side-by-side deployments are the two methods for releasing the update.

Let's examine their operation:

- Side-by-Side Deployments

The side-by-side method is very similar to blue-green deployment. Instead of upgrading the machines incrementally, the canary version is installed in a brand-new duplicate environment created for it.

Imagine that the application uses a database, a few services, and several machines or containers. The steps are then:

- Clone the infrastructure resources and install the upgrades on the clone.

- Once the canary is running in the new environment, expose it to a subset of the user population. Typically, a router, load balancer, reverse proxy, or other business logic in the application is used for this.

- Watch the canary while gradually moving more and more users away from the control version, just as with rolling deployments. The process is repeated until an issue is found or all users have switched to the canary.

- Remove the control environment after the deployment is finished to free up resources. The canary is now the stable version.
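
The traffic split between the two environments can be sketched as a weighted router. This is an illustrative model only: in practice the split is usually configured in a load balancer or reverse proxy, and the `CanaryRouter` class and its method names are hypothetical.

```python
import random
from collections import Counter
from typing import Optional


class CanaryRouter:
    """Sends a configurable percentage of requests to the canary environment."""

    def __init__(self, canary_percent: float = 0.0, seed: Optional[int] = None) -> None:
        self._rng = random.Random(seed)
        self.canary_percent = 0.0
        self.set_canary_percent(canary_percent)

    def set_canary_percent(self, percent: float) -> None:
        if not 0 <= percent <= 100:
            raise ValueError("percent must be between 0 and 100")
        self.canary_percent = percent

    def route(self) -> str:
        # random() is in [0, 1), so 0% never picks the canary and 100% always does.
        return "canary" if self._rng.random() * 100 < self.canary_percent else "stable"


if __name__ == "__main__":
    router = CanaryRouter(canary_percent=10, seed=42)
    print(Counter(router.route() for _ in range(10_000)))
```

Raising `canary_percent` step by step models moving users away from the control version; setting it back to 0 sends everyone to the stable environment again.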

- Rolling Deployments

Releasing changes in waves or stages, a few machines at a time, is called a rolling deployment. The rest of the machines keep running the stable version. This is the simplest way to do a canary deployment.

- As soon as the canary is running on one server, a few users start receiving the update.

- While this is going on, solution owners monitor how the updated machines are performing. It is a good idea to look for errors and performance issues while also listening to user feedback.

- As confidence in the canary grows, continue installing it on the other machines until every one of them is running the most recent version.

- If you notice a problem or get substandard results, the change can be reversed by returning the updated nodes or servers to their original state.
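
The rolling steps above can be sketched as a loop that upgrades one server at a time and restores every upgraded server as soon as a health check fails. This is a simplified model under stated assumptions: `rolling_deploy` and the `is_healthy` callback are hypothetical stand-ins for real deployment and monitoring tooling.

```python
from typing import Callable, Dict, List


def rolling_deploy(
    servers: List[str],
    versions: Dict[str, str],
    new_version: str,
    is_healthy: Callable[[str], bool],
) -> bool:
    """Upgrade servers one by one; roll back all upgraded servers on the first failure."""
    previous: Dict[str, str] = {}
    for server in servers:
        previous[server] = versions[server]  # remember the original state
        versions[server] = new_version       # install the canary on this server
        if not is_healthy(server):
            # Problem found: return every updated server to its original version.
            for upgraded, old_version in previous.items():
                versions[upgraded] = old_version
            return False
    return True


if __name__ == "__main__":
    fleet = {"web-1": "v1", "web-2": "v1", "web-3": "v1"}
    ok = rolling_deploy(list(fleet), fleet, "v2", is_healthy=lambda s: True)
    print(ok, fleet)
```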

Step by step, a canary deployment works as follows:

- At the start, the current version receives all user traffic.

- The canary deployment is carried out using brand-new pods and receives very little traffic, for example 5%, while the other 95% of users continue to use the prior version.

- The new version can be put through various tests (such as smoke tests) without affecting most customers.

- The share of traffic sent to the canary at any given time is determined by an automated decision-making process.

- If the new version works as anticipated, a larger share of live traffic is routed to it, and the procedure is repeated at each canary traffic percentage (for example, 5%, 25%, 50%, 75%, and 100%).

- If the canary traffic encounters issues with the latest version at any point, the Kubernetes service is reverted to the earlier release. Most users will hardly be affected. Once the canary version is removed, everything returns to how it was.

- The previous version may be deleted once the new one has received all traffic.
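
The staged procedure above can be sketched as a small decision loop. This is a hedged illustration: the stage percentages come from the example in the list, while `run_canary`, the `error_rate_at` callback, and the 1% error threshold are assumptions made for the sketch rather than values from any particular tool.

```python
from typing import Callable, List, Sequence, Tuple

STAGES: Tuple[int, ...] = (5, 25, 50, 75, 100)


def run_canary(
    error_rate_at: Callable[[int], float],
    max_error_rate: float = 0.01,
    stages: Sequence[int] = STAGES,
) -> Tuple[str, List[int]]:
    """Walk through the traffic stages; revert as soon as one stage looks unhealthy."""
    completed: List[int] = []
    for percent in stages:
        # error_rate_at stands in for real monitoring at this traffic share.
        if error_rate_at(percent) > max_error_rate:
            return "rolled_back", completed
        completed.append(percent)
    return "promoted", completed


if __name__ == "__main__":
    print(run_canary(lambda percent: 0.0))
    print(run_canary(lambda percent: 0.05 if percent >= 50 else 0.0))
```

In the second call the canary passes the 5% and 25% stages, fails at 50%, and the loop reports a rollback without ever sending it the full traffic.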

Canaries' History

Canaries were an essential part of the history of British mining, where they were used to detect potentially dangerous gases like carbon monoxide (CO) before they could affect people (the canary is more vulnerable to airborne toxins than humans). In software deployment, the term "canary analysis" serves a similar purpose.

Just as the canary warned miners of problems with the air they were breathing, DevOps engineers carry out this kind of analysis to see whether a new release in the CI/CD process would cause problems for the business.

Advantages of Canary Deployments

- Simple rollback

If any significant issues are discovered, the deployment can be rolled back immediately. All it takes is switching off a feature flag or routing traffic back to the earlier version.

- Capacity Testing

When implementing a new microservice to replace a legacy system, it's useful to test in a production environment how much capacity you'll require. By deploying a canary and observing it with a small subset of users, you can estimate how much capacity you'll need to add in order to serve the full load.
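
As a rough worked example of that estimate, the sketch below extrapolates from the throughput a canary sustained to the number of instances needed at peak. It assumes capacity scales linearly with instance count, which real systems often do not, and the function name, parameters, and 25% headroom are all hypothetical.

```python
import math


def estimate_instances(
    canary_rps: float,
    canary_instances: int,
    total_peak_rps: float,
    headroom: float = 0.25,
) -> int:
    """Estimate instances needed at peak, assuming linear scaling (an assumption)."""
    if canary_rps <= 0 or canary_instances <= 0:
        raise ValueError("canary measurements must be positive")
    # Throughput one instance sustained during the canary phase.
    per_instance_rps = canary_rps / canary_instances
    # Peak load plus a safety margin, divided by what one instance can handle.
    return math.ceil(total_peak_rps * (1 + headroom) / per_instance_rps)


if __name__ == "__main__":
    # Two canary instances sustained 100 requests/s; expected peak is 2000 requests/s.
    print(estimate_instances(canary_rps=100, canary_instances=2, total_peak_rps=2000))
```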

Canary deployment and Kubernetes

Kubernetes is widely used, and the procedure for performing canary deployments on it is comparable to the one used on AWS, although the terminology differs.

A Service acts as a load balancer for the pods that are "registered" to it. There are also Deployments, which function similarly to AWS Auto Scaling groups (ASGs).
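
One simple way to get a canary out of these building blocks is to run a stable Deployment and a canary Deployment behind the same Service and control the traffic share through the replica ratio. The sketch below only does that arithmetic; it assumes the Service selects the pods of both Deployments and spreads requests roughly evenly across them, and `split_replicas` is a hypothetical helper, not part of any Kubernetes client.

```python
from typing import Tuple


def split_replicas(total_replicas: int, canary_percent: float) -> Tuple[int, int]:
    """Return (stable, canary) replica counts approximating the desired canary share."""
    if total_replicas < 2:
        raise ValueError("need at least two replicas to run stable and canary together")
    if not 0 < canary_percent < 100:
        raise ValueError("canary_percent must be between 0 and 100, exclusive")
    canary = round(total_replicas * canary_percent / 100)
    # Keep at least one pod on each side so both versions actually serve traffic.
    canary = min(max(canary, 1), total_replicas - 1)
    return total_replicas - canary, canary


if __name__ == "__main__":
    stable, canary = split_replicas(total_replicas=20, canary_percent=5)
    print(f"stable={stable} canary={canary}")
```

With few replicas the achievable percentages are coarse (four pods can only give 25%, 50%, or 75%), which is one reason finer-grained splits are often delegated to an ingress controller or service mesh.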

Disadvantages of Canary deployment

Since nothing is foolproof, such a deployment also has some drawbacks, including the following:

- Costs

Due to the additional infrastructure needed for a side-by-side canary deployment, costs are higher. You can use the cloud to your advantage by adding and removing resources as needed to cut costs.

- Complexity

Here, canary deployments are very similar to blue-green deployments. Managing several production machines, migrating users, and maintaining the new system are demanding tasks.

What is blue-green deployment?

Blue-green deployment separates your application's infrastructure into two equally resourced zones, termed "Blue" and "Green." The current version of your application runs in one zone (Blue), with your load balancers directing production traffic to it. Your new version can then be deployed to the other half of your environment (Green).

While the green zone is being deployed and tested, your load balancers keep routing traffic to blue. The blue environment is not modified, so it remains available to production clients throughout.

Once the new version has been fully deployed and tested, you can switch your load balancer to target the green environment without your users noticing a change.
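
The blue-green flow described above can be sketched as a switch that tracks which zone is live. This is a toy model under stated assumptions: a real cutover happens in the load balancer configuration, and the `BlueGreenSwitch` class and its method names are hypothetical.

```python
from typing import Dict, Optional


class BlueGreenSwitch:
    """Tracks two zones and which one currently receives production traffic."""

    def __init__(self, live_version: str) -> None:
        self.versions: Dict[str, Optional[str]] = {"blue": live_version, "green": None}
        self.live = "blue"

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def deploy_to_idle(self, version: str) -> None:
        # The live zone is never touched while the idle zone is deployed and tested.
        self.versions[self.idle] = version

    def cut_over(self) -> None:
        if self.versions[self.idle] is None:
            raise RuntimeError("nothing deployed to the idle zone")
        self.live = self.idle  # all traffic moves in one step

    def live_version(self) -> Optional[str]:
        return self.versions[self.live]


if __name__ == "__main__":
    switch = BlueGreenSwitch("v1")
    switch.deploy_to_idle("v2")
    switch.cut_over()
    print(switch.live, switch.live_version())
```

Unlike the canary approach, there is no intermediate traffic percentage here: users are either all on the old zone or all on the new one.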

Canary Deployment vs Blue Green

In the simplest terms, the difference between blue-green and canary deployment is:

- Canary deployments are used to test a brand-new feature in a production environment with less risk.

- Blue-green deployments are primarily employed to eliminate downtime from a process.

There is a lot to consider, and no single solution fits every situation. You should generally consider blue-green deployments if any of the following statements are true:

- Everyone has faith in the updated version. Since the code has undergone extensive testing, the likelihood of failure is believed to be quite low.

- You work in small, safe iterations.

- All users must be switched over in one go.

Canary is most likely a superior option when:

- You are not fully confident in the new version.

- Performance or scaling is a major concern.

- A significant update is being made, such as adding a brand-new or unknown function.

Components of Canary Deployment Planning

- Stages

- Duration

- Metrics

- Assessment
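
One possible reading of these four components is sketched below as a small plan object. The interpretation is an assumption, since the list above does not define the terms: stages are taken to be traffic percentages, duration the time spent at each stage, metrics the values watched, and assessment the pass/fail check. The class, field, and metric names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CanaryPlan:
    stages: List[int]                 # traffic percentage at each stage
    stage_duration_minutes: int       # how long to observe each stage
    max_thresholds: Dict[str, float]  # metric name -> highest acceptable value

    def total_duration_minutes(self) -> int:
        return len(self.stages) * self.stage_duration_minutes

    def assess(self, observed: Dict[str, float]) -> bool:
        """Pass only if every planned metric was observed and is within its threshold."""
        return all(
            name in observed and observed[name] <= limit
            for name, limit in self.max_thresholds.items()
        )


if __name__ == "__main__":
    plan = CanaryPlan(
        stages=[5, 25, 50, 100],
        stage_duration_minutes=30,
        max_thresholds={"error_rate": 0.01, "p95_latency_ms": 300},
    )
    print(plan.total_duration_minutes())
    print(plan.assess({"error_rate": 0.002, "p95_latency_ms": 250}))
```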

When is it not possible to use Canary deployment?

Canary deployments are not suitable for every situation. You can't use them when:

- A failure has disastrous economic repercussions, as in banking systems.

- There is no way to remotely update the software installed on users' machines.

- You work in fields where continuous deployment is prohibited, such as the aerospace or medical industries.

- You must significantly alter the database or storage backend.

- You're working with life-sustaining or mission-critical systems, such as programs for electricity networks or nuclear reactors.

Conclusion

The canary deployment strategy is favored because it lowers the risk of introducing changes into production while requiring relatively little extra infrastructure. By using a canary release, businesses can test the new version under real conditions instead of exposing it to all users simultaneously.

Automation and consistency of deployment are equally crucial if you want there to be no downtime when updating and changing your environments. It is quite beneficial to use your CI/CD pipeline to control the automatic deployment, testing, and cutover of application environments.

In conclusion, such a deployment is not as difficult as you might assume. There are numerous ways to carry it out, but Kubernetes may feel the most natural. Once everything is automated, you can deploy more quickly and with greater confidence, and keep downtime for your business close to zero.
