What Is an Ingress Controller? Explained by Wallarm

Ingress is a Kubernetes (k8s) API object that manages external access to the services in a cluster, letting them serve incoming requests as required. In production-grade deployments, this external traffic generally arrives over HTTP or HTTPS.

Because this communication can make or break your Kubernetes (or other containerized) applications, you need a solid understanding of Ingress and Kubernetes Ingress Controllers before anything else. This article provides it.

An Introductory Guide to Ingress Controllers

In the complex sphere of system administration, one crucial instrument stands out: the Ingress Controller. It greatly simplifies how users reach services inside intricate network configurations. Let's look at this tool closely to understand its various applications.

Inspecting the Fundamental Tasks

Ingress Controllers, sometimes described as Kubernetes' silent partners, carry out their roles with precision. Kubernetes has ushered in a new era of automated deployment, scaling, and management of containerized applications, and these components have gained increasing recognition alongside it. Their primary function is to handle incoming application requests in coordination with Kubernetes, acting like traffic directors that steer user requests along the network lanes.

Interpreting the Ingress Controller's Functionality

To grasp the scope of traffic supervision, imagine an Ingress Controller as a conductor leading a symphony. It governs external communication with the services inside the cluster, predominantly over HTTP and HTTPS. By applying the routing rules defined in Ingress resources, it processes and fulfills user requests, ensuring a smooth user experience.

The Influence of Ingress Controllers

Ingress Controllers leave a significant footprint. They act as a single entry point to a Kubernetes cluster, which centralizes traffic monitoring and strengthens protection. They also carry out vital functions such as traffic routing, SSL/TLS offloading, name-based virtual hosting, and more.

Simplifying Processes with Ingress Controllers

An Ingress Controller operates on predefined routing rules. These rules describe how incoming connections reach the cluster's services. Once the rules are in place, the controller translates them into configuration for its load balancer or reverse proxy, which then channels traffic accordingly.
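The Python sketch below gives a rough illustration of that translation step. It renders a list of (host, path, service, port) rules into a simplified NGINX-style configuration. It is a toy built on assumptions, not what any real controller emits. The function name `render_proxy_config` is hypothetical, and the output omits most of the directives a production config needs. It also proxies to each Service's cluster DNS name (`service.namespace.svc.cluster.local`), whereas some controllers send traffic directly to pod endpoints.

```python
from collections import defaultdict
from typing import Iterable, Tuple

Rule = Tuple[str, str, str, int]  # (host, path, service, port)


def render_proxy_config(rules: Iterable[Rule], namespace: str = "default") -> str:
    """Render routing rules as a simplified, NGINX-style configuration string.

    Hypothetical helper for illustration only; real controllers generate
    far richer configuration than this.
    """
    # Group the rules by host: one server block per virtual host.
    by_host = defaultdict(list)
    for host, path, service, port in rules:
        by_host[host].append((path, service, port))

    blocks = []
    for host in sorted(by_host):
        lines = ["server {", f"    server_name {host};"]
        for path, service, port in sorted(by_host[host]):
            # Assumption: proxy to the Service's cluster DNS name.
            upstream = f"{service}.{namespace}.svc.cluster.local:{port}"
            lines.append(f"    location {path} {{ proxy_pass http://{upstream}; }}")
        lines.append("}")
        blocks.append("\n".join(lines))
    return "\n\n".join(blocks)
```

For example, `render_proxy_config([("xyz.com", "/home", "home-service", 8080)])` yields one `server` block whose `location /home` proxies to `home-service.default.svc.cluster.local:8080`. Whenever the rules change, the controller regenerates the configuration and reloads the proxy.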

Snapshot of Ingress Controllers

| Attribute | Explanation |
|---|---|
| Unified Entryway | Ingress Controllers serve as a consolidated access point to a Kubernetes cluster, simplifying traffic supervision. |
| Traffic Channeling | They distribute incoming traffic among different pods, maintaining steady service availability. |
| SSL Offloading | They terminate SSL/TLS connections, relieving backend servers of that workload. |
| Support for Multiple Domains | They serve several websites with distinct domain names from a single IP address. |
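Serving multiple domains from one IP address relies on name-based virtual hosting. The controller reads the host a request is addressed to and picks the matching site. Below is a minimal sketch of that host matching. It follows the wildcard rule described in the Kubernetes Ingress documentation, where `*.example.com` matches exactly one extra DNS label. The helper names are hypothetical, and the rule that exact hosts win over wildcards is an assumption made for this sketch.

```python
from typing import Dict, Optional


def host_matches(pattern: str, host: str) -> bool:
    """Match a request host against an Ingress-style host pattern.

    A leading "*." matches exactly one DNS label, so "*.example.com"
    matches "shop.example.com" but not "example.com" or "a.b.example.com".
    """
    host = host.split(":", 1)[0].lower()  # drop any ":port" suffix
    pattern = pattern.lower()
    if not pattern.startswith("*."):
        return host == pattern
    suffix = pattern[1:]  # e.g. ".example.com"
    if not host.endswith(suffix):
        return False
    label = host[: -len(suffix)]
    return label != "" and "." not in label


def pick_site(sites: Dict[str, str], host: str) -> Optional[str]:
    """Return the site serving `host`; exact patterns are tried first (assumed)."""
    exact = [p for p in sites if not p.startswith("*.")]
    wildcard = [p for p in sites if p.startswith("*.")]
    for pattern in exact + wildcard:
        if host_matches(pattern, host):
            return sites[pattern]
    return None
```

With `{"blog.example.com": "blog", "*.example.com": "catch-all"}`, a request for `blog.example.com` goes to `blog`, one for `docs.example.com` falls through to `catch-all`, and `example.org` matches nothing.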

In conclusion, Ingress Controllers streamline the management of incoming requests within a Kubernetes setup. They improve system performance, strengthen security, and provide a range of additional benefits. To fully understand their characteristics and their impact on system traffic, we need to explore their architecture further.

The ABCs of Ingress Controllers: What Are They?

Kubernetes and web routing form an extensive and intricate landscape, and one critical component within it is the ingress controller. Essentially, it leaves a trail of breadcrumbs for your server traffic, leading incoming network data to the appropriate services in a cluster. Let's break down its role and function in simpler terms.

A Closer Look at Ingress Controllers

Imagine the ingress controller as a waypoint that steers web traffic within a Kubernetes architecture. Its job is to select the target service based on the host and URL of each incoming HTTP or HTTPS request.

Kubernetes, a versatile open-source platform, automates the deployment, scaling, and management of containerized applications. Within it, the Ingress API object defines how external traffic should reach services. An Ingress resource does nothing on its own, however: the ingress controller is what reads it and puts it into effect.

Understanding How an Ingress Controller Operates

The ingress controller reads the details specified in each Ingress resource: which service should handle the traffic, which host and path rules apply, and which SSL/TLS credentials to use. Using this information, it steers traffic entering the Kubernetes cluster.
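To make "reading the Ingress resource" concrete, here is a minimal sketch that extracts the routes and TLS secrets from an Ingress manifest. It assumes the manifest has already been parsed from YAML into a plain Python dict and that every backend is a Service backend. `summarize_ingress` is a hypothetical helper; a real controller receives these objects from the Kubernetes API rather than from files. The field names (`spec.rules`, `http.paths`, `backend.service`, `spec.tls`, `secretName`) are those of the `networking.k8s.io/v1` Ingress.

```python
from typing import Any, Dict, List, Optional, Tuple

# (host, path, pathType, service name, service port); host None = all hosts
Route = Tuple[Optional[str], Optional[str], Optional[str], str, Any]


def summarize_ingress(ingress: Dict[str, Any]) -> Dict[str, Any]:
    """List the routes and TLS secrets declared by an Ingress object."""
    spec = ingress.get("spec", {})
    routes: List[Route] = []
    for rule in spec.get("rules", []):
        host = rule.get("host")  # omitted host: the rule applies to all hosts
        for p in rule.get("http", {}).get("paths", []):
            svc = p["backend"]["service"]  # assumes a Service backend
            # A Service port may be referenced by number or by name.
            port = svc["port"].get("number", svc["port"].get("name"))
            routes.append((host, p.get("path"), p.get("pathType"), svc["name"], port))
    # Map each TLS host to the Secret holding its certificate and key.
    tls = {h: t.get("secretName")
           for t in spec.get("tls", []) for h in t.get("hosts", [])}
    return {"routes": routes, "tls": tls}
```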

Here's a table that outlines the functions of an ingress controller:

| Function | Description |
|---|---|
| Traffic Management | Routes incoming traffic to the appropriate services within a Kubernetes cluster. |
| Load Distribution | Spreads network traffic across multiple servers (pods) to prevent congestion. |
| SSL/TLS Termination | Terminates SSL/TLS connections, offloading the encryption workload from backend servers. |
| Name-Based Virtual Hosting | Allows multiple host names to be served from a single IP address. |

Varied Flavors of Ingress Controllers

There are many ingress controllers, each with its own strengths and features. Widely used options include:

- NGINX Ingress Controller: Built on the high-performance NGINX reverse proxy server, it's a go-to pick for many.

- Traefik Ingress Controller: A modern HTTP reverse proxy and load balancer that integrates easily with existing infrastructure components.

- HAProxy Ingress Controller: A free, open-source solution offering high availability, load balancing, and proxying for TCP and HTTP-based applications, celebrated for its speed and reliability.

- Istio Ingress Gateway: Istio, a service mesh, provides a streamlined way to connect, manage, and secure microservices.

- Kong Ingress Controller: Kong is a fast, scalable, cloud-native API gateway, also described as a microservice abstraction layer or API middleware.

In conclusion, ingress controllers are the linchpin of efficient traffic management within a Kubernetes cluster. They handle several crucial tasks, from routing traffic and distributing load to managing SSL/TLS and enabling hostname-based virtual hosting. Understanding how ingress controllers work paves the way for designing an efficient and robust network system.

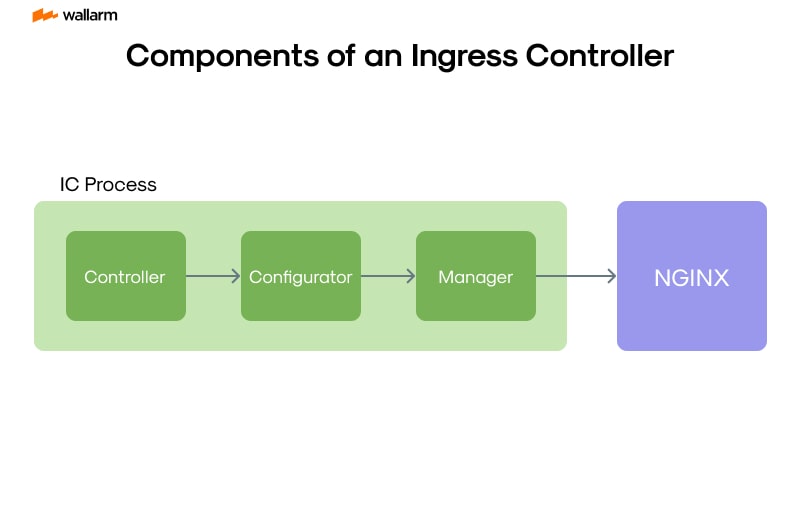

Key Components of an Ingress Controller

Decoding Kubernetes Network Mechanics: An In-depth Analysis of Ingress Controllers

Understanding the mechanisms that drive Kubernetes can feel like solving a complex, multi-layered puzzle. One key piece is the Ingress Controller, the component that orchestrates how external network traffic enters the cluster. Examining it offers insight into how Kubernetes connects incoming requests to the workloads that serve them.

Essential Networking Helpers: Ingress Resources

Ingress resources are central to Kubernetes networking. They are the input that drives an Ingress Controller, simplifying networking for a wide array of services running in many containers. Each resource defines rules that map incoming HTTP and HTTPS requests, identified by host name and URL path, to the Kubernetes services that should handle them.

An Ingress resource can be written in either YAML or JSON. The following example shows a basic Ingress resource:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: path-configuration
spec:
  rules:
    - host: example.domain.net
      http:
        paths:
          - pathType: Prefix
            path: "/"
            backend:
              service:
                name: planmaker
                port:
                  number: 443
```

In this example, every request for example.domain.net is routed to the planmaker Service on port 443.
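The `pathType: Prefix` with path `"/"` is what makes every request match here. Kubernetes compares Prefix paths element by element (split on `/`) rather than as raw strings. The hypothetical helper below is a minimal sketch of that rule as described in the Kubernetes documentation: trailing slashes are ignored, and `/foo` matches `/foo/bar` but not `/foobar`. It leaves out details such as choosing among several matching rules.

```python
def prefix_matches(prefix: str, path: str) -> bool:
    """Element-wise match in the style of Kubernetes pathType: Prefix.

    Both paths are split on "/" and empty segments are dropped, so a
    trailing slash makes no difference and "/" matches every path.
    """
    want = [segment for segment in prefix.split("/") if segment]
    have = [segment for segment in path.split("/") if segment]
    # The request matches if its first len(want) elements equal the prefix.
    return have[: len(want)] == want
```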

Traffic Directors: Ingress Controllers

An Ingress Controller works like an adept traffic controller, overseeing the flow of requests. It interprets the rules in Ingress resources and configures the underlying proxy accordingly. Implementations such as NGINX, Traefik, and HAProxy, available in both open-source and commercial editions, offer a range of approaches to match your specific Kubernetes orchestration requirements.

Behind-the-scenes Helpers: Backend Services

Behind the Ingress sit the backend services: web servers, APIs, and other workloads running in the cluster. Although they operate behind the scenes, they receive the requests that the Ingress routes to them. The Ingress resource acts as a guide, directing each request to its proper destination.

Guard of Network Security: TLS Certificates

Beyond traffic management, Ingress Controllers implement Transport Layer Security (TLS) to secure HTTPS connections. They terminate incoming HTTPS connections, decrypt the traffic, and forward it as plain HTTP to services inside the cluster. The Ingress resource complements this by allowing a separate TLS certificate, stored in a Kubernetes Secret, to be associated with each host.
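Per-host certificates work because TLS clients announce the host name they want through the Server Name Indication (SNI) extension during the handshake, before any HTTP is exchanged. The proxy uses that name to decide which certificate to present. The sketch below shows the selection step only. The function name and the fallback to a default certificate are assumptions, although falling back to a default certificate is a common behaviour.

```python
from typing import Dict, Optional


def select_certificate(tls_hosts: Dict[str, str],
                       server_name: Optional[str],
                       default_secret: str) -> str:
    """Choose which certificate Secret to present during a TLS handshake.

    `tls_hosts` maps host names to Secret names, as an Ingress "tls"
    section does. `server_name` is the host the client sent via SNI.
    """
    if not server_name:
        # No SNI: the proxy cannot tell which site is wanted.
        return default_secret
    return tls_hosts.get(server_name.lower(), default_secret)
```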

In short, the Ingress Controller is the interface between external clients and the services in a Kubernetes environment. It rests on four pillars: Ingress resources, the Ingress Controller itself, backend services, and TLS certificates. Together, they ensure that requests are routed correctly and handled securely.

The Importance of Ingress Controllers in Network Routing

Maximizing the Potential of Ingress Controllers as Traffic Managers

Orchestrating data movement in interconnected systems depends on understanding the central function of Ingress Controllers. They improve security and keep data flowing smoothly through your system. Picture them as the gatekeepers of your cluster, directing incoming requests to the right services.

Unpacking the Function of Ingress Controllers in Request Routing

In a Kubernetes setup, the Ingress Controller is responsible for handling inbound traffic. It creates and maintains HTTP and HTTPS routes to the various services, guided mainly by the host name and URL path of each incoming request. This lets it distribute requests across many services based on the URL path, producing a flexible, customized routing structure.

For example, imagine an application with both a frontend and a backend service. The Ingress Controller routes each request by examining its URL path. A request for "/frontend" is sent to the frontend service, while a request for "/backend" goes to the backend service.

The Benefits of Ingress Controllers for Managing Traffic

Using an Ingress Controller to manage traffic offers several advantages:

- Real-Time Request Routing: Ingress Controllers inspect each request as it arrives and direct it to the appropriate service according to its URL path, improving the effectiveness and reliability of your network.

- Even Load Distribution: Acting as load balancers, Ingress Controllers spread requests across the pods behind each service, avoiding bottlenecks.

- Stronger Security: Ingress Controllers reinforce network security with features such as SSL/TLS termination, which encrypts traffic between clients and the cluster.

- Lower Costs: Consolidating many services behind a single entry point, with efficient request processing and even load distribution, reduces resource use, which may translate into cost savings.
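Even distribution is often implemented with round-robin selection across a service's pod endpoints. The sketch below assumes a hypothetical list of endpoint addresses, and it also skips any endpoint that a health check has marked as down. Real controllers offer several balancing algorithms; this is a generic illustration, not any particular controller's code.

```python
import itertools
from typing import Iterable


class RoundRobin:
    """Hand out backend endpoints in turn, skipping ones marked unhealthy."""

    def __init__(self, endpoints: Iterable[str]):
        self.endpoints = list(endpoints)
        self.healthy = set(self.endpoints)
        self._cycle = itertools.cycle(self.endpoints)

    def mark(self, endpoint: str, healthy: bool) -> None:
        """Record the result of a (hypothetical) health check."""
        if healthy:
            self.healthy.add(endpoint)
        else:
            self.healthy.discard(endpoint)

    def pick(self) -> str:
        """Return the next healthy endpoint in rotation."""
        # Try each endpoint at most once per call before giving up.
        for _ in range(len(self.endpoints)):
            endpoint = next(self._cycle)
            if endpoint in self.healthy:
                return endpoint
        raise RuntimeError("no healthy endpoints")
```

With three endpoints, successive calls to `pick()` cycle through them in order. After `mark(..., False)`, the unhealthy one is simply skipped until it is marked healthy again.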

How an Ingress Controller Routes Requests: An Example

To clarify how an Ingress Controller routes traffic, let's examine a simplified example:

```yaml
# A standard networking.k8s.io/v1 Ingress routing two paths on one host
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example-ingress   # resource names must be lowercase
spec:
  rules:
    - host: www.example.com
      http:
        paths:
          - path: /frontend
            pathType: Prefix
            backend:
              service:
                name: front-line-service
                port:
                  number: 80
          - path: /backend
            pathType: Prefix
            backend:
              service:
                name: back-line-service
                port:
                  number: 80
```

In this example, requests to the URL path "/frontend" are directed to front-line-service, and requests to "/backend" are handled by back-line-service. Each service therefore receives only the traffic intended for it.

In summary, Ingress Controllers are crucial to managing traffic, offering real-time routing, even load distribution, stronger security, and potential cost reductions. For a productive Kubernetes system, understanding and using them strategically is essential for effective network management and growth.

Defining Ingress Controller: Breaking Down the Terminology

The term "Ingress Controller" comes up frequently in discussions of network protocols, but its practical significance can be hidden beneath layers of technical jargon. To understand it fully, let's dissect the term.

Decoding 'Ingress':

In networking, "ingress" refers to traffic entering a network. The ingress point acts as a gatekeeper, opening the way for external entities, such as other networks or the public internet, to reach a particular network domain. In Kubernetes, Ingress becomes an API object that manages external access to the services within a cluster.

Demystifying the 'Controller':

In general, a controller is hardware or software that oversees and steers data flows. In Kubernetes, the term has a specific meaning: a controller is a control loop that watches the state of the cluster through the API server and makes changes to move the current state toward the desired state.

Fusing Components: The Ingress Controller

Combining the two gives us the Ingress Controller, a component that provides reverse proxy capabilities, including configurable traffic routing and TLS termination, inside a Kubernetes cluster. Like a conductor guiding an orchestra, it directs inbound traffic according to the rules you define. It plays a primary role in traffic control, especially in microservice architectures, where numerous services operate together and traffic regulation becomes paramount.

To help conceptualize the Ingress Controller's operations, consider the following summary:

| Segment | Function |
|---|---|
| Ingress | Defines rules for external access to services in the cluster |
| Controller | Watches cluster state and acts to keep it in line with the desired configuration |
| Ingress Controller | Implements Ingress rules, directing inbound traffic to the right services |

In essence, Ingress Controllers are central to managing how traffic enters a cluster. By inspecting and routing traffic, they deliver requests securely and efficiently to specific service ports. Understanding them is crucial for anyone working with networking in a Kubernetes-centered environment.

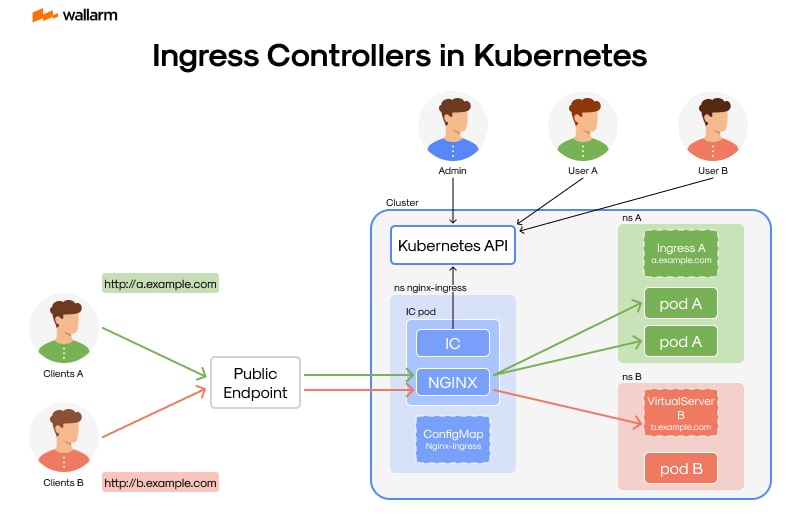

The Role of Ingress Controllers in Kubernetes

This section examines a pivotal component of Kubernetes, the Ingress Controller. It explains how the controller operates and why it matters on this widely used open-source platform.

Digging Into the Workings of the Kubernetes Ingress Controller

The Ingress Controller's main task is to interpret the rules laid out in Ingress resources. In other words, it supervises the flow of external network traffic to the cluster's services, following the rules each Ingress resource specifies.

The Ingress Controller also handles SSL/TLS, the bedrock of secure online transactions. It decrypts incoming requests and encrypts outbound responses, securing communication between the service provider and the customer.

The Key Roles of Ingress Controllers in Kubernetes

Ingress Controllers serve several important functions in Kubernetes:

- Load Management: They spread incoming traffic across pods so that no individual pod is overwhelmed, promoting high performance and reliability within the cluster.

- Communication Security: Ingress Controllers manage SSL/TLS termination, a critical component of secure web communication.

- URL Path Routing: They map distinct URL paths to different services in the cluster. For instance, /sectionA may be routed to a Section A service, while /sectionB goes to a Section B service.

- Domain-Specific Routing: They route requests for different domains to specific services within the cluster, enabling easy domain-based navigation.

How Ingress Controllers Operate in Kubernetes

Operating within Kubernetes, an Ingress Controller continuously watches Ingress resources for changes. When a resource is created, updated, or deleted, the controller promptly reconfigures itself to match the updated routing rules.

Here's an illustrative Ingress resource that such a controller would act on:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: tutorial-patrol
spec:
  rules:
    - host: homepage.mysite.com
      http:
        paths:
          - pathType: Prefix
            path: "/sectionA"
            backend:
              service:
                name: service-a   # Service names must be lowercase
                port:
                  number: 80
```

In this case, all traffic to homepage.mysite.com/sectionA, and to paths beneath it because the pathType is Prefix, is routed to service-a inside the Kubernetes cluster.

To sum up, Ingress Controllers are indispensable in Kubernetes, acting as traffic controllers for the services in a cluster. They handle critical duties such as traffic management, SSL/TLS termination, and URL- and domain-based routing, and they contribute substantially to the smooth functioning of a Kubernetes setup.

Recognizing the Functions and Features of an Ingress Controller

Ingress Controllers are noteworthy for the critical functions they perform in a Kubernetes system. In-depth knowledge of them strengthens your ability to administer a Kubernetes cluster and run its applications efficiently.

Functions Performed by an Ingress Controller

At the heart of a Kubernetes cluster, the Ingress Controller supervises the inflow of network traffic to your services. It does this by translating Ingress resources, the Kubernetes objects that define routes for HTTP and HTTPS traffic directed to your services.

- Traffic Direction: The Ingress Controller routes network traffic to the appropriate services based on the host and URL path, following the rules in Ingress resources.

- Load Balancing: In addition to routing, Ingress Controllers spread traffic evenly across multiple pods so that no single pod is overloaded, ensuring steady and efficient operation.

- SSL/TLS Termination: Decrypting incoming SSL/TLS traffic is another essential function. Your services then receive traffic they can process directly, which spares them the cost of decryption.

- Access Control: Some Ingress Controllers offer access control features, giving you control over who can reach your services and how permissions are managed.

Additional Capabilities of Ingress Controllers

Ingress Controllers offer a wide range of functionality beyond basic routing that improves the efficiency and governance of your Kubernetes services.

- Configurable Traffic Routing Rules: You can define custom routing rules that direct your services' traffic based on parameters such as URL path and host.

- Health Checks: Several Ingress Controllers monitor the health of your services and can redirect traffic away from a backend that becomes unresponsive.

- Traffic Management: Some Ingress Controllers include traffic management features, such as rate limiting, to protect your services from excessive load.

- Built-in Web Application Firewall (WAF): Certain Ingress Controllers come with an integrated WAF for an extra layer of security.

- Service Mesh Compatibility: Advanced Ingress Controllers integrate with service mesh frameworks such as Istio or Linkerd, adding functions such as advanced traffic management, circuit breaking, and mutual TLS.
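The traffic-management bullet usually means rate limiting. One common technique is the token bucket. Each client gets a bucket that refills at a fixed rate, and each request spends one token. The sketch below is a generic illustration with hypothetical parameters, not the algorithm of any particular controller. Time is passed in explicitly so the behaviour is deterministic.

```python
class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` per second."""

    def __init__(self, rate: float, capacity: float):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full
        self.last = 0.0

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` may proceed."""
        # Refill according to the time elapsed, never beyond capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the limit: a proxy would typically reject the request
```

With `rate=1.0` and `capacity=2`, two back-to-back requests pass, a third at the same instant is refused, and one more is allowed after a second has elapsed.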

To sum up, an Ingress Controller is a critical part of a Kubernetes cluster. When fully understood and utilized, it improves the governance and efficiency of your services and keeps applications running smoothly.

Why Does Your Business Need an Ingress Controller?

As digital business grows, many organizations rely on cloud computing and microservices to deliver their offerings, and orchestration platforms like Kubernetes have become increasingly prevalent. However, steering traffic across these microservices is no simple task. Deploying an Ingress Controller addresses this.

Traffic Supervision: A Crucial Necessity

When numerous services interact within a microservices architecture, resilient communication paths are imperative. Guiding and monitoring these connections keeps operations smooth and helps avert network congestion, service interruptions, and security threats. Acting as a traffic guard, an Ingress Controller manages the flow of traffic and ensures that requests reach the right services.

Elevating Protection Levels

An essential feature of the Ingress Controller is improving the security of your applications. It can enforce SSL/TLS encryption, securing data in transit between your users and your services. Many controllers can also enforce authentication and authorization, adding another safeguard for your applications.

Distributing Workloads

In a busy system, not all services receive the same volume of requests; some handle a much larger inbound flow. The Ingress Controller helps by spreading each service's requests evenly across its replicas, so that no single instance is overwhelmed. This balance improves the efficiency and reliability of your applications.

Streamlining Service Discovery

In a microservices environment, services come and go dynamically, and keeping track of all the active ones is not always easy. The Ingress Controller simplifies this by providing a unified access point for all services, so clients can reach them without tracking individual instances.

Cutting Back on Operational Expenses

Beyond streamlining traffic management and strengthening security, an Ingress Controller improves performance, which can lead to noticeable savings on operations. It reduces dependency on additional hardware, trims operational costs, and lowers the likelihood of costly security breaches.

Here's an illustrative comparison:

| Without an Ingress Controller | With a Functional Ingress Controller |
|---|---|
| Chaotic traffic coordination | Simplified traffic workflow |
| Potential exposure to security risks | Enhanced security with SSL/TLS encryption and access regulations |
| Disproportionate load distribution | Fair spread of workload |
| Difficulties in locating services | Uncomplicated service discovery |
| Higher operational outlay | Cost-effective operations |

In conclusion, an Ingress Controller plays a vital role in a Kubernetes setup. It organizes traffic flow, strengthens security, improves performance, and can lead to significant operational savings. For a business running a microservices architecture, deploying an Ingress Controller is not merely advantageous but fundamental.

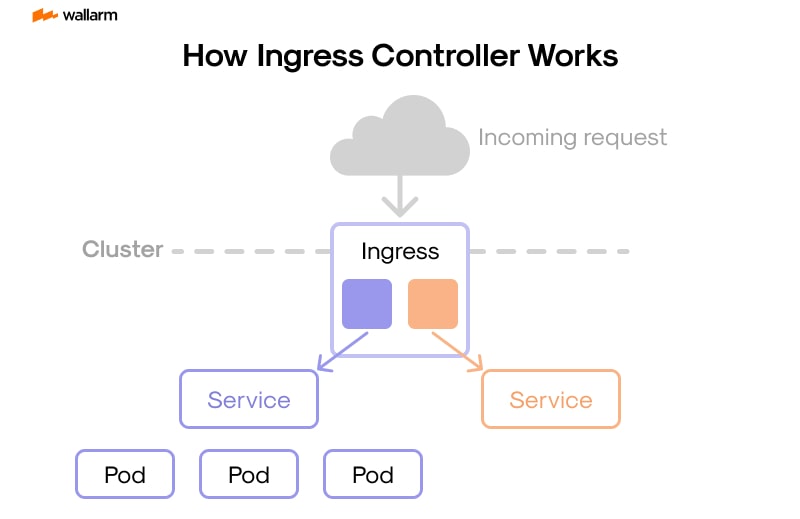

Understanding How Ingress Controller Works

Within Kubernetes, Ingress Controllers carry substantial importance. Their primary job is to direct external traffic to the applications and services running inside a Kubernetes cluster. They do this over HTTP and HTTPS, based on the request's host name or URL path. Think of them as traffic directors regulating the flow of requests into the cluster.

Examining the Underlying Operations of an Ingress Controller

- Receiving the Request: The process begins when an external HTTP/HTTPS request arrives, usually from an end user trying to reach a service inside the Kubernetes cluster. The controller receives this request.

- Interpreting the Request: Next, the controller evaluates the request against the routing rules defined in Ingress Configurations, the Kubernetes resources that hold all the routing rules you have configured.

- Appraisal and Delegation: The Ingress Controller identifies which backend should receive the request and forwards it to the appropriate service within the Kubernetes cluster.

- Returning the Response: The service processes the request and sends a reply. The controller relays that response back to the client that made the request.
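The four steps can be walked through with a toy model. In the sketch below, each "service" is just a Python function from request path to `(status, body)`, and rules are keyed by a hypothetical `(host, path prefix)` pair. Matching uses plain string prefixes, a simplification of Kubernetes' element-wise Prefix rule. The 404 reply stands in for what a controller's default backend would return.

```python
from typing import Callable, Dict, Tuple

# A "service" here is just a function from request path to (status, body).
Handler = Callable[[str], Tuple[int, str]]


def handle_request(routes: Dict[Tuple[str, str], Handler],
                   host: str, path: str) -> Tuple[int, str]:
    """Walk one request through receive, interpret, delegate, and respond."""
    # Steps 1-2: receive the request and compare it with the configured rules
    # (plain string-prefix matching, a simplification).
    matches = [(prefix, handler)
               for (rule_host, prefix), handler in routes.items()
               if rule_host == host and path.startswith(prefix)]
    if not matches:
        # No rule applies; a real controller would use its default backend.
        return 404, "no matching rule"
    # Step 3: delegate to the service with the most specific (longest) prefix.
    _, handler = max(matches, key=lambda m: len(m[0]))
    # Step 4: relay the service's reply back to the client unchanged.
    return handler(path)
```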

The Integral Role of Ingress Configurations

Ingress Configurations (Ingress resources) underpin an Ingress Controller's operations by holding the instructions for routing inbound traffic. Each routing rule has three main parts:

- Host: the domain name in the request, such as 'xyz.com'.

- Path: the URL path of the request, e.g., '/home'.

- Backend: the service inside the Kubernetes cluster that handles the request.

Here's a sample Ingress Configuration:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: sample-config
spec:
  rules:
    - host: "xyz.com"
      http:
        paths:
          - pathType: Prefix
            path: "/home"
            backend:
              service:
                name: home-service
                port:
                  number: 8080
```

In this example, all requests to 'xyz.com/home' (and to paths beneath it) are directed to 'home-service' on port 8080.

Pivotal Nature of Ingress Controllers

The Ingress Controller enforces the rules outlined in the Ingress Configurations. It constantly watches the Kubernetes API for changes to those configurations and adjusts its settings as needed.
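Watching for changes boils down to a reconcile step. The controller compares the routes the Ingress Configurations ask for with the routes the proxy currently serves, then adds, updates, or removes entries. The sketch below shows only that diff, under stated assumptions. Routes are modelled as a dict from a hypothetical `(host, path)` key to a `"service:port"` string. The watch itself, which a real controller performs through the Kubernetes API, is left out.

```python
from typing import Dict, List, Tuple

Key = Tuple[str, str]          # hypothetical (host, path) route key
Action = Tuple[str, Key, str]  # (verb, key, "service:port" target)


def reconcile(desired: Dict[Key, str], current: Dict[Key, str]) -> List[Action]:
    """Diff the routes Ingress objects ask for against what the proxy serves."""
    actions: List[Action] = []
    for key, target in sorted(desired.items()):
        if key not in current:
            actions.append(("add", key, target))
        elif current[key] != target:
            actions.append(("update", key, target))
    # Anything the proxy still serves but no Ingress mentions gets removed.
    for key in sorted(current.keys() - desired.keys()):
        actions.append(("remove", key, current[key]))
    return actions


def apply_actions(current: Dict[Key, str], actions: List[Action]) -> Dict[Key, str]:
    """Return the proxy's new route table after applying the actions."""
    updated = dict(current)
    for verb, key, target in actions:
        if verb == "remove":
            updated.pop(key, None)
        else:
            updated[key] = target
    return updated
```

Running `reconcile` again after `apply_actions` yields an empty list. That is the steady state a control loop aims for.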

A wide array of Ingress Controllers is available, each with its own advantages and features. NGINX, Traefik, and HAProxy are among the most popular.

Despite their diversity, these controllers share the same core job: receiving requests, routing them, forwarding them, and returning responses according to the rules in the Ingress Configurations.

In essence, the Ingress Controller acts as the guardian of external access to the services within a Kubernetes cluster. A firm grasp of how it works can improve traffic handling and help keep your applications running smoothly and continuously.

A Deep Dive into the Deployment of Ingress Controllers

Building a functional and effective Kubernetes environment requires a careful choice of Ingress Controller, the component that governs how traffic reaches your containerized applications. The right controller improves the robustness and performance of your platform. This section covers the fundamentals of deploying an Ingress Controller, walks through the main steps, and compares popular options.

Preparing the Environment

An Ingress Controller works best in a properly prepared Kubernetes environment. That means a cluster with at least one worker node and a control plane (master) node, a team familiar with operating it, and a correctly configured kubectl tool for issuing commands.

Constructing a Solid Traffic Guidance System

- Identifying the Optimum Access Governor: The initial phase entails examining multiple Access Governors, recognizing their unique capabilities and the clientele they serve. Renowned picks comprise NGINX, Traefik, and HAProxy. You should lean towards a component that aligns with your operational needs and discovers untapped capabilities in your application.

- Incorporating the Selected Governor: Having chosen an Access Governor that fulfill your prerequisites, the consequent step is its integration into your Kubernetes deployment plan. This stage necessitates the fabrication of a YAML configuration file, applicable through the kubectl apply command. This document assembles all fundamental attributes required by the Governor, encompassing tasks, amenities, and Access routes.

- Configuring the Controller: After the controller is running, tailor the setup to your application's traffic needs. This usually means writing Ingress rules that direct incoming requests to the right Services.

- Testing the Controller: Finally, verify that the controller routes traffic to your application correctly. Send requests to the controller's external IP address and confirm that the responses come from the expected service.
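The final testing step can be sketched in Python with only the standard library. This is a minimal sketch, assuming the controller is reachable at a known external IP; the address 203.0.113.10 (a documentation range) and the hostname www.custom.com are placeholders. Setting the Host header lets the controller match the request against the host in your Ingress rules even before DNS points at the controller.

```python
from __future__ import annotations

import urllib.request


def build_probe(controller_ip: str, host: str, path: str = "/") -> urllib.request.Request:
    """Build a request aimed at the controller's IP, carrying the Ingress host name."""
    # The Host header is what the controller matches against the rule's host field.
    return urllib.request.Request(f"http://{controller_ip}{path}", headers={"Host": host})


def probe(controller_ip: str, host: str, path: str = "/", timeout: float = 5.0) -> tuple[int, str]:
    """Send the probe and return (HTTP status, response body as text)."""
    request = build_probe(controller_ip, host, path)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, response.read().decode("utf-8", errors="replace")
```

For example, probe("203.0.113.10", "www.custom.com") returns the status and body served through the matching rule. Note that urlopen raises urllib.error.HTTPError for 4xx and 5xx responses, so a 404 from the controller's default backend surfaces as an exception rather than a return value.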

Best Practices

- Use a Dedicated Namespace: Installing the Ingress Controller in its own namespace, separate from other cluster resources, simplifies administration.

- Perform Routine Health Checks: Regular checks catch and fix problems early, keeping the controller running smoothly.

- Enforce Security Practices: Measures such as TLS encryption and regular certificate renewal strengthen the security of the controller and the applications behind it.

- Monitor the Controller Continuously: Ongoing monitoring of the controller's activity, such as its logs and metrics, can reveal useful insights and early warnings of problems.

Alternative Ingress Controllers

| Controller | Advantages | Disadvantages |
|---|---|---|
| NGINX | Highly versatile | Setup can be tricky |
| Traefik | Simple setup and automatic HTTPS | Narrower feature set |
| HAProxy | Highly resilient, with many features | May be overly complex for basic needs |

Deploying an Ingress Controller involves more than picking the right component: you also need to install, configure, and test it. Following the steps above will help you run an Ingress Controller effectively and keep traffic flowing reliably within your Kubernetes cluster.

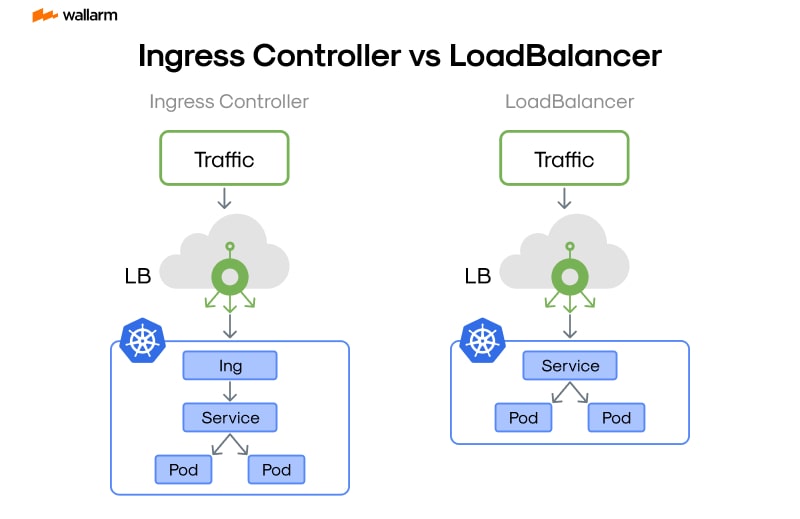

Ingress Controller vs. Service Type LoadBalancer: A Comparative Study

Two terms come up constantly in Kubernetes networking: "Service Type LoadBalancer" and "Ingress Controller". Both expose applications to outside traffic, but they work differently. This section compares their characteristics and where they overlap.

Understanding Service Type LoadBalancer

We start with Service Type LoadBalancer. In Kubernetes, a Service is an abstraction that defines a logical set of Pods and a policy for accessing them. The Pods targeted by a Service are usually chosen by a label selector.

When a Service is created, it receives a stable virtual IP (the ClusterIP) that forwards traffic to the matching Pods wherever they run. Decoupling clients from individual Pods improves resilience and flexibility.

A Service of type LoadBalancer extends this by exposing the Service outside the cluster through a load balancer provisioned by the cloud provider. Each such Service gets its own external IP, which funnels traffic to the relevant Pods.

Understanding the Ingress Controller

An Ingress Controller, by contrast, is a software component that acts as a reverse proxy, provides fine-grained traffic control, and terminates TLS for Kubernetes workloads. It enforces the rules defined in Ingress resources, which specify how external users can reach the services running inside a Kubernetes cluster.

Key Differences

With both concepts defined, here is how they differ:

- Service exposure: Each LoadBalancer Service is exposed individually with its own IP, whereas an Ingress Controller consolidates routing rules and exposes multiple services behind the same IP.

- Cost: Because each LoadBalancer Service needs its own cloud load balancer, costs grow as the number of services grows. An Ingress Controller can route traffic for many services through a single entry point, making it the more cost-effective option.

- Flexibility: Ingress Controllers handle more complex scenarios, such as URL path-based routing and traffic splitting. LoadBalancer Services are simpler but lack this flexibility.

- Security: Ingress Controllers add features such as SSL/TLS termination and user authentication. LoadBalancer Services do not provide these natively, although some cloud providers offer TLS termination through provider-specific Service annotations.

| Aspect | Ingress Controller | Service Type LoadBalancer |
|---|---|---|
| Service exposure | Unifies multiple services under a single IP | Assigns a separate IP to each service |
| Cost | Economical | Costs grow with the number of services |
| Flexibility | High (URL-based routing, traffic splitting) | Limited |
| Security add-ons | Extra features such as TLS termination and authentication | No built-in supplementary security features |
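To make "multiple services behind one IP" concrete, here is a minimal Python sketch of name-based virtual hosting: the controller inspects the Host header of each request and picks a backend Service. It follows the host-matching rules in the Kubernetes Ingress documentation, where a wildcard such as *.foo.com matches exactly one extra DNS label. Giving exact hosts precedence over wildcards, and the service names in the usage below, are assumptions made for illustration.

```python
from __future__ import annotations


def host_matches(rule_host: str, request_host: str) -> bool:
    """Check a request's Host header against one Ingress rule host."""
    # Ignore an optional ":port" suffix; DNS names compare case-insensitively.
    request_host = request_host.split(":", 1)[0].lower()
    rule_host = rule_host.lower()
    if rule_host.startswith("*."):
        suffix = rule_host[1:]  # "*.foo.com" -> ".foo.com"
        if not request_host.endswith(suffix):
            return False
        label = request_host[: -len(suffix)]
        # The wildcard stands for exactly one non-empty DNS label.
        return bool(label) and "." not in label
    return request_host == rule_host


def pick_service(rules: dict[str, str], request_host: str) -> str | None:
    """Map a Host header to a backend Service; exact hosts are tried before wildcards."""
    exact = [(h, s) for h, s in rules.items() if not h.startswith("*.")]
    wildcard = [(h, s) for h, s in rules.items() if h.startswith("*.")]
    for rule_host, service in exact + wildcard:
        if host_matches(rule_host, request_host):
            return service
    return None  # no rule matched: a controller would fall back to its default backend
```

With rules = {"*.example.com": "wildcard-svc", "shop.example.com": "shop-svc"}, a request for shop.example.com goes to shop-svc, api.example.com goes to wildcard-svc, and example.com matches neither, all on the same IP address.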

Summing Up

Ingress Controllers and LoadBalancer Services both play key roles in managing network traffic, but they differ in functionality, and each has its own strengths and weaknesses. Your choice will depend on your requirements, the complexity of your routing, and your budget.

In the next section, we look at commonly used Ingress Controllers to give you a sense of the options available.

An Overview of Commonly Used Ingress Controllers

Several Ingress Controllers have emerged as powerful, practical options, each with its own characteristics and capabilities, which makes them popular with organizations looking to improve how they manage network traffic. The following overview covers some of the most widely deployed ones, including their key features, advantages, and drawbacks.

NGINX Ingress Controller

NGINX, a widely respected open-source reverse proxy, underpins a popular Ingress Controller known for its robustness and adaptability. It offers reliability, a broad feature set, a simple setup procedure, and modest resource requirements.

- Features: The NGINX Ingress Controller supports HTTP and HTTPS and works as a reverse proxy and load balancer. It provides SSL/TLS termination, name-based virtual hosting, and path-based routing.

- Advantages: Its rich feature set gives users fine-grained control over how traffic is processed, and it performs reliably under heavy load.

- Drawbacks: Despite its power and flexibility, NGINX can be complex to configure and operate, especially for newcomers.

Traefik Ingress Controller

Traefik, another open-source option, is known for its simplicity and ease of use. It discovers your services automatically and updates its configuration as your infrastructure changes.

- Features: Like NGINX, Traefik supports HTTP and HTTPS, path-based routing, and load balancing. It can also obtain TLS certificates automatically from Let's Encrypt.

- Advantages: Traefik's main strength is its simplicity. It is easy to install, configure, and operate, which makes it a good fit for small and medium-sized deployments.

- Drawbacks: Despite its user-friendliness, Traefik may lack some of the advanced features and flexibility that other controllers offer.

HAProxy Ingress Controller

HAProxy, a free, open-source load balancer, is respected for its performance and reliability. Its strength in load balancing and efficient traffic distribution makes it a popular choice for high-traffic websites.

- Features: Like the others, HAProxy supports HTTP and HTTPS, with options for SSL/TLS termination, session persistence, and WebSocket support. It also provides detailed metrics and logs for monitoring and troubleshooting.

- Advantages: HAProxy excels in performance and reliability. It can handle high volumes of traffic with minimal impact on performance.

- Drawbacks: HAProxy can be complex to set up and administer, which may be more than basic use cases need.
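Session persistence, listed among HAProxy's features above, keeps a client pinned to the same backend across requests. The Python sketch below shows one common cookie-based approach; it is not HAProxy's implementation, and the cookie name SERVERID and the server names are hypothetical. New clients are assigned round robin and receive a cookie, while returning clients whose cookie names a live server go back to that server.

```python
from __future__ import annotations

import itertools


class StickyBalancer:
    """Cookie-based session persistence layered on round robin (illustrative only)."""

    COOKIE = "SERVERID"  # hypothetical cookie name

    def __init__(self, servers: list[str]):
        self.servers = list(servers)
        self._next = itertools.cycle(self.servers)

    def route(self, cookies: dict[str, str]) -> tuple[str, dict[str, str]]:
        """Return (chosen server, cookies to set on the response)."""
        pinned = cookies.get(self.COOKIE)
        if pinned in self.servers:
            return pinned, {}  # returning client: keep it on the same server
        server = next(self._next)  # new client, or its server is no longer available
        return server, {self.COOKIE: server}
```

The trade-off is that pinned clients are not rebalanced when load shifts, which is why persistence is usually enabled only for applications that keep per-session state on the server.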

Platform-Bundled Ingress Controllers

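Kubernetes itself does not start an Ingress Controller automatically, but some managed Kubernetes platforms bundle one; GKE, for example, provides a controller that provisions Google Cloud load balancers for Ingress resources. These bundled controllers offer basic load balancing and traffic routing for applications running on Kubernetes.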

- Features: Bundled controllers support HTTP and HTTPS, along with basic path-based routing and load distribution.

- Advantages: Because it ships with the platform, a bundled controller is easy to use and needs no separate installation. It also integrates closely with other platform components.

- Drawbacks: Bundled controllers are relatively basic and lack some advanced options offered by dedicated controllers. Their performance and scalability may not match those of the alternatives.

In conclusion, the right Ingress Controller depends on the needs of your deployment. Some teams value the simplicity of Traefik or a platform-bundled controller; others prefer the advanced features and performance of NGINX or HAProxy. Either way, understanding each controller's capabilities and limitations is key to making an informed decision.

Examining the Security Measures in an Ingress Controller

Ingress Controllers play a crucial role in cluster security. They act as the screening point for your network, helping ensure that only approved traffic reaches your services. This section examines the security features available at the Ingress Controller level and how they help keep your environment safe.

Protective Mechanisms in Ingress Controllers

Ingress Controllers offer a number of security features, from authentication and authorization to encryption and rate limiting.

- Authentication: The first step in checking whether a user or system is legitimate is verifying its credentials. Ingress Controllers support several authentication methods, including basic authentication, token-based authentication, and certificate-based authentication.

- Authorization: Once a client is authenticated, the Ingress Controller checks whether it has permission to access the requested resources.

- Encryption: Ingress Controllers use SSL/TLS to protect data in transit, preventing unauthorized parties from reading it.

- Rate Limiting: Rate limiting caps how many requests the Ingress Controller accepts within a given period, which helps mitigate Denial-of-Service (DoS) attacks.

A Closer Look at Authentication and Authorization

Authentication and authorization form the first line of defense in an Ingress Controller, keeping malicious users and systems away from network resources.

- Basic Authentication: The simplest method: users supply a valid username and password, which the client sends Base64-encoded in the HTTP Authorization header. Base64 is an encoding, not encryption, so basic authentication is only safe when the connection is protected by TLS.

- Token-based Authentication: After a successful initial login, the user receives a token to present with subsequent requests, so the username and password do not have to be sent each time.

- Certificate-based Authentication: A stronger method in which the client proves its identity with a certificate signed by a trusted Certificate Authority (CA), as in mutual TLS.
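The Python sketch below illustrates the first two methods. The first half shows why basic authentication needs TLS: the Authorization header is only Base64-encoded, so anyone who intercepts it can recover the password. The second half uses a hypothetical HMAC-signed token format purely to illustrate token-based authentication; production systems typically rely on standard formats such as JWT and on well-tested libraries.

```python
from __future__ import annotations

import base64
import hashlib
import hmac
import time


def basic_auth_header(username: str, password: str) -> str:
    """Build an HTTP Basic Authorization header value (RFC 7617)."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def decode_basic_auth(header: str) -> tuple[str, str]:
    """Anyone holding the header can do this: Base64 is not encryption."""
    scheme, _, encoded = header.partition(" ")
    if scheme != "Basic":
        raise ValueError("not a Basic Authorization header")
    username, _, password = base64.b64decode(encoded).decode("utf-8").partition(":")
    return username, password


# Hypothetical token format for illustration only: "<user>.<expiry>.<hex HMAC-SHA256>".
def issue_token(secret: bytes, username: str, ttl_s: int, now: float | None = None) -> str:
    expiry = int((time.time() if now is None else now) + ttl_s)
    payload = f"{username}.{expiry}"
    signature = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}.{signature}"


def verify_token(secret: bytes, token: str, now: float | None = None) -> str | None:
    """Return the username if the token is authentic and unexpired, else None."""
    parts = token.rsplit(".", 2)
    if len(parts) != 3:
        return None
    username, expiry, signature = parts
    expected = hmac.new(secret, f"{username}.{expiry}".encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None
    current = time.time() if now is None else now
    if not expiry.isdigit() or int(expiry) < current:
        return None
    return username
```

Because the token is signed rather than stored, the verifier only needs the shared secret; changing the username or expiry invalidates the signature.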

Encrypting Data in Transit

Another layer of protection is encryption: the Ingress Controller uses SSL/TLS to secure data as it crosses the network, so intercepted traffic remains unreadable without the corresponding decryption key.
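Encryption only protects traffic if clients also verify whom they are talking to; otherwise an attacker can present a certificate of their own. Below is a minimal Python sketch of a strict client-side TLS context for calling a service exposed through the Ingress Controller, assuming you may need to trust a private CA (the optional cafile argument).

```python
from __future__ import annotations

import ssl


def strict_client_context(cafile: str | None = None) -> ssl.SSLContext:
    """TLS settings for a client calling a service exposed through the Ingress Controller."""
    # create_default_context() verifies the certificate chain and the hostname.
    context = ssl.create_default_context(cafile=cafile)
    # Refuse legacy protocol versions (SSLv3, TLS 1.0, TLS 1.1).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

The context can be passed to urllib.request.urlopen(url, context=...) or http.client.HTTPSConnection(host, context=...).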

Rate Limiting to Mitigate DoS Attacks

Rate limiting helps prevent DoS attacks by controlling how many requests the Ingress Controller accepts. When a client exceeds the configured limit, the extra requests are queued or rejected outright, which protects the system from overload.
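A token bucket is one common way to implement this kind of limit, and the Python sketch below uses it for illustration. Note that this is a swapped-in technique: NGINX's own limit_req module, which the NGINX-based controllers build on, uses a leaky-bucket algorithm. Keying buckets by client IP and injecting a clock (so the behavior can be tested) are design assumptions of this sketch.

```python
from __future__ import annotations

import time
from typing import Callable


class TokenBucket:
    """Admit about `rate` requests per second, with bursts of up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at the bucket size.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the limit: the caller rejects or queues the request


class PerClientLimiter:
    """One bucket per client IP, so a single noisy client cannot starve the others."""

    def __init__(self, rate: float, capacity: int, clock: Callable[[], float] = time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.buckets: dict[str, TokenBucket] = {}

    def allow(self, client_ip: str) -> bool:
        if client_ip not in self.buckets:
            self.buckets[client_ip] = TokenBucket(self.rate, self.capacity, self.clock)
        return self.buckets[client_ip].allow()
```

A rejected request would typically receive an HTTP 429 (Too Many Requests) or 503 response; queuing instead would mean holding the request until a token becomes available.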

Access Policies Implemented by Ingress Controllers

Beyond the measures above, Ingress Controllers support access policies such as IP whitelisting, IP blacklisting, and Cross-Origin Resource Sharing (CORS). These add another layer of security by controlling who can reach your services and what they can do.

- IP Whitelisting: Only IP addresses (or address ranges) on the whitelist are granted access.

- IP Blacklisting: This works the other way around, denying access to blacklisted IP addresses.

- CORS: CORS headers tell browsers which other origins (domains) may access your resources from a web page.
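These policies reduce to simple checks, sketched below in Python with the standard ipaddress module. Evaluating the deny list before the allow list is an assumption of this sketch, as are the example address ranges used to exercise it; real controllers expose such lists through their own configuration (ingress-nginx, for instance, uses annotations). The CORS helper only decides which response headers to send; enforcement happens in the browser.

```python
from __future__ import annotations

import ipaddress


class IPPolicy:
    """Allow and deny lists of CIDR ranges; the deny list is checked first."""

    def __init__(self, allow: list[str] | None = None, deny: list[str] | None = None):
        self.allow = [ipaddress.ip_network(cidr) for cidr in (allow or [])]
        self.deny = [ipaddress.ip_network(cidr) for cidr in (deny or [])]

    def permits(self, client_ip: str) -> bool:
        address = ipaddress.ip_address(client_ip)
        if any(address in network for network in self.deny):
            return False
        # With no allow list, everyone not explicitly denied gets through.
        return not self.allow or any(address in network for network in self.allow)


def cors_headers(origin: str | None, allowed_origins: set[str]) -> dict[str, str]:
    """Response headers telling the browser whether this origin may read the response."""
    if origin is None or origin not in allowed_origins:
        return {}  # no CORS headers: the browser blocks the cross-origin read
    # Echo the specific origin and mark the response as varying by Origin for caches.
    return {"Access-Control-Allow-Origin": origin, "Vary": "Origin"}
```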

In summary, Ingress Controllers combine several mechanisms to protect the integrity, confidentiality, and availability of network resources. Understanding these measures is essential for hardening your network and data infrastructure against common threats.

Solving Networking Problems with Ingress Controllers

Networking problems are inevitable, ranging from simple connectivity issues to complex routing problems. With the right tools and knowledge, though, they can be solved effectively, and the Ingress Controller is one such tool. This chapter looks at how Ingress Controllers address common networking problems.

Understanding the Networking Problems

Before we delve into how Ingress Controllers solve networking problems, it's important to understand the common networking issues that businesses often face. These include:

- Load Balancing: This involves distributing network traffic across several servers to ensure that no single server bears too much load. Without effective load balancing, some servers may become overwhelmed, leading to poor performance and potential downtime.

- Service Discovery: In a microservices architecture, services need to discover and communicate with each other. Without an effective service discovery mechanism, this communication can become complex and inefficient.

- Traffic Routing: This involves directing client requests to the appropriate services. Without effective traffic routing, client requests may end up at the wrong service, leading to poor user experience.

- Security: Protecting the network and its services from malicious attacks is crucial. Without proper security measures, the network may become vulnerable to a variety of threats.

The Role of Ingress Controllers in Solving Networking Problems

Ingress Controllers play a crucial role in addressing these networking problems. Here's how:

- Load Balancing: Ingress Controllers distribute incoming traffic across the Pods behind each backend Service using configurable algorithms, so that no single instance is overwhelmed.

- Service Discovery: Ingress Controllers watch the Kubernetes API to learn which Services exist and which Pods currently back them, so routing stays correct as Pods come and go. (Service-to-service communication inside the cluster relies on Kubernetes Services and DNS rather than on the Ingress Controller.)

- Traffic Routing: Ingress Controllers use rules to route incoming requests to the appropriate services. This ensures that every client request reaches the right service.

- Security: Ingress Controllers provide various security features, such as SSL/TLS termination, authentication, and rate limiting, to protect the network and its services.

A Closer Look at How Ingress Controllers Solve Networking Problems

Let's take a closer look at how Ingress Controllers solve these networking problems.

Load Balancing

Ingress Controllers use various load balancing algorithms, such as round robin, least connections, and IP hash, to distribute incoming traffic. The algorithm is set in the controller's own configuration rather than in the Ingress resource, which only names the backend. Here's a simple example:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example-ingress
spec:
  rules:
  - host: www.example.com
    http:
      paths:
      - pathType: Prefix
        path: "/"
        backend:
          service:
            name: example-service
            port:
              number: 80
```

In this example, the Ingress Controller forwards all traffic for www.example.com to example-service and distributes the requests across the Pods behind that Service.
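The three algorithms named above can each be sketched in a few lines of Python. The endpoint addresses in the usage below are hypothetical Pod IPs, and the IP-hash variant uses SHA-256 purely for illustration; real controllers use their own hashing schemes, often consistent hashing so that adding a Pod reassigns as few clients as possible.

```python
from __future__ import annotations

import hashlib
import itertools


class RoundRobin:
    """Hand out endpoints in a fixed rotation."""

    def __init__(self, endpoints: list[str]):
        self._cycle = itertools.cycle(endpoints)

    def pick(self, client_ip: str | None = None) -> str:
        return next(self._cycle)


class LeastConnections:
    """Send each request to the endpoint with the fewest in-flight requests."""

    def __init__(self, endpoints: list[str]):
        self.active = {endpoint: 0 for endpoint in endpoints}

    def pick(self, client_ip: str | None = None) -> str:
        # min() returns the first endpoint among ties, so list order breaks ties.
        endpoint = min(self.active, key=self.active.__getitem__)
        self.active[endpoint] += 1
        return endpoint

    def release(self, endpoint: str) -> None:
        """Call when a request to `endpoint` finishes."""
        self.active[endpoint] -= 1


class IPHash:
    """Map each client IP to a fixed endpoint, a simple form of stickiness."""

    def __init__(self, endpoints: list[str]):
        self.endpoints = list(endpoints)

    def pick(self, client_ip: str) -> str:
        digest = hashlib.sha256(client_ip.encode()).digest()
        return self.endpoints[int.from_bytes(digest[:8], "big") % len(self.endpoints)]
```

Round robin suits uniform, short requests; least connections adapts when request durations vary; IP hash keeps a client on one Pod at the cost of less even distribution.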

Service Discovery

Ingress Controllers discover the available services and their locations by watching the Kubernetes API for Services and their endpoints. Here's a simple Service that an Ingress can reference:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: example-service
spec:
  selector:
    app: example-app
  ports:
  - protocol: TCP
    port: 80
    targetPort: 9376
```

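In this example, the Service selects Pods labeled app: example-app and exposes them on port 80, forwarding to port 9376 on the Pods. The Ingress Controller finds example-service through the API and resolves it to the addresses of the matching Pods, which listen on targetPort 9376.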
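The discovery step can be modeled in Python as below: select the Pods whose labels satisfy the Service's selector, keep the ready ones, and pair their IPs with the targetPort. In a real cluster the EndpointSlice controller performs this work and the Ingress Controller watches the result through the API; the Pod names and IPs used with this sketch are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict[str, str] = field(default_factory=dict)
    ready: bool = True


def selector_matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """Equality-based selector: every key/value pair must be present in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def resolve_endpoints(selector: dict[str, str], target_port: int, pods: list[Pod]) -> list[str]:
    """Return "ip:port" upstreams for the ready Pods a Service selects."""
    if not selector:
        return []  # Services without a selector get no automatic endpoints
    return [
        f"{pod.ip}:{target_port}"
        for pod in pods
        if pod.ready and selector_matches(selector, pod.labels)
    ]
```

Resolving {"app": "example-app"} with targetPort 9376 yields one "ip:9376" upstream per ready Pod carrying that label; Pods that fail their readiness checks drop out until they recover.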

Traffic Routing

Ingress Controllers use rules to route incoming requests to the appropriate services. These rules are defined in the Ingress resource. Here's a simple example of how an Ingress Controller performs traffic routing:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example-ingress
spec:
  rules:
  - host: www.example.com
    http:
      paths:
      - pathType: Prefix
        path: "/app1"
        backend:
          service:
            name: app1-service
            port:
              number: 80
      - pathType: Prefix
        path: "/app2"
        backend:
          service:
            name: app2-service
            port:
              number: 80
```

In this example, the Ingress Controller routes incoming requests for www.example.com/app1 to the app1-service service and requests for www.example.com/app2 to the app2-service service.
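The Prefix path type used here matches element by element: according to the Kubernetes documentation, /app1 matches /app1 and /app1/cart but not /app1x, trailing slashes are ignored, and the longest matching path wins. Here is a minimal Python sketch of that rule, leaving out the Exact path type and host matching for brevity.

```python
from __future__ import annotations


def _elements(path: str) -> list[str]:
    """Split a URL path into its elements: "/app1/cart/" -> ["app1", "cart"]."""
    return [segment for segment in path.split("/") if segment]


def prefix_matches(rule_path: str, request_path: str) -> bool:
    """Element-wise, case-sensitive prefix match: /app1 matches /app1/cart but not /app1x."""
    rule = _elements(rule_path)
    return _elements(request_path)[: len(rule)] == rule


def route(paths: dict[str, str], request_path: str) -> str | None:
    """Return the backend of the longest matching Prefix path, or None."""
    best_service, best_length = None, -1
    for rule_path, service in paths.items():
        length = len(_elements(rule_path))
        if length > best_length and prefix_matches(rule_path, request_path):
            best_service, best_length = service, length
    return best_service
```

With the two rules above, a request for /app1/cart/ reaches app1-service, while /app1x and / match no rule; a controller such as ingress-nginx would then answer from its default backend.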

Security

Ingress Controllers provide various security features to protect the network and its services. For example, they can terminate SSL/TLS connections, authenticate users, and limit the rate of requests. Here's a simple example of how an Ingress Controller provides security (the annotations shown are specific to the NGINX Ingress Controller):

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example-ingress
  annotations:
    nginx.ingress.kubernetes.io/auth-type: basic
    nginx.ingress.kubernetes.io/auth-secret: basic-auth
spec:
  rules:
  - host: www.example.com
    http:
      paths:
      - pathType: Prefix
        path: "/"
        backend:
          service:
            name: example-service
            port:
              number: 80
```

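In this example, the Ingress Controller requires HTTP basic authentication before allowing access to the example-service service. The basic-auth Secret must already exist (by default in the same namespace as the Ingress) and hold htpasswd-formatted credentials under the key auth.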

In conclusion, Ingress Controllers play a crucial role in solving networking problems. By providing load balancing, service discovery, traffic routing, and security, they ensure that the network operates efficiently and securely.

Steps Towards Installing Ingress Controller on Kubernetes

This guide walks through the essential steps for setting up an Ingress Controller in a Kubernetes environment. A solid understanding of Kubernetes and its core operations will make the setup smoother. We cover four stages, designed to fit your particular Kubernetes setup.

Chapter One: Preparing Your Kubernetes Environment

Start by setting up a working Kubernetes environment. Whether you're building a local cluster for experimentation or a cloud-based one for production, this first step is the same.

Tooling makes creating a cluster straightforward. Minikube is a common choice for local testing, and each major cloud offers a managed service: Google Kubernetes Engine (GKE) on Google Cloud, Amazon Elastic Kubernetes Service (EKS) on Amazon Web Services, and Azure Kubernetes Service (AKS) on Microsoft Azure.

Chapter Two: Installing an Ingress Controller

Once your cluster is ready, install an Ingress Controller. NGINX, Traefik, and HAProxy are all reliable choices; this guide uses NGINX as the example.

The simplest way to install the NGINX Ingress Controller is with Helm, the package manager for Kubernetes. The old stable/nginx-ingress chart is deprecated, so this example uses the chart maintained by the ingress-nginx project:

```shell
helm install custom-nginx ingress-nginx --repo https://kubernetes.github.io/ingress-nginx \
  --namespace ingress-nginx --create-namespace --set rbac.create=true
```

This command installs the controller as a Helm release named 'custom-nginx' in a dedicated ingress-nginx namespace and creates the Role-Based Access Control (RBAC) objects it needs.

Chapter Three: Verifying the Setup

Once the installation finishes, confirm that the controller's components are running by checking the pods in its namespace:

```shell
kubectl get pods -n ingress-nginx
```

The output lists the NGINX Ingress Controller pod along with its status, which should be Running.

Chapter Four: Setting up the Ingress Resource

The last step is to create and apply an Ingress resource. It holds the rules that route traffic to the various services in your Kubernetes environment.

The following manifest defines such an Ingress resource:

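```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: custom-ingress
spec:
  # IngressClass created by the ingress-nginx Helm chart (named "nginx" by default);
  # without it, the controller may ignore this Ingress.
  ingressClassName: nginx
  rules:
  - host: www.custom.com
    http:
      paths:
      - pathType: Prefix
        path: "/"
        backend:
          service:
            name: custom-service
            port:
              number: 80
```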

This configuration routes requests for 'www.custom.com' to the 'custom-service' Service on port 80. Apply it with kubectl apply, and make sure custom-service exists in the same namespace as the Ingress.

Following these stages carefully will get an Ingress Controller running in your Kubernetes environment. Validate every implementation and change to confirm that the setup is correct and aligned with your goals.

Workflows Simplified: Advancement with Ingress Controllers

Traffic management has matured with the arrival of Ingress Controllers, which replace traditional, manual approaches to network routing with more efficient and less error-prone ones. This section describes the improvements they bring.

Understanding Old Processes

The scale of this change is easier to appreciate against the history of network traffic management. Engineers used to configure load balancers and routers by hand, an onerous task prone to human error. These older approaches were rigid and hard to adapt or extend.

The Arrival of Ingress Controllers

Ingress Controllers transformed traffic management. Their primary role is to automate the handling of incoming network traffic, which reduces reliance on manual intervention. This automation makes processes more efficient and far less error-prone.

Streamlining Operations with Ingress Controllers

Ingress Controllers change day-to-day processes in several ways:

- Automated Traffic Management: Ingress Controllers apply predefined rules to incoming traffic without manual effort, which reduces human error and smooths the overall flow of operations.

- Scalability: Ingress Controllers handle changing traffic volumes, and new services can be exposed by adding rules rather than reworking the existing setup, which makes them highly adaptable.

- Flexible Customization: Ingress Controllers let you define your own traffic rules, so you can tailor routing to your specific needs.

- Enhanced Security: Features such as SSL termination and validation considerably strengthen your security posture.

Comparing Procedures

| Characteristic | Traditional Processes | Ingress Controller-Based Processes |
|---|---|---|
| Traffic management | Manual | Automated |
| Scalability | Limited | Extensive |
| Adaptability | Minimal | High |
| Security | Basic | Stronger |

Implications for Business Operations

These streamlined operations benefit businesses broadly. Organizations can manage their network traffic more efficiently, which supports continuous operation, while the scalability and security features help them grow and guard against threats.

In short, Ingress Controllers have changed how network traffic is managed, making operations more efficient, agile, and secure. Their role is likely to grow as more companies adopt containerized infrastructure.

Exploring Case Studies: Successful Applications of Ingress Controllers

The following scenarios show how adaptable and efficient Kubernetes Ingress Controllers are at routing network traffic in complex environments:

Scenario 1: Optimizing Customer Interactions for a Growing Online Retailer

A fast-growing online store needed to route website traffic across several services, including user authentication, product listings, the shopping cart, and payment processing. Each service ran separately on different servers, which made coordinating the network a serious challenge.

A Kubernetes Ingress Controller solved the problem by enforcing precise URL-based routing rules. What had been a complicated process became a streamlined one, greatly improving the website's functionality.

The Ingress Controller also strengthened the platform's security by providing SSL termination, removing the need to configure SSL separately for each service.

Scenario 2: Restructuring Traffic for a Growing Content Streaming Platform

A rapidly growing content streaming platform needed to re-architect its system to serve an expanding user base. Its monolithic architecture grew more complex as it scaled, becoming cumbersome and hard to change.

The solution was to break the monolith into smaller, self-contained microservices managed by Kubernetes. Routing traffic to these new microservices, however, was a challenge in its own right.

Once again, the Ingress Controller provided the answer. Defining routing rules through the Ingress Controller let the team scale services efficiently to serve a larger audience.

A further benefit was the Ingress Controller's load balancing, which distributed the workload evenly across the microservices, improving speed and performance.

Scenario 3: Securing Data Exchange for a Large Financial Institution

A well-known financial enterprise with a complex network configuration struggled to manage and secure its data exchange. Each of its operations had distinct security requirements, which compounded the problem.

The Ingress Controller helped the company out of this predicament by letting it define detailed traffic routing rules.

SSL termination and validation, two key features of the Ingress Controller, significantly improved the security of its data exchange.

Furthermore, the rate-limiting capability of the Ingress Controller provided an added layer of protection against potential DDoS attacks.

These examples show that Ingress Controllers are versatile tools for routing network traffic and securing applications, whether in online retail, content distribution, or finance.

FAQs about Ingress Controllers: Addressing Common Queries

Key Roles of Ingress Controllers in Kubernetes and Best Practices for Using Them

In container orchestration platforms such as Kubernetes, Ingress Controllers play an indispensable role. They connect services inside the cluster with clients outside it, handling HTTP and HTTPS traffic and applying the routing rules defined in Ingress resources.

Understanding How Ingress Controllers Work

An Ingress Controller watches the Kubernetes API for changes to Ingress resources and reconfigures its routing to match, so traffic always follows the current rules.

Ingress Controllers vs Load Balancers

Ingress Controllers and load balancers both direct traffic, but in different ways. A load balancer spreads network traffic evenly across multiple servers to prevent congestion. An Ingress Controller routes each request to a specific service according to the rules in Ingress resources.

| Ingress Controllers | Load Balancers |
|---|---|
| Follow rules defined in Ingress resources | Distribute traffic evenly across servers |
| Run within a Kubernetes cluster | Work in many kinds of network environments |
| Route external traffic to services inside the cluster | Balance load across servers |

Advantages of Deploying Ingress Controllers

Deploying an Ingress Controller gives you more control over the traffic flowing between external clients and internal services. Without one, you may need a separate load balancer for each service, which increases resource consumption and operating costs. Ingress Controllers instead offer efficient traffic management, simpler SSL termination, and support for multiple virtual host domains.

Setting Up Ingress Controllers

The setup procedure varies by implementation. A typical starting point is to deploy the Ingress Controller itself, then create an Ingress resource that defines how traffic is routed. Controller vendors usually provide detailed installation instructions in their documentation.
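As a rough illustration of the second step, the sketch below builds a minimal `networking.k8s.io/v1` Ingress manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` also accepts. The host, the service names and ports, and the default `ingressClassName` of `"nginx"` are assumptions for illustration; the class name must match an IngressClass actually installed in your cluster.

```python
import json
from typing import Dict, Tuple


def make_ingress(
    name: str,
    host: str,
    routes: Dict[str, Tuple[str, int]],
    ingress_class: str = "nginx",  # assumption: must match an IngressClass in your cluster
) -> dict:
    """Build a networking.k8s.io/v1 Ingress manifest as a plain dict.

    `routes` maps a path prefix to (service_name, service_port).
    """
    paths = [
        {
            "path": path,
            "pathType": "Prefix",
            "backend": {"service": {"name": svc, "port": {"number": port}}},
        }
        for path, (svc, port) in routes.items()
    ]
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            "ingressClassName": ingress_class,
            "rules": [{"host": host, "http": {"paths": paths}}],
        },
    }


if __name__ == "__main__":
    manifest = make_ingress(
        "shop", "shop.example.com", {"/": ("web", 80), "/api": ("api", 8080)}
    )
    print(json.dumps(manifest, indent=2))
```

Saving the printed output to a file and applying it with `kubectl apply -f` hands the rules to whichever controller owns that ingress class.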

Top Ingress Controllers on the Market

Many Ingress Controllers are available, each with its own features and capabilities. Popular choices include the NGINX Ingress Controller, Traefik, HAProxy Ingress, and Istio.

Strengthening Your Ingress Controller's Security

To strengthen your Ingress Controller's defenses, use SSL/TLS to encrypt traffic, define network policies to control how data flows, and add authentication and access control. Continuous monitoring and regular updates are also critical for preventing security vulnerabilities.
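One small, automatable check along these lines: in a `networking.k8s.io/v1` Ingress, TLS is configured through `spec.tls` entries that list hosts and a certificate `secretName`. The hypothetical helper below reports rule hosts that no TLS entry covers. It is a sketch that assumes exact host-name matching and does not handle wildcard certificates.

```python
from typing import List


def hosts_missing_tls(ingress: dict) -> List[str]:
    """Return rule hosts in an Ingress manifest not listed in any spec.tls entry.

    Assumption: exact string matching only; wildcard hosts are not expanded.
    """
    spec = ingress.get("spec", {})
    covered = {h for entry in spec.get("tls", []) for h in entry.get("hosts", [])}
    hosts = {rule["host"] for rule in spec.get("rules", []) if "host" in rule}
    return sorted(hosts - covered)


example = {
    "spec": {
        "tls": [{"hosts": ["shop.example.com"], "secretName": "shop-tls"}],
        "rules": [{"host": "shop.example.com"}, {"host": "admin.example.com"}],
    }
}
print(hosts_missing_tls(example))
```

Running a check like this in CI before manifests are applied can catch a host that would otherwise be served over plain HTTP.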

In conclusion, understanding the role of Ingress Controllers and how to deploy them can substantially improve how you manage traffic within Kubernetes and similar platforms. This article aims to explain that role and how to implement Ingress Controllers successfully.

Top Ingress Controller Providers in the Market Today

As network routing and Kubernetes continue to evolve, several companies have emerged as leading providers of Ingress Controllers. Their offerings, each with distinct features, advantages, and potential drawbacks, are worth exploring.

Leading Ingress Controller Providers

The NGINX Solution

NGINX, a widely acclaimed open-source project, is known for its popular Ingress Controller, a staple of many Kubernetes deployments. It is built on the well-regarded NGINX reverse proxy and load balancer, valued for its high performance and stability.

The NGINX Ingress Controller handles a range of traffic management tasks, such as content-based routing, session persistence, and SSL/TLS termination. It also includes security features such as rate limiting, JWT authentication (available with the commercial NGINX Plus edition), and IP-based access control.
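To show what rate limiting does at its core, here is a token-bucket limiter in Python. This is a swapped-in illustration, not NGINX's code: NGINX's `limit_req` module documents a leaky-bucket method, which is closely related. The `rate` and `capacity` parameters and the injectable `clock` are assumptions chosen so the behavior is easy to test.

```python
import time
from typing import Callable


class TokenBucket:
    """Token-bucket limiter: `rate` requests per second, bursts up to `capacity`."""

    def __init__(
        self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic
    ) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill tokens for the elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1  # spend one token on this request
            return True
        return False  # over the limit: a proxy would typically answer 429 or 503
```

In a proxy, one bucket is usually kept per client key (such as the source IP), so one noisy client cannot exhaust the limit for everyone else.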

The High-Speed Alternative: HAProxy

HAProxy, another leading Ingress Controller provider, is known for its very fast request processing. For organizations that rely on real-time applications and microservices, HAProxy is often a top choice.

HAProxy's Ingress Controller offers advanced traffic management, including host- and path-based routing, persistent (sticky) sessions, and sophisticated load-balancing algorithms. It also provides strong security and protocol features, including DDoS mitigation, SSL/TLS offloading, and HTTP/2 support.
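Persistent sessions can be implemented in several ways, for example with a cookie or by hashing the client's address. Below is a minimal source-IP hashing sketch. It is not HAProxy's implementation, and the backend names are hypothetical. The same client always maps to the same backend as long as the backend list does not change.

```python
import hashlib
from typing import List


def pick_sticky_backend(client_ip: str, backends: List[str]) -> str:
    """Map a client to a backend by hashing its IP, so repeat requests stick."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    # Use the first 8 bytes of the digest as an integer and reduce it modulo the pool size.
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]
```

One caveat of plain modulo hashing: adding or removing a backend remaps most clients. Consistent hashing, or cookie-based persistence, reduces that churn.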

Simplicity with Traefik

Traefik, a modern HTTP reverse proxy and load balancer, is known for being simple and intuitive to use. This makes its Ingress Controller a popular choice for organizations adopting Kubernetes for the first time.

Traefik supports a range of protocols, including HTTP, HTTPS, and gRPC. It also offers automatic service discovery and dynamic configuration updates. However, it may lack some of the advanced security and traffic management features found in other options.

Kong's Extensibility

Kong, a scalable, cloud-native, and fast distributed API gateway (sometimes described as a microservice abstraction layer or API middleware), provides an Ingress Controller designed to secure, control, and extend Kubernetes workloads.

Kong's Ingress Controller supports an extensive library of plugins, letting organizations add features such as logging, request and response transformations, rate limiting, and authentication. Its support for WebSocket, gRPC, and TCP traffic also makes it suitable for a broad range of applications.
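The plugin model can be pictured as a chain of small functions that each inspect a request and either pass it on or reject it. The sketch below shows that idea only. It is not Kong's plugin API, and `require_api_key`, `log_request`, and `run_plugins` are hypothetical names.

```python
from typing import Callable, Dict, List, Optional, Set

Request = Dict[str, str]
# A plugin returns an error string to reject the request, or None to let it pass.
Plugin = Callable[[Request], Optional[str]]


def require_api_key(valid_keys: Set[str]) -> Plugin:
    """Hypothetical auth plugin: reject requests without a known API key."""
    def plugin(req: Request) -> Optional[str]:
        return None if req.get("apikey") in valid_keys else "401 Unauthorized"
    return plugin


def log_request(log: List[str]) -> Plugin:
    """Hypothetical logging plugin: record method and path, never rejects."""
    def plugin(req: Request) -> Optional[str]:
        log.append(f"{req.get('method', 'GET')} {req.get('path', '/')}")
        return None
    return plugin


def run_plugins(plugins: List[Plugin], req: Request) -> str:
    """Run plugins in order; the first rejection short-circuits the chain."""
    for plugin in plugins:
        error = plugin(req)
        if error is not None:
            return error
    return "200 OK (forwarded upstream)"
```

Because each plugin is independent, features can be added or reordered per route without changing the upstream service.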

Integrate with AWS Application Load Balancer

For organizations running on Amazon Web Services (AWS), the AWS Application Load Balancer (ALB) Ingress Controller, now maintained as the AWS Load Balancer Controller, is a natural choice. It integrates seamlessly with other AWS services and makes the advanced features of the Application Load Balancer available to Kubernetes workloads.

It supports host- and path-based routing, WebSocket and HTTP/2 traffic, and SSL/TLS termination. It also offers traffic management features such as slow start, a choice of load-balancing algorithms, and sticky sessions.
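Slow start gives a newly registered target time to warm up before it receives its full share of traffic. The sketch below models this as a linear ramp from 0 to 1 over the slow-start window and normalizes the result into traffic shares. The linear ramp is an assumption for illustration, and `slow_start_weight` and `traffic_shares` are hypothetical helpers, not part of any AWS API.

```python
from typing import Dict


def slow_start_weight(seconds_since_registered: float, slow_start_duration: float) -> float:
    """Fraction of its normal weight a target receives; ramps linearly 0 -> 1.

    A duration of 0 disables slow start (full weight immediately).
    """
    if slow_start_duration <= 0:
        return 1.0
    return max(0.0, min(1.0, seconds_since_registered / slow_start_duration))


def traffic_shares(targets: Dict[str, float], slow_start_duration: float) -> Dict[str, float]:
    """Turn {target: seconds since registration} into traffic shares summing to 1."""
    weights = {t: slow_start_weight(age, slow_start_duration) for t, age in targets.items()}
    total = sum(weights.values())
    return {t: w / total for t, w in weights.items()} if total else weights
```

For example, with a 30-second window, a target registered 15 seconds ago has half the weight of a fully warmed-up neighbor, so it gets one third of the traffic between the two.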

In summary, choosing an Ingress Controller provider should be guided by a combination of factors, including your organization's specific requirements, the complexity of your Kubernetes setup, and your budget. Understanding each provider's strengths and weaknesses is the first step toward a decision that best fits your needs.

Making Future Projections: The Evolution of Ingress Controllers

Over the coming years, Ingress Controllers are expected to change significantly in how they handle application traffic. Growing reliance on cloud-native applications, combined with the increasing complexity of microservices architectures, calls for more nuanced and flexible traffic management solutions.

Intelligent Routing: The New Direction

Early Ingress Controllers were mainly tasked with directing traffic from the public network to the appropriate backend services based on the request URL. But as applications grew more complex and distributed, the need for smarter routing became apparent.

Future Ingress Controllers are expected to make routing decisions based on multiple factors, such as the load on backend services, the user's geographic location, or the details of the request itself. This would allow more efficient use of resources and better performance for end users.
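A simple version of such multi-factor routing fits in a few lines. The sketch below combines two of the factors mentioned: it prefers the least-loaded backend in the user's region and falls back to any region when no local backend has spare capacity. The `Backend` fields, region labels, and the 0.8 load threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Backend:
    name: str
    region: str
    load: float  # current utilization, 0.0 (idle) to 1.0 (saturated)


def choose_backend(
    backends: List[Backend], user_region: str, max_load: float = 0.8
) -> Optional[Backend]:
    """Prefer the least-loaded backend in the user's region, else the least-loaded anywhere."""
    available = [b for b in backends if b.load < max_load]
    local = [b for b in available if b.region == user_region]
    pool = local or available  # fall back to other regions if no local capacity
    return min(pool, key=lambda b: b.load) if pool else None
```

Real systems would also weigh latency, health checks, and data-residency rules, but the structure (filter, prefer local, then pick the best score) stays similar.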

Enhanced Security Features

As cyber threats evolve, the security features built into Ingress Controllers must keep pace. Future versions are expected to include more sophisticated protections, such as integrated web application firewalls (WAFs) and automated threat detection and response.

These advances aim to shield applications from external threats while also identifying and mitigating vulnerabilities within the applications themselves, strengthening the security posture of the overall system.

Harmonizing with Service Meshes

Service meshes such as Istio and Linkerd are increasingly used to manage communication between microservices. Alongside this trend, we expect closer integration between Ingress Controllers and service meshes.

This integration enables finer-grained traffic control, such as canary releases and blue-green deployments, reducing the risk of rolling out new service versions.
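The core of a canary release is a weighted split between a stable and a new version. The sketch below assumes each request carries a user ID and hashes it into one of 100 buckets. Hashing, rather than random selection per request, keeps each user on the same version. A blue-green switch is the special case of moving the weight from 0 to 100 in one step.

```python
import hashlib


def choose_version(user_id: str, canary_percent: int) -> str:
    """Deterministically send roughly `canary_percent`% of users to the canary.

    Each user lands in a fixed bucket 0..99, so raising the percentage only
    moves additional users to the canary; nobody flips back and forth.
    """
    bucket = int(hashlib.sha256(user_id.encode("utf-8")).hexdigest(), 16) % 100
    return "canary" if bucket < canary_percent else "stable"
```

Rolling out then means raising `canary_percent` step by step (for example 1, 10, 50, 100) while watching error rates, and setting it back to 0 if something goes wrong.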

Machine Learning Makes its Way

Machine learning is expected to have a profound impact on the development of Ingress Controllers. Using machine learning algorithms, advanced Ingress Controllers could forecast traffic trends and automatically adjust routing rules to improve performance.
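The forecast-then-adjust loop can be sketched without a full ML model. The example below uses an exponentially weighted moving average (EWMA), a classical smoothing technique standing in for a learned model, to predict the next interval's request rate. If that prediction exceeds the primary pool's capacity, it computes what fraction of traffic to shift to an overflow pool. The `alpha` value, the capacity figure, and the overflow-pool idea are hypothetical.

```python
from typing import List


def ewma_forecast(history: List[float], alpha: float = 0.5) -> float:
    """Forecast the next value as an exponentially weighted moving average."""
    if not history:
        raise ValueError("history must not be empty")
    forecast = history[0]
    for value in history[1:]:
        # Recent observations weigh more as alpha approaches 1.
        forecast = alpha * value + (1 - alpha) * forecast
    return forecast


def overflow_share(forecast_rps: float, primary_capacity_rps: float) -> float:
    """Fraction of traffic to route to an overflow pool if the forecast exceeds capacity."""
    if forecast_rps <= primary_capacity_rps:
        return 0.0
    return 1 - primary_capacity_rps / forecast_rps
```

For instance, a forecast of 150 requests per second against a primary capacity of 100 suggests shifting about one third of traffic ahead of time, instead of reacting only after the primary pool is overloaded.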

This would be especially useful when traffic is volatile and unpredictable, such as during an e-commerce site's flash sales.

Wrapping Up

Ingress Controllers have an exciting future ahead, with many advances on the horizon. As applications grow more complex, Ingress Controllers will become even more essential for managing application traffic. By keeping pace with this evolution, organizations can take full advantage of what Ingress Controllers offer.

In the subsequent segment, we will delve deeper into some frequently asked questions about Ingress Controllers, offering lucid and succinct answers that help deepen your understanding of this crucial technology.
